Compare commits

97 Commits

| SHA1 |
|---|
| e66ece993f |
| 0686860624 |
| 24ce46b380 |
| a49bf81ff2 |
| 64501fd7f1 |
| db3c0b0907 |
| edda117a7a |
| cdface0dd5 |
| be6afe2d3a |
| 9163780000 |
| d7aa7dfe64 |
| f1decb531d |
| 99399c698b |
| 1f5f42f216 |
| 9082c4702f |
| 6cedcfbf77 |
| 8a631f045e |
| a6a2ee5b6b |
| 016708276c |
| 4cfdc4c513 |
| 0f257c9308 |
| c8104b6e78 |
| 1a1d731043 |
| c5a000d2ae |
| 94d1924fa9 |
| 6c1cf68bca |
| 395af051bd |
| 42fd66675e |
| 21a3f3699b |
| d168b2acac |
| 2ce8233921 |
| 697a4fa8a4 |
| 2f83c6c7d1 |
| 127f414e9c |
| 33c4ccffab |
| bafe7f5a09 |
| baf41112d1 |
| a90dde94e1 |
| 7dfbfc7227 |
| b10843d051 |
| 520ac8f4dc |
| 537a6e50e9 |
| 2d0cbdf1a8 |
| 5afb562aa3 |
| db069c3d4a |
| fae40c7e2f |
| 0c43b592dc |
| 2ab8924e2d |
| 0e31cfa784 |
| 8f7ffcf350 |
| 9c8507a0fd |
| e9b2cab088 |
| d3ccacccb1 |
| df386c8fbc |
| 4d15dd6e17 |
| 56a0499636 |
| 10fc4768e8 |
| 2b63d7d10d |
| 1f177528c1 |
| fc3bbb70a3 |
| ce3cab0295 |
| c784e5285e |
| 2bf9055cae |
| 8aba5aed4f |
| 0ce7cf5e10 |
| 96edcbccd7 |
| 4603afb6de |
| 56317b00af |
| cacec9c1f3 |
| 44ee07f0b2 |
| 6a8d5e1731 |
| d9962f65b3 |
| 119e88d87b |
| 71d9e010d9 |
| 5718caa957 |
| efd8a32ed6 |
| b22d700e16 |
| ccdacea0c4 |
| 4bdcbc1cb5 |
| 833c6cf2ec |
| dd6dbdd90a |
| 63013cc565 |
| 912402364a |
| 159f51b12b |
| 7678a91b0e |
| b13899c63d |
| 3a0d882c5e |
| cb81f0ad6d |
| 518bacf628 |
| ca63b03e55 |
| cecef88d6b |
| 7ffd805a03 |
| a7e2a0c981 |
| 2a570bb4ca |
| 5ca8f0706d |
| a9b4436cdc |
| 5f91999512 |

52

README.md


@@ -47,6 +47,7 @@ turn almost any device into a file server with resumable uploads/downloads using

|

||||

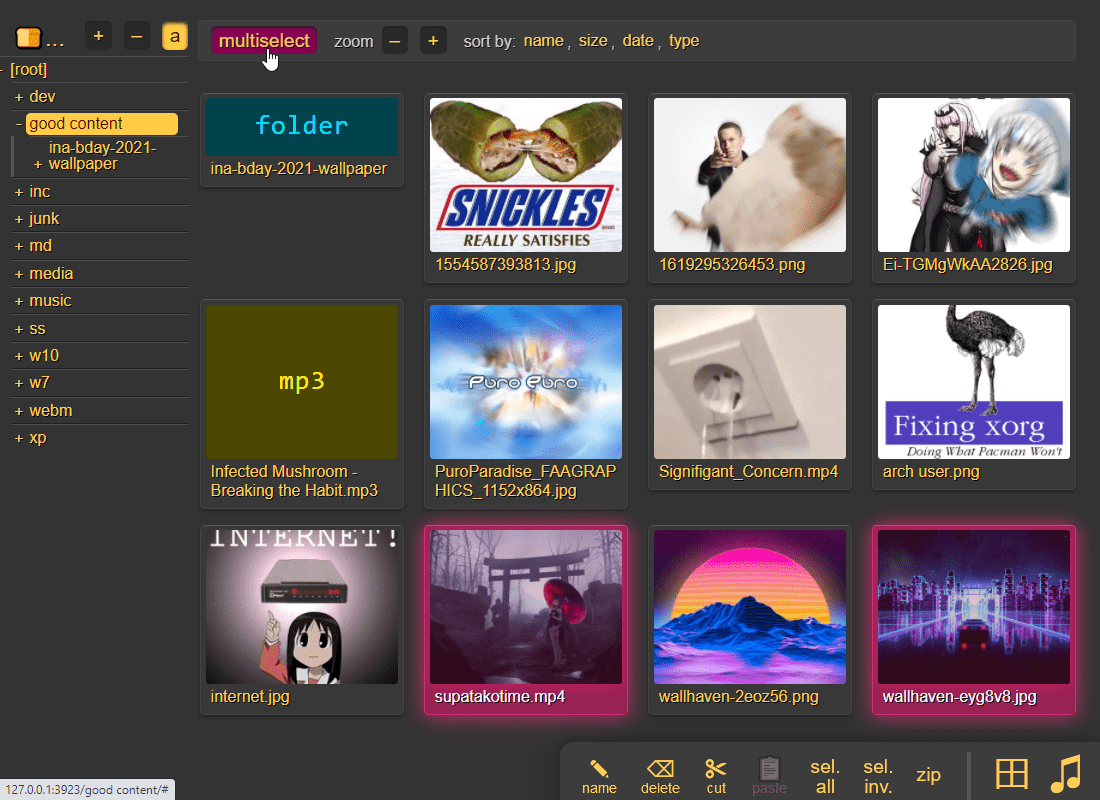

* [file manager](#file-manager) - cut/paste, rename, and delete files/folders (if you have permission)

|

||||

* [shares](#shares) - share a file or folder by creating a temporary link

|

||||

* [batch rename](#batch-rename) - select some files and press `F2` to bring up the rename UI

|

||||

* [rss feeds](#rss-feeds) - monitor a folder with your RSS reader

|

||||

* [media player](#media-player) - plays almost every audio format there is

|

||||

* [audio equalizer](#audio-equalizer) - and [dynamic range compressor](https://en.wikipedia.org/wiki/Dynamic_range_compression)

|

||||

* [fix unreliable playback on android](#fix-unreliable-playback-on-android) - due to phone / app settings

|

||||

@@ -338,6 +339,9 @@ same order here too

|

||||

|

||||

* [Chrome issue 1352210](https://bugs.chromium.org/p/chromium/issues/detail?id=1352210) -- plaintext http may be faster at filehashing than https (but also extremely CPU-intensive)

|

||||

|

||||

* [Chrome issue 383568268](https://issues.chromium.org/issues/383568268) -- filereaders in webworkers can OOM / crash the browser-tab

|

||||

* copyparty has a workaround which seems to work well enough

|

||||

|

||||

* [Firefox issue 1790500](https://bugzilla.mozilla.org/show_bug.cgi?id=1790500) -- entire browser can crash after uploading ~4000 small files

|

||||

|

||||

* Android: music playback randomly stops due to [battery usage settings](#fix-unreliable-playback-on-android)

|

||||

@@ -427,7 +431,7 @@ configuring accounts/volumes with arguments:


 permissions:
 * `r` (read): browse folder contents, download files, download as zip/tar, see filekeys/dirkeys
-* `w` (write): upload files, move files *into* this folder
+* `w` (write): upload files, move/copy files *into* this folder
 * `m` (move): move files/folders *from* this folder
 * `d` (delete): delete files/folders
 * `.` (dots): user can ask to show dotfiles in directory listings

@@ -507,7 +511,8 @@ the browser has the following hotkeys (always qwerty)

 * `ESC` close various things
 * `ctrl-K` delete selected files/folders
 * `ctrl-X` cut selected files/folders
-* `ctrl-V` paste
+* `ctrl-C` copy selected files/folders to clipboard
+* `ctrl-V` paste (move/copy)
 * `Y` download selected files
 * `F2` [rename](#batch-rename) selected file/folder
 * when a file/folder is selected (in not-grid-view):

@@ -576,6 +581,7 @@ click the `🌲` or pressing the `B` hotkey to toggle between breadcrumbs path (

|

||||

|

||||

press `g` or `田` to toggle grid-view instead of the file listing and `t` toggles icons / thumbnails

|

||||

* can be made default globally with `--grid` or per-volume with volflag `grid`

|

||||

* enable by adding `?imgs` to a link, or disable with `?imgs=0`

|

||||

|

||||

|

||||

|

||||

@@ -755,10 +761,11 @@ file selection: click somewhere on the line (not the link itself), then:

 * shift-click another line for range-select

 * cut: select some files and `ctrl-x`
+* copy: select some files and `ctrl-c`
 * paste: `ctrl-v` in another folder
 * rename: `F2`

-you can move files across browser tabs (cut in one tab, paste in another)
+you can copy/move files across browser tabs (cut/copy in one tab, paste in another)


 ## shares

@@ -845,6 +852,30 @@ or a mix of both:

|

||||

the metadata keys you can use in the format field are the ones in the file-browser table header (whatever is collected with `-mte` and `-mtp`)

|

||||

|

||||

|

||||

## rss feeds

|

||||

|

||||

monitor a folder with your RSS reader, optionally recursive

|

||||

|

||||

must be enabled per-volume with volflag `rss` or globally with `--rss`

|

||||

|

||||

the feed includes itunes metadata for use with podcast readers such as [AntennaPod](https://antennapod.org/)

|

||||

|

||||

a feed example: https://cd.ocv.me/a/d2/d22/?rss&fext=mp3

|

||||

|

||||

url parameters:

|

||||

|

||||

* `pw=hunter2` for password auth

|

||||

* `recursive` to also include subfolders

|

||||

* `title=foo` changes the feed title (default: folder name)

|

||||

* `fext=mp3,opus` only include mp3 and opus files (default: all)

|

||||

* `nf=30` only show the first 30 results (default: 250)

|

||||

* `sort=m` sort by mtime (file last-modified), newest first (default)

|

||||

* `u` = upload-time; NOTE: non-uploaded files have upload-time `0`

|

||||

* `n` = filename

|

||||

* `a` = filesize

|

||||

* uppercase = reverse-sort; `M` = oldest file first

|

||||
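The url parameters listed above combine into an ordinary query string. The following is a minimal sketch, assuming value-less options such as `recursive` are sent as bare keys next to `rss`; `rss_url` is a hypothetical helper for illustration and not part of copyparty.

```python
from urllib.parse import urlencode


def rss_url(folder_url, flags=(), **params):
    """Build a feed URL for a folder (folder_url should end with '/')."""
    # `rss` itself is a bare key; other value-less flags follow it
    parts = ["rss"] + list(flags)
    if params:
        # keep commas literal so fext=mp3,opus stays readable
        parts.append(urlencode(params, safe=","))
    return folder_url + "?" + "&".join(parts)


# reproduces the feed example from the README text
print(rss_url("https://cd.ocv.me/a/d2/d22/", fext="mp3"))
# recursive feed, two extensions, 30 results, oldest file first
print(rss_url("https://cd.ocv.me/a/d2/d22/", ["recursive"], fext="mp3,opus", nf=30, sort="M"))
```

Passing `pw=...` the same way would put the password in the URL, so that is probably only appropriate for feeds you do not mind sharing with the reader software.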

|

||||

|

||||

## media player

|

||||

|

||||

plays almost every audio format there is (if the server has FFmpeg installed for on-demand transcoding)

|

||||

@@ -1069,11 +1100,12 @@ using the GUI (winXP or later):

|

||||

* on winXP only, click the `Sign up for online storage` hyperlink instead and put the URL there

|

||||

* providing your password as the username is recommended; the password field can be anything or empty

|

||||

|

||||

known client bugs:

|

||||

the webdav client that's built into windows has the following list of bugs; you can avoid all of these by connecting with rclone instead:

|

||||

* win7+ doesn't actually send the password to the server when reauthenticating after a reboot unless you first try to login with an incorrect password and then switch to the correct password

|

||||

* or just type your password into the username field instead to get around it entirely

|

||||

* connecting to a folder which allows anonymous read will make writing impossible, as windows has decided it doesn't need to login

|

||||

* workaround: connect twice; first to a folder which requires auth, then to the folder you actually want, and leave both of those mounted

|

||||

* or set the server-option `--dav-auth` to force password-auth for all webdav clients

|

||||

* win7+ may open a new tcp connection for every file and sometimes forgets to close them, eventually needing a reboot

|

||||

* maybe NIC-related (??), happens with win10-ltsc on e1000e but not virtio

|

||||

* windows cannot access folders which contain filenames with invalid unicode or forbidden characters (`<>:"/\|?*`), or names ending with `.`

|

||||

@@ -1240,7 +1272,7 @@ note:

|

||||

|

||||

### exclude-patterns

|

||||

|

||||

to save some time, you can provide a regex pattern for filepaths to only index by filename/path/size/last-modified (and not the hash of the file contents) by setting `--no-hash \.iso$` or the volflag `:c,nohash=\.iso$`, this has the following consequences:

|

||||

to save some time, you can provide a regex pattern for filepaths to only index by filename/path/size/last-modified (and not the hash of the file contents) by setting `--no-hash '\.iso$'` or the volflag `:c,nohash=\.iso$`, this has the following consequences:

|

||||

* initial indexing is way faster, especially when the volume is on a network disk

|

||||

* makes it impossible to [file-search](#file-search)

|

||||

* if someone uploads the same file contents, the upload will not be detected as a dupe, so it will not get symlinked or rejected

|

||||
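The only change in this hunk is the added shell quoting around the regex. A small sketch of why that matters, using `shlex` to mimic POSIX shell tokenizing and assuming the pattern is applied with search semantics (the sample paths are made up):

```python
import re
import shlex

# how a POSIX shell tokenizes the old and new spelling from the diff
unquoted = shlex.split(r"--no-hash \.iso$")[1]
quoted = shlex.split(r"--no-hash '\.iso$'")[1]
print(unquoted)  # the backslash was consumed by the shell
print(quoted)    # the regex arrives intact

# hypothetical filepaths, only for illustration
paths = ["isos/debian.iso", "recipes/miso", "music/song.flac"]
for name, pattern in (("unquoted", unquoted), ("quoted", quoted)):
    hits = [p for p in paths if re.search(pattern, p)]
    print(name, hits)
```

Without the quotes the dot becomes a wildcard, so a file named `miso` would also be excluded from hashing.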

@@ -1251,6 +1283,8 @@ similarly, you can fully ignore files/folders using `--no-idx [...]` and `:c,noi

|

||||

|

||||

if you set `--no-hash [...]` globally, you can enable hashing for specific volumes using flag `:c,nohash=`

|

||||

|

||||

to exclude certain filepaths from search-results, use `--srch-excl` or volflag `srch_excl` instead of `--no-idx`, for example `--srch-excl 'password|logs/[0-9]'`

|

||||

|

||||

### filesystem guards

|

||||

|

||||

avoid traversing into other filesystems using `--xdev` / volflag `:c,xdev`, skipping any symlinks or bind-mounts to another HDD for example

|

||||

@@ -1457,7 +1491,9 @@ replace copyparty passwords with oauth and such

|

||||

|

||||

you can disable the built-in password-based login system, and instead replace it with a separate piece of software (an identity provider) which will then handle authenticating / authorizing of users; this makes it possible to login with passkeys / fido2 / webauthn / yubikey / ldap / active directory / oauth / many other single-sign-on contraptions

|

||||

|

||||

a popular choice is [Authelia](https://www.authelia.com/) (config-file based), another one is [authentik](https://goauthentik.io/) (GUI-based, more complex)

|

||||

* the regular config-defined users will be used as a fallback for requests which don't include a valid (trusted) IdP username header

|

||||

|

||||

some popular identity providers are [Authelia](https://www.authelia.com/) (config-file based) and [authentik](https://goauthentik.io/) (GUI-based, more complex)

|

||||

|

||||

there is a [docker-compose example](./docs/examples/docker/idp-authelia-traefik) which is hopefully a good starting point (alternatively see [./docs/idp.md](./docs/idp.md) if you're the DIY type)

|

||||

|

||||

@@ -1659,6 +1695,7 @@ scrape_configs:

|

||||

currently the following metrics are available,

|

||||

* `cpp_uptime_seconds` time since last copyparty restart

|

||||

* `cpp_boot_unixtime_seconds` same but as an absolute timestamp

|

||||

* `cpp_active_dl` number of active downloads

|

||||

* `cpp_http_conns` number of open http(s) connections

|

||||

* `cpp_http_reqs` number of http(s) requests handled

|

||||

* `cpp_sus_reqs` number of 403/422/malicious requests

|

||||

@@ -1908,6 +1945,9 @@ quick summary of more eccentric web-browsers trying to view a directory index:

|

||||

| **ie4** and **netscape** 4.0 | can browse, upload with `?b=u`, auth with `&pw=wark` |

|

||||

| **ncsa mosaic** 2.7 | does not get a pass, [pic1](https://user-images.githubusercontent.com/241032/174189227-ae816026-cf6f-4be5-a26e-1b3b072c1b2f.png) - [pic2](https://user-images.githubusercontent.com/241032/174189225-5651c059-5152-46e9-ac26-7e98e497901b.png) |

|

||||

| **SerenityOS** (7e98457) | hits a page fault, works with `?b=u`, file upload not-impl |

|

||||

| **nintendo 3ds** | can browse, upload, view thumbnails (thx bnjmn) |

|

||||

|

||||

<p align="center"><img src="https://github.com/user-attachments/assets/88deab3d-6cad-4017-8841-2f041472b853" /></p>

|

||||

|

||||

|

||||

# client examples

|

||||

|

||||

@@ -2,7 +2,7 @@ standalone programs which are executed by copyparty when an event happens (uploa


 these programs either take zero arguments, or a filepath (the affected file), or a json message with filepath + additional info

-run copyparty with `--help-hooks` for usage details / hook type explanations (xm/xbu/xau/xiu/xbr/xar/xbd/xad/xban)
+run copyparty with `--help-hooks` for usage details / hook type explanations (xm/xbu/xau/xiu/xbc/xac/xbr/xar/xbd/xad/xban)

 > **note:** in addition to event hooks (the stuff described here), copyparty has another api to run your programs/scripts while providing way more information such as audio tags / video codecs / etc and optionally daisychaining data between scripts in a processing pipeline; if that's what you want then see [mtp plugins](../mtag/) instead


@@ -393,7 +393,8 @@ class Gateway(object):

         if r.status != 200:
             self.closeconn()
             info("http error %s reading dir %r", r.status, web_path)
-            raise FuseOSError(errno.ENOENT)
+            err = errno.ENOENT if r.status == 404 else errno.EIO
+            raise FuseOSError(err)

         ctype = r.getheader("Content-Type", "")
         if ctype == "application/json":

@@ -1128,7 +1129,7 @@ def main():

|

||||

|

||||

# dircache is always a boost,

|

||||

# only want to disable it for tests etc,

|

||||

cdn = 9 # max num dirs; 0=disable

|

||||

cdn = 24 # max num dirs; keep larger than max dir depth; 0=disable

|

||||

cds = 1 # numsec until an entry goes stale

|

||||

|

||||

where = "local directory"

|

||||

|

||||
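The two settings in this hunk (`cdn`, max number of cached dirs, and `cds`, seconds until an entry goes stale) describe a small bounded cache with a time-to-live. The sketch below is one way such a cache could look; `DirCache` and its methods are hypothetical and not the code in the patched file. Presumably the limit should exceed the directory depth because resolving a deep path touches every ancestor, and a smaller cache would evict them before they are reused.

```python
import time
from collections import OrderedDict


class DirCache:
    """Bounded directory-listing cache with per-entry expiry (illustrative)."""

    def __init__(self, max_dirs=24, max_age=1.0):
        self.max_dirs = max_dirs  # 0 disables caching
        self.max_age = max_age    # seconds until an entry goes stale
        self.entries = OrderedDict()  # path -> (stored_at, listing)

    def get(self, path, now=None):
        now = time.time() if now is None else now
        hit = self.entries.get(path)
        if hit is None or now - hit[0] > self.max_age:
            self.entries.pop(path, None)  # forget stale entries
            return None
        return hit[1]

    def put(self, path, listing, now=None):
        if self.max_dirs <= 0:
            return
        now = time.time() if now is None else now
        self.entries.pop(path, None)
        self.entries[path] = (now, listing)
        while len(self.entries) > self.max_dirs:
            self.entries.popitem(last=False)  # evict the oldest entry


cache = DirCache(max_dirs=2, max_age=1.0)
cache.put("/music", ["a.opus"], now=0.0)
print(cache.get("/music", now=0.5))  # still fresh
print(cache.get("/music", now=2.0))  # stale, so None
```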

97

bin/u2c.py


@@ -1,8 +1,8 @@

 #!/usr/bin/env python3
 from __future__ import print_function, unicode_literals

-S_VERSION = "2.4"
-S_BUILD_DT = "2024-10-16"
+S_VERSION = "2.7"
+S_BUILD_DT = "2024-12-06"

 """
 u2c.py: upload to copyparty

@@ -154,6 +154,7 @@ class HCli(object):

|

||||

self.tls = tls

|

||||

self.verify = ar.te or not ar.td

|

||||

self.conns = []

|

||||

self.hconns = []

|

||||

if tls:

|

||||

import ssl

|

||||

|

||||

@@ -173,7 +174,7 @@ class HCli(object):

|

||||

"User-Agent": "u2c/%s" % (S_VERSION,),

|

||||

}

|

||||

|

||||

def _connect(self):

|

||||

def _connect(self, timeout):

|

||||

args = {}

|

||||

if PY37:

|

||||

args["blocksize"] = 1048576

|

||||

@@ -185,9 +186,11 @@ class HCli(object):

|

||||

if self.ctx:

|

||||

args = {"context": self.ctx}

|

||||

|

||||

return C(self.addr, self.port, timeout=999, **args)

|

||||

return C(self.addr, self.port, timeout=timeout, **args)

|

||||

|

||||

def req(self, meth, vpath, hdrs, body=None, ctype=None):

|

||||

now = time.time()

|

||||

|

||||

hdrs.update(self.base_hdrs)

|

||||

if self.ar.a:

|

||||

hdrs["PW"] = self.ar.a

|

||||

@@ -198,7 +201,11 @@ class HCli(object):

|

||||

0 if not body else body.len if hasattr(body, "len") else len(body)

|

||||

)

|

||||

|

||||

c = self.conns.pop() if self.conns else self._connect()

|

||||

# large timeout for handshakes (safededup)

|

||||

conns = self.hconns if ctype == MJ else self.conns

|

||||

while conns and self.ar.cxp < now - conns[0][0]:

|

||||

conns.pop(0)[1].close()

|

||||

c = conns.pop()[1] if conns else self._connect(999 if ctype == MJ else 128)

|

||||

try:

|

||||

c.request(meth, vpath, body, hdrs)

|

||||

if PY27:

|

||||

@@ -207,8 +214,15 @@ class HCli(object):

|

||||

rsp = c.getresponse()

|

||||

|

||||

data = rsp.read()

|

||||

self.conns.append(c)

|

||||

conns.append((time.time(), c))

|

||||

return rsp.status, data.decode("utf-8")

|

||||

except http_client.BadStatusLine:

|

||||

if self.ar.cxp > 4:

|

||||

t = "\nWARNING: --cxp probably too high; reducing from %d to 4"

|

||||

print(t % (self.ar.cxp,))

|

||||

self.ar.cxp = 4

|

||||

c.close()

|

||||

raise

|

||||

except:

|

||||

c.close()

|

||||

raise

|

||||
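The `HCli.req` hunks above replace a plain list of connections with timestamped ones, so that connections idle for longer than `--cxp` seconds are closed instead of reused. The sketch below isolates that idea; `IdlePool` and `FakeConn` are hypothetical names for illustration, and the real code additionally keeps a separate pool with a longer timeout for handshakes.

```python
import time


class FakeConn:
    """Stand-in for an http connection, only for this example."""

    def __init__(self):
        self.closed = False

    def close(self):
        self.closed = True


class IdlePool:
    def __init__(self, max_idle_sec, connect):
        self.max_idle = max_idle_sec
        self.connect = connect
        self.idle = []  # (returned_at, conn), oldest first

    def acquire(self, now=None):
        now = time.time() if now is None else now
        # drop connections which the server has probably closed already
        while self.idle and self.max_idle < now - self.idle[0][0]:
            self.idle.pop(0)[1].close()
        # reuse the most recently returned connection, else open a new one
        return self.idle.pop()[1] if self.idle else self.connect()

    def release(self, conn, now=None):
        now = time.time() if now is None else now
        self.idle.append((now, conn))


pool = IdlePool(57, FakeConn)
c1 = pool.acquire(now=0)
pool.release(c1, now=0)
print(pool.acquire(now=10) is c1)   # reused while still fresh
pool.release(c1, now=10)
print(pool.acquire(now=100) is c1)  # expired, so a new connection is made
```

Expiring from the front while reusing from the back keeps the freshest connection in play, which is the same order the diff uses (`pop(0)` to close, `pop()` to reuse).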

@@ -870,9 +884,10 @@ def upload(fsl, stats, maxsz):

|

||||

if sc >= 400:

|

||||

raise Exception("http %s: %s" % (sc, txt))

|

||||

finally:

|

||||

fsl.f.close()

|

||||

if nsub != -1:

|

||||

fsl.unsub()

|

||||

if fsl.f:

|

||||

fsl.f.close()

|

||||

if nsub != -1:

|

||||

fsl.unsub()

|

||||

|

||||

|

||||

class Ctl(object):

|

||||

@@ -1018,8 +1033,8 @@ class Ctl(object):

|

||||

handshake(self.ar, file, False)

|

||||

|

||||

def _fancy(self):

|

||||

atexit.register(self.cleanup_vt100)

|

||||

if VT100 and not self.ar.ns:

|

||||

atexit.register(self.cleanup_vt100)

|

||||

ss.scroll_region(3)

|

||||

|

||||

Daemon(self.hasher)

|

||||

@@ -1027,6 +1042,7 @@ class Ctl(object):

|

||||

Daemon(self.handshaker)

|

||||

Daemon(self.uploader)

|

||||

|

||||

last_sp = -1

|

||||

while True:

|

||||

with self.exit_cond:

|

||||

self.exit_cond.wait(0.07)

|

||||

@@ -1065,6 +1081,12 @@ class Ctl(object):

|

||||

else:

|

||||

txt = " "

|

||||

|

||||

if not VT100: # OSC9;4 (taskbar-progress)

|

||||

sp = int(self.up_b * 100 / self.nbytes) or 1

|

||||

if last_sp != sp:

|

||||

last_sp = sp

|

||||

txt += "\033]9;4;1;%d\033\\" % (sp,)

|

||||

|

||||

if not self.up_br:

|

||||

spd = self.hash_b / ((time.time() - self.t0) or 1)

|

||||

eta = (self.nbytes - self.hash_b) / (spd or 1)

|

||||
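The `OSC9;4` lines added in this hunk emit the taskbar-progress escape sequence understood by some terminals (ConEmu and Windows Terminal, as far as I know). A minimal sketch of the two sequences involved, with hypothetical helper names and assuming `total` is greater than zero:

```python
def taskbar_progress(done, total):
    """Return the OSC 9;4 sequence which sets progress to done/total percent."""
    # 0 is bumped to 1, mirroring the `or 1` in the diff
    pct = int(done * 100 / total) or 1
    return "\033]9;4;1;%d\033\\" % (pct,)


def taskbar_clear():
    """Return the OSC 9;4 sequence which removes the progress indicator."""
    return "\033]9;4;0\033\\"


print(repr(taskbar_progress(50, 200)))
print(repr(taskbar_clear()))
```

The diff only appends the sequence when the percentage changed since the last status line (`last_sp`), which avoids writing it to the console on every refresh.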

@@ -1075,11 +1097,15 @@ class Ctl(object):

|

||||

|

||||

spd = humansize(spd)

|

||||

self.eta = str(datetime.timedelta(seconds=int(eta)))

|

||||

if eta > 2591999:

|

||||

self.eta = self.eta.split(",")[0] # truncate HH:MM:SS

|

||||

sleft = humansize(self.nbytes - self.up_b)

|

||||

nleft = self.nfiles - self.up_f

|

||||

tail = "\033[K\033[u" if VT100 and not self.ar.ns else "\r"

|

||||

|

||||

t = "%s eta @ %s/s, %s, %d# left\033[K" % (self.eta, spd, sleft, nleft)

|

||||

if not self.hash_b:

|

||||

t = " now hashing..."

|

||||

eprint(txt + "\033]0;{0}\033\\\r{0}{1}".format(t, tail))

|

||||

|

||||

if self.ar.wlist:

|

||||
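The eta change in this hunk shortens very long estimates: 2591999 seconds is one second short of 30 days, and `str()` of a `timedelta` places the day count before a comma. A small sketch of that formatting rule (`fmt_eta` is a hypothetical name):

```python
import datetime


def fmt_eta(seconds):
    """Format an ETA; from 30 days and up, keep only the day count."""
    eta = str(datetime.timedelta(seconds=int(seconds)))
    if seconds > 2591999:
        eta = eta.split(",")[0]  # drop the HH:MM:SS part
    return eta


print(fmt_eta(3661))
print(fmt_eta(2591999))
print(fmt_eta(2592000))
```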

@@ -1100,7 +1126,10 @@ class Ctl(object):

|

||||

handshake(self.ar, file, False)

|

||||

|

||||

def cleanup_vt100(self):

|

||||

ss.scroll_region(None)

|

||||

if VT100:

|

||||

ss.scroll_region(None)

|

||||

else:

|

||||

eprint("\033]9;4;0\033\\")

|

||||

eprint("\033[J\033]0;\033\\")

|

||||

|

||||

def cb_hasher(self, file, ofs):

|

||||

@@ -1115,7 +1144,9 @@ class Ctl(object):

|

||||

isdir = stat.S_ISDIR(inf.st_mode)

|

||||

if self.ar.z or self.ar.drd:

|

||||

rd = rel if isdir else os.path.dirname(rel)

|

||||

srd = rd.decode("utf-8", "replace").replace("\\", "/")

|

||||

srd = rd.decode("utf-8", "replace").replace("\\", "/").rstrip("/")

|

||||

if srd:

|

||||

srd += "/"

|

||||

if prd != rd:

|

||||

prd = rd

|

||||

ls = {}

|

||||
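The `srd` change in this hunk normalizes the relative directory so that it is either empty or ends with exactly one slash, which lets later code concatenate it without adding a separator of its own. A sketch of the effect, where `remote_prefix` and the `vpath` value are hypothetical:

```python
def remote_prefix(rd):
    """Turn a relative dir (bytes) into '' or a path ending with one '/'."""
    srd = rd.decode("utf-8", "replace").replace("\\", "/").rstrip("/")
    if srd:
        srd += "/"
    return srd


vpath = "inc/"  # made-up volume path, only for illustration
for rd in (b"", b"photos", b"photos\\2024\\"):
    print(vpath + remote_prefix(rd) + "a.jpg")
```

With the previous `srd + "/" + x` form, an empty `srd` would have produced a doubled slash after a `vpath` that already ends with one.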

@@ -1138,7 +1169,10 @@ class Ctl(object):

|

||||

|

||||

if self.ar.drd:

|

||||

dp = os.path.join(top, rd)

|

||||

lnodes = set(os.listdir(dp))

|

||||

try:

|

||||

lnodes = set(os.listdir(dp))

|

||||

except:

|

||||

lnodes = list(ls) # fs eio; don't delete

|

||||

if ptn:

|

||||

zs = dp.replace(sep, b"/").rstrip(b"/") + b"/"

|

||||

zls = [zs + x for x in lnodes]

|

||||

@@ -1147,11 +1181,11 @@ class Ctl(object):

|

||||

bnames = [x for x in ls if x not in lnodes and x != b".hist"]

|

||||

vpath = self.ar.url.split("://")[-1].split("/", 1)[-1]

|

||||

names = [x.decode("utf-8", WTF8) for x in bnames]

|

||||

locs = [vpath + srd + "/" + x for x in names]

|

||||

locs = [vpath + srd + x for x in names]

|

||||

while locs:

|

||||

req = locs

|

||||

while req:

|

||||

print("DELETING ~%s/#%s" % (srd, len(req)))

|

||||

print("DELETING ~%s#%s" % (srd, len(req)))

|

||||

body = json.dumps(req).encode("utf-8")

|

||||

sc, txt = web.req(

|

||||

"POST", self.ar.url + "?delete", {}, body, MJ

|

||||

@@ -1496,6 +1530,7 @@ source file/folder selection uses rsync syntax, meaning that:

|

||||

ap.add_argument("--szm", type=int, metavar="MiB", default=96, help="max size of each POST (default is cloudflare max)")

|

||||

ap.add_argument("-nh", action="store_true", help="disable hashing while uploading")

|

||||

ap.add_argument("-ns", action="store_true", help="no status panel (for slow consoles and macos)")

|

||||

ap.add_argument("--cxp", type=float, metavar="SEC", default=57, help="assume http connections expired after SEConds")

|

||||

ap.add_argument("--cd", type=float, metavar="SEC", default=5, help="delay before reattempting a failed handshake/upload")

|

||||

ap.add_argument("--safe", action="store_true", help="use simple fallback approach")

|

||||

ap.add_argument("-z", action="store_true", help="ZOOMIN' (skip uploading files if they exist at the destination with the ~same last-modified timestamp, so same as yolo / turbo with date-chk but even faster)")

|

||||

@@ -1515,6 +1550,38 @@ source file/folder selection uses rsync syntax, meaning that:

|

||||

except:

|

||||

pass

|

||||

|

||||

# msys2 doesn't uncygpath absolute paths with whitespace

|

||||

if not VT100:

|

||||

zsl = []

|

||||

for fn in ar.files:

|

||||

if re.search("^/[a-z]/", fn):

|

||||

fn = r"%s:\%s" % (fn[1:2], fn[3:])

|

||||

zsl.append(fn.replace("/", "\\"))

|

||||

ar.files = zsl

|

||||

|

||||

fok = []

|

||||

fng = []

|

||||

for fn in ar.files:

|

||||

if os.path.exists(fn):

|

||||

fok.append(fn)

|

||||

elif VT100:

|

||||

fng.append(fn)

|

||||

else:

|

||||

# windows leaves glob-expansion to the invoked process... okayyy let's get to work

|

||||

from glob import glob

|

||||

|

||||

fns = glob(fn)

|

||||

if fns:

|

||||

fok.extend(fns)

|

||||

else:

|

||||

fng.append(fn)

|

||||

|

||||

if fng:

|

||||

t = "some files/folders were not found:\n %s"

|

||||

raise Exception(t % ("\n ".join(fng),))

|

||||

|

||||

ar.files = fok

|

||||

|

||||

if ar.drd:

|

||||

ar.dr = True

|

||||

|

||||

|

||||
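The msys2 workaround added in this hunk rewrites cygwin-style absolute paths such as `/c/...` into Windows drive paths before checking whether the files exist. The same transformation in isolation (`uncygpath` is a hypothetical name, and the sample paths are made up):

```python
import re


def uncygpath(fn):
    """Convert an msys2-style path to a Windows-style one."""
    if re.search("^/[a-z]/", fn):
        # "/c/foo" becomes "c:\foo"
        fn = r"%s:\%s" % (fn[1:2], fn[3:])
    return fn.replace("/", "\\")


print(uncygpath("/c/Users/me/my files"))
print(uncygpath("some/relative dir"))
```

Anything still missing after this step is handed to `glob` in the diff, since Windows shells leave wildcard expansion to the invoked program.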

@@ -1,6 +1,6 @@

 # Maintainer: icxes <dev.null@need.moe>
 pkgname=copyparty
-pkgver="1.15.7"
+pkgver="1.16.4"
 pkgrel=1
 pkgdesc="File server with accelerated resumable uploads, dedup, WebDAV, FTP, TFTP, zeroconf, media indexer, thumbnails++"
 arch=("any")

@@ -21,7 +21,7 @@ optdepends=("ffmpeg: thumbnails for videos, images (slower) and audio, music tag

 )
 source=("https://github.com/9001/${pkgname}/releases/download/v${pkgver}/${pkgname}-${pkgver}.tar.gz")
 backup=("etc/${pkgname}.d/init" )
-sha256sums=("b492f91e3e157d30b17c5cddad43e9a64f8e18e1ce0a05b50c09ca5eba843a56")
+sha256sums=("a2f2902f5d453330f9bc9bdd238d2f8d9fb748d284f94dcf56c79de15670e017")

 build() {
     cd "${srcdir}/${pkgname}-${pkgver}"

@@ -1,5 +1,5 @@

 {
-    "url": "https://github.com/9001/copyparty/releases/download/v1.15.7/copyparty-sfx.py",
-    "version": "1.15.7",
-    "hash": "sha256-j5zkMkrN/lPxXGBe2cUfIh6fFXM4lgkFLIt3TCJb3Mg="
+    "url": "https://github.com/9001/copyparty/releases/download/v1.16.4/copyparty-sfx.py",
+    "version": "1.16.4",
+    "hash": "sha256-fHb+ePAXci+MiuJWsP/y7IaUc68wcWjEgJ7R/l9h8qY="
 }

@@ -80,6 +80,7 @@ web/deps/prismd.css

 web/deps/scp.woff2
 web/deps/sha512.ac.js
 web/deps/sha512.hw.js
+web/iiam.gif
 web/md.css
 web/md.html
 web/md.js

@@ -50,6 +50,8 @@ from .util import (

|

||||

PARTFTPY_VER,

|

||||

PY_DESC,

|

||||

PYFTPD_VER,

|

||||

RAM_AVAIL,

|

||||

RAM_TOTAL,

|

||||

SQLITE_VER,

|

||||

UNPLICATIONS,

|

||||

Daemon,

|

||||

@@ -684,6 +686,8 @@ def get_sects():

|

||||

\033[36mxbu\033[35m executes CMD before a file upload starts

|

||||

\033[36mxau\033[35m executes CMD after a file upload finishes

|

||||

\033[36mxiu\033[35m executes CMD after all uploads finish and volume is idle

|

||||

\033[36mxbc\033[35m executes CMD before a file copy

|

||||

\033[36mxac\033[35m executes CMD after a file copy

|

||||

\033[36mxbr\033[35m executes CMD before a file rename/move

|

||||

\033[36mxar\033[35m executes CMD after a file rename/move

|

||||

\033[36mxbd\033[35m executes CMD before a file delete

|

||||

@@ -874,8 +878,9 @@ def get_sects():

|

||||

use argon2id with timecost 3, 256 MiB, 4 threads, version 19 (0x13/v1.3)

|

||||

|

||||

\033[36m--ah-alg scrypt\033[0m # which is the same as:

|

||||

\033[36m--ah-alg scrypt,13,2,8,4\033[0m

|

||||

use scrypt with cost 2**13, 2 iterations, blocksize 8, 4 threads

|

||||

\033[36m--ah-alg scrypt,13,2,8,4,32\033[0m

|

||||

use scrypt with cost 2**13, 2 iterations, blocksize 8, 4 threads,

|

||||

and allow using up to 32 MiB RAM (ram=cost*blksz roughly)

|

||||

|

||||

\033[36m--ah-alg sha2\033[0m # which is the same as:

|

||||

\033[36m--ah-alg sha2,424242\033[0m

|

||||

@@ -1037,7 +1042,7 @@ def add_network(ap):

|

||||

else:

|

||||

ap2.add_argument("--freebind", action="store_true", help="allow listening on IPs which do not yet exist, for example if the network interfaces haven't finished going up. Only makes sense for IPs other than '0.0.0.0', '127.0.0.1', '::', and '::1'. May require running as root (unless net.ipv6.ip_nonlocal_bind)")

|

||||

ap2.add_argument("--s-thead", metavar="SEC", type=int, default=120, help="socket timeout (read request header)")

|

||||

ap2.add_argument("--s-tbody", metavar="SEC", type=float, default=186.0, help="socket timeout (read/write request/response bodies). Use 60 on fast servers (default is extremely safe). Disable with 0 if reverse-proxied for a 2%% speed boost")

|

||||

ap2.add_argument("--s-tbody", metavar="SEC", type=float, default=128.0, help="socket timeout (read/write request/response bodies). Use 60 on fast servers (default is extremely safe). Disable with 0 if reverse-proxied for a 2%% speed boost")

|

||||

ap2.add_argument("--s-rd-sz", metavar="B", type=int, default=256*1024, help="socket read size in bytes (indirectly affects filesystem writes; recommendation: keep equal-to or lower-than \033[33m--iobuf\033[0m)")

|

||||

ap2.add_argument("--s-wr-sz", metavar="B", type=int, default=256*1024, help="socket write size in bytes")

|

||||

ap2.add_argument("--s-wr-slp", metavar="SEC", type=float, default=0.0, help="debug: socket write delay in seconds")

|

||||

@@ -1078,7 +1083,7 @@ def add_cert(ap, cert_path):

|

||||

def add_auth(ap):

|

||||

ses_db = os.path.join(E.cfg, "sessions.db")

|

||||

ap2 = ap.add_argument_group('IdP / identity provider / user authentication options')

|

||||

ap2.add_argument("--idp-h-usr", metavar="HN", type=u, default="", help="bypass the copyparty authentication checks and assume the request-header \033[33mHN\033[0m contains the username of the requesting user (for use with authentik/oauth/...)\n\033[1;31mWARNING:\033[0m if you enable this, make sure clients are unable to specify this header themselves; must be washed away and replaced by a reverse-proxy")

|

||||

ap2.add_argument("--idp-h-usr", metavar="HN", type=u, default="", help="bypass the copyparty authentication checks if the request-header \033[33mHN\033[0m contains a username to associate the request with (for use with authentik/oauth/...)\n\033[1;31mWARNING:\033[0m if you enable this, make sure clients are unable to specify this header themselves; must be washed away and replaced by a reverse-proxy")

|

||||

ap2.add_argument("--idp-h-grp", metavar="HN", type=u, default="", help="assume the request-header \033[33mHN\033[0m contains the groupname of the requesting user; can be referenced in config files for group-based access control")

|

||||

ap2.add_argument("--idp-h-key", metavar="HN", type=u, default="", help="optional but recommended safeguard; your reverse-proxy will insert a secret header named \033[33mHN\033[0m into all requests, and the other IdP headers will be ignored if this header is not present")

|

||||

ap2.add_argument("--idp-gsep", metavar="RE", type=u, default="|:;+,", help="if there are multiple groups in \033[33m--idp-h-grp\033[0m, they are separated by one of the characters in \033[33mRE\033[0m")

|

||||

@@ -1119,6 +1124,8 @@ def add_zc_mdns(ap):

|

||||

ap2.add_argument("--zm6", action="store_true", help="IPv6 only")

|

||||

ap2.add_argument("--zmv", action="store_true", help="verbose mdns")

|

||||

ap2.add_argument("--zmvv", action="store_true", help="verboser mdns")

|

||||

ap2.add_argument("--zm-no-pe", action="store_true", help="mute parser errors (invalid incoming MDNS packets)")

|

||||

ap2.add_argument("--zm-nwa-1", action="store_true", help="disable workaround for avahi-bug #379 (corruption in Avahi's mDNS reflection feature)")

|

||||

ap2.add_argument("--zms", metavar="dhf", type=u, default="", help="list of services to announce -- d=webdav h=http f=ftp s=smb -- lowercase=plaintext uppercase=TLS -- default: all enabled services except http/https (\033[32mDdfs\033[0m if \033[33m--ftp\033[0m and \033[33m--smb\033[0m is set, \033[32mDd\033[0m otherwise)")

|

||||

ap2.add_argument("--zm-ld", metavar="PATH", type=u, default="", help="link a specific folder for webdav shares")

|

||||

ap2.add_argument("--zm-lh", metavar="PATH", type=u, default="", help="link a specific folder for http shares")

|

||||

@@ -1201,6 +1208,8 @@ def add_hooks(ap):

|

||||

ap2.add_argument("--xbu", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m before a file upload starts")

|

||||

ap2.add_argument("--xau", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m after a file upload finishes")

|

||||

ap2.add_argument("--xiu", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m after all uploads finish and volume is idle")

|

||||

ap2.add_argument("--xbc", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m before a file copy")

|

||||

ap2.add_argument("--xac", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m after a file copy")

|

||||

ap2.add_argument("--xbr", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m before a file move/rename")

|

||||

ap2.add_argument("--xar", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m after a file move/rename")

|

||||

ap2.add_argument("--xbd", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m before a file delete")

|

||||

@@ -1233,6 +1242,7 @@ def add_optouts(ap):

|

||||

ap2.add_argument("--no-dav", action="store_true", help="disable webdav support")

|

||||

ap2.add_argument("--no-del", action="store_true", help="disable delete operations")

|

||||

ap2.add_argument("--no-mv", action="store_true", help="disable move/rename operations")

|

||||

ap2.add_argument("--no-cp", action="store_true", help="disable copy operations")

|

||||

ap2.add_argument("-nth", action="store_true", help="no title hostname; don't show \033[33m--name\033[0m in <title>")

|

||||

ap2.add_argument("-nih", action="store_true", help="no info hostname -- don't show in UI")

|

||||

ap2.add_argument("-nid", action="store_true", help="no info disk-usage -- don't show in UI")

|

||||

@@ -1307,7 +1317,8 @@ def add_logging(ap):
     ap2.add_argument("--log-conn", action="store_true", help="debug: print tcp-server msgs")
     ap2.add_argument("--log-htp", action="store_true", help="debug: print http-server threadpool scaling")
     ap2.add_argument("--ihead", metavar="HEADER", type=u, action='append', help="print request \033[33mHEADER\033[0m; [\033[32m*\033[0m]=all")
-    ap2.add_argument("--lf-url", metavar="RE", type=u, default=r"^/\.cpr/|\?th=[wj]$|/\.(_|ql_|DS_Store$|localized$)", help="dont log URLs matching regex \033[33mRE\033[0m")
+    ap2.add_argument("--ohead", metavar="HEADER", type=u, action='append', help="print response \033[33mHEADER\033[0m; [\033[32m*\033[0m]=all")
+    ap2.add_argument("--lf-url", metavar="RE", type=u, default=r"^/\.cpr/|[?&]th=[wjp]|/\.(_|ql_|DS_Store$|localized$)", help="dont log URLs matching regex \033[33mRE\033[0m")
 
 
 def add_admin(ap):

|

||||
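The `--lf-url` default changes in the hunk above: the thumbnail clause goes from `\?th=[wj]$` to `[?&]th=[wjp]`, so it is no longer anchored to the end of the URL and also covers `th=p`. A minimal sketch comparing the two defaults, assuming the pattern is applied with `re.search` (the call site is not part of this diff) and using made-up request URLs:

```python
import re

# the two defaults, copied from the removed and added --lf-url lines
OLD = re.compile(r"^/\.cpr/|\?th=[wj]$|/\.(_|ql_|DS_Store$|localized$)")
NEW = re.compile(r"^/\.cpr/|[?&]th=[wjp]|/\.(_|ql_|DS_Store$|localized$)")


def is_filtered(ptn, url):
    """True if the URL would be left out of the log (assumes re.search)."""
    return ptn.search(url) is not None


# hypothetical URLs, for illustration only
SAMPLES = [
    "/pics/a.jpg?th=w",
    "/pics/a.jpg?th=p",
    "/pics/a.jpg?cache=i&th=j",
    "/music/song.mp3",
]

for url in SAMPLES:
    print(url, "old:", is_filtered(OLD, url), "new:", is_filtered(NEW, url))
```

Under that assumption, only the new default filters thumbnail requests where `th=` is not the first or last URL parameter.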

@@ -1315,9 +1326,12 @@ def add_admin(ap):

|

||||

ap2.add_argument("--no-reload", action="store_true", help="disable ?reload=cfg (reload users/volumes/volflags from config file)")

|

||||

ap2.add_argument("--no-rescan", action="store_true", help="disable ?scan (volume reindexing)")

|

||||

ap2.add_argument("--no-stack", action="store_true", help="disable ?stack (list all stacks)")

|

||||

ap2.add_argument("--dl-list", metavar="LVL", type=int, default=2, help="who can see active downloads in the controlpanel? [\033[32m0\033[0m]=nobody, [\033[32m1\033[0m]=admins, [\033[32m2\033[0m]=everyone")

|

||||

|

||||

|

||||

def add_thumbnail(ap):

|

||||

th_ram = (RAM_AVAIL or RAM_TOTAL or 9) * 0.6

|

||||

th_ram = int(max(min(th_ram, 6), 1) * 10) / 10

|

||||

ap2 = ap.add_argument_group('thumbnail options')

|

||||

ap2.add_argument("--no-thumb", action="store_true", help="disable all thumbnails (volflag=dthumb)")

|

||||

ap2.add_argument("--no-vthumb", action="store_true", help="disable video thumbnails (volflag=dvthumb)")

|

||||

@@ -1325,7 +1339,7 @@ def add_thumbnail(ap):
     ap2.add_argument("--th-size", metavar="WxH", default="320x256", help="thumbnail res (volflag=thsize)")
     ap2.add_argument("--th-mt", metavar="CORES", type=int, default=CORES, help="num cpu cores to use for generating thumbnails")
     ap2.add_argument("--th-convt", metavar="SEC", type=float, default=60.0, help="conversion timeout in seconds (volflag=convt)")
-    ap2.add_argument("--th-ram-max", metavar="GB", type=float, default=6.0, help="max memory usage (GiB) permitted by thumbnailer; not very accurate")
+    ap2.add_argument("--th-ram-max", metavar="GB", type=float, default=th_ram, help="max memory usage (GiB) permitted by thumbnailer; not very accurate")
     ap2.add_argument("--th-crop", metavar="TXT", type=u, default="y", help="crop thumbnails to 4:3 or keep dynamic height; client can override in UI unless force. [\033[32my\033[0m]=crop, [\033[32mn\033[0m]=nocrop, [\033[32mfy\033[0m]=force-y, [\033[32mfn\033[0m]=force-n (volflag=crop)")
     ap2.add_argument("--th-x3", metavar="TXT", type=u, default="n", help="show thumbs at 3x resolution; client can override in UI unless force. [\033[32my\033[0m]=yes, [\033[32mn\033[0m]=no, [\033[32mfy\033[0m]=force-yes, [\033[32mfn\033[0m]=force-no (volflag=th3x)")
     ap2.add_argument("--th-dec", metavar="LIBS", default="vips,pil,ff", help="image decoders, in order of preference")

|

||||
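The new `--th-ram-max` default is the `th_ram` value computed at the top of `add_thumbnail`: 60% of the detected RAM, clamped to the range 1 to 6 and truncated to one decimal. A small sketch of that arithmetic, assuming `RAM_AVAIL` and `RAM_TOTAL` are expressed in GiB and are falsy when unknown (the function name and parameters are illustrative, not part of the codebase):

```python
def default_th_ram(ram_avail, ram_total):
    """Mirror of the two th_ram lines; inputs are GiB, or None/0 if unknown."""
    # prefer available RAM, then total RAM, then a fixed guess of 9
    th_ram = (ram_avail or ram_total or 9) * 0.6
    # clamp to 1..6, then truncate to one decimal place
    return int(max(min(th_ram, 6), 1) * 10) / 10


print(default_th_ram(16, 32))    # upper clamp applies
print(default_th_ram(None, 32))  # falls back to total RAM
print(default_th_ram(0.5, 8))    # lower clamp applies
```

Small machines thus get a lower default than the previous fixed 6.0, while large machines keep the old ceiling.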

@@ -1340,12 +1354,12 @@ def add_thumbnail(ap):

|

||||

# https://pillow.readthedocs.io/en/stable/handbook/image-file-formats.html

|

||||

# https://github.com/libvips/libvips

|

||||

# ffmpeg -hide_banner -demuxers | awk '/^ D /{print$2}' | while IFS= read -r x; do ffmpeg -hide_banner -h demuxer=$x; done | grep -E '^Demuxer |extensions:'

|

||||

ap2.add_argument("--th-r-pil", metavar="T,T", type=u, default="avif,avifs,blp,bmp,dcx,dds,dib,emf,eps,fits,flc,fli,fpx,gif,heic,heics,heif,heifs,icns,ico,im,j2p,j2k,jp2,jpeg,jpg,jpx,pbm,pcx,pgm,png,pnm,ppm,psd,qoi,sgi,spi,tga,tif,tiff,webp,wmf,xbm,xpm", help="image formats to decode using pillow")

|

||||

ap2.add_argument("--th-r-pil", metavar="T,T", type=u, default="avif,avifs,blp,bmp,cbz,dcx,dds,dib,emf,eps,fits,flc,fli,fpx,gif,heic,heics,heif,heifs,icns,ico,im,j2p,j2k,jp2,jpeg,jpg,jpx,pbm,pcx,pgm,png,pnm,ppm,psd,qoi,sgi,spi,tga,tif,tiff,webp,wmf,xbm,xpm", help="image formats to decode using pillow")

|

||||

ap2.add_argument("--th-r-vips", metavar="T,T", type=u, default="avif,exr,fit,fits,fts,gif,hdr,heic,jp2,jpeg,jpg,jpx,jxl,nii,pfm,pgm,png,ppm,svg,tif,tiff,webp", help="image formats to decode using pyvips")

|

||||

ap2.add_argument("--th-r-ffi", metavar="T,T", type=u, default="apng,avif,avifs,bmp,dds,dib,fit,fits,fts,gif,hdr,heic,heics,heif,heifs,icns,ico,jp2,jpeg,jpg,jpx,jxl,pbm,pcx,pfm,pgm,png,pnm,ppm,psd,qoi,sgi,tga,tif,tiff,webp,xbm,xpm", help="image formats to decode using ffmpeg")

|

||||

ap2.add_argument("--th-r-ffi", metavar="T,T", type=u, default="apng,avif,avifs,bmp,cbz,dds,dib,fit,fits,fts,gif,hdr,heic,heics,heif,heifs,icns,ico,jp2,jpeg,jpg,jpx,jxl,pbm,pcx,pfm,pgm,png,pnm,ppm,psd,qoi,sgi,tga,tif,tiff,webp,xbm,xpm", help="image formats to decode using ffmpeg")

|

||||

ap2.add_argument("--th-r-ffv", metavar="T,T", type=u, default="3gp,asf,av1,avc,avi,flv,h264,h265,hevc,m4v,mjpeg,mjpg,mkv,mov,mp4,mpeg,mpeg2,mpegts,mpg,mpg2,mts,nut,ogm,ogv,rm,ts,vob,webm,wmv", help="video formats to decode using ffmpeg")

|

||||

ap2.add_argument("--th-r-ffa", metavar="T,T", type=u, default="aac,ac3,aif,aiff,alac,alaw,amr,apac,ape,au,bonk,dfpwm,dts,flac,gsm,ilbc,it,itgz,itxz,itz,m4a,mdgz,mdxz,mdz,mo3,mod,mp2,mp3,mpc,mptm,mt2,mulaw,ogg,okt,opus,ra,s3m,s3gz,s3xz,s3z,tak,tta,ulaw,wav,wma,wv,xm,xmgz,xmxz,xmz,xpk", help="audio formats to decode using ffmpeg")

|

||||

ap2.add_argument("--au-unpk", metavar="E=F.C", type=u, default="mdz=mod.zip, mdgz=mod.gz, mdxz=mod.xz, s3z=s3m.zip, s3gz=s3m.gz, s3xz=s3m.xz, xmz=xm.zip, xmgz=xm.gz, xmxz=xm.xz, itz=it.zip, itgz=it.gz, itxz=it.xz", help="audio formats to decompress before passing to ffmpeg")

|

||||

ap2.add_argument("--au-unpk", metavar="E=F.C", type=u, default="mdz=mod.zip, mdgz=mod.gz, mdxz=mod.xz, s3z=s3m.zip, s3gz=s3m.gz, s3xz=s3m.xz, xmz=xm.zip, xmgz=xm.gz, xmxz=xm.xz, itz=it.zip, itgz=it.gz, itxz=it.xz, cbz=jpg.cbz", help="audio/image formats to decompress before passing to ffmpeg")

|

||||

|

||||

|

||||

def add_transcoding(ap):

|

||||

@@ -1357,6 +1371,14 @@ def add_transcoding(ap):

|

||||

ap2.add_argument("--ac-maxage", metavar="SEC", type=int, default=86400, help="delete cached transcode output after \033[33mSEC\033[0m seconds")

|

||||

|

||||

|

||||

def add_rss(ap):

|

||||

ap2 = ap.add_argument_group('RSS options')

|

||||

ap2.add_argument("--rss", action="store_true", help="enable RSS output (experimental)")

|

||||

ap2.add_argument("--rss-nf", metavar="HITS", type=int, default=250, help="default number of files to return (url-param 'nf')")

|

||||

ap2.add_argument("--rss-fext", metavar="E,E", type=u, default="", help="default list of file extensions to include (url-param 'fext'); blank=all")

|

||||

ap2.add_argument("--rss-sort", metavar="ORD", type=u, default="m", help="default sort order (url-param 'sort'); [\033[32mm\033[0m]=last-modified [\033[32mu\033[0m]=upload-time [\033[32mn\033[0m]=filename [\033[32ms\033[0m]=filesize; Uppercase=oldest-first. Note that upload-time is 0 for non-uploaded files")

|

||||

|

||||

|

||||

def add_db_general(ap, hcores):

|

||||

noidx = APPLESAN_TXT if MACOS else ""

|

||||

ap2 = ap.add_argument_group('general db options')

|

||||

@@ -1381,6 +1403,7 @@ def add_db_general(ap, hcores):

|

||||

ap2.add_argument("--db-act", metavar="SEC", type=float, default=10.0, help="defer any scheduled volume reindexing until \033[33mSEC\033[0m seconds after last db write (uploads, renames, ...)")

|

||||

ap2.add_argument("--srch-time", metavar="SEC", type=int, default=45, help="search deadline -- terminate searches running for more than \033[33mSEC\033[0m seconds")

|

||||

ap2.add_argument("--srch-hits", metavar="N", type=int, default=7999, help="max search results to allow clients to fetch; 125 results will be shown initially")

|

||||

ap2.add_argument("--srch-excl", metavar="PTN", type=u, default="", help="regex: exclude files from search results if the file-URL matches \033[33mPTN\033[0m (case-sensitive). Example: [\033[32mpassword|logs/[0-9]\033[0m] any URL containing 'password' or 'logs/DIGIT' (volflag=srch_excl)")

|

||||

ap2.add_argument("--dotsrch", action="store_true", help="show dotfiles in search results (volflags: dotsrch | nodotsrch)")

|

||||

|

||||

|

||||

@@ -1437,6 +1460,8 @@ def add_ui(ap, retry):

|

||||

ap2.add_argument("--themes", metavar="NUM", type=int, default=8, help="number of themes installed")

|

||||

ap2.add_argument("--au-vol", metavar="0-100", type=int, default=50, choices=range(0, 101), help="default audio/video volume percent")

|

||||

ap2.add_argument("--sort", metavar="C,C,C", type=u, default="href", help="default sort order, comma-separated column IDs (see header tooltips), prefix with '-' for descending. Examples: \033[32mhref -href ext sz ts tags/Album tags/.tn\033[0m (volflag=sort)")

|

||||

ap2.add_argument("--nsort", action="store_true", help="default-enable natural sort of filenames with leading numbers (volflag=nsort)")

|

||||

ap2.add_argument("--hsortn", metavar="N", type=int, default=2, help="number of sorting rules to include in media URLs by default (volflag=hsortn)")

|

||||

ap2.add_argument("--unlist", metavar="REGEX", type=u, default="", help="don't show files matching \033[33mREGEX\033[0m in file list. Purely cosmetic! Does not affect API calls, just the browser. Example: [\033[32m\\.(js|css)$\033[0m] (volflag=unlist)")

|

||||

ap2.add_argument("--favico", metavar="TXT", type=u, default="c 000 none" if retry else "🎉 000 none", help="\033[33mfavicon-text\033[0m [ \033[33mforeground\033[0m [ \033[33mbackground\033[0m ] ], set blank to disable")

|

||||

ap2.add_argument("--mpmc", metavar="URL", type=u, default="", help="change the mediaplayer-toggle mouse cursor; URL to a folder with {2..5}.png inside (or disable with [\033[32m.\033[0m])")

|

||||

@@ -1452,6 +1477,7 @@ def add_ui(ap, retry):

|

||||

ap2.add_argument("--pb-url", metavar="URL", type=u, default="https://github.com/9001/copyparty", help="powered-by link; disable with \033[33m-np\033[0m")

|

||||

ap2.add_argument("--ver", action="store_true", help="show version on the control panel (incompatible with \033[33m-nb\033[0m)")

|

||||

ap2.add_argument("--k304", metavar="NUM", type=int, default=0, help="configure the option to enable/disable k304 on the controlpanel (workaround for buggy reverse-proxies); [\033[32m0\033[0m] = hidden and default-off, [\033[32m1\033[0m] = visible and default-off, [\033[32m2\033[0m] = visible and default-on")

|

||||

ap2.add_argument("--no304", metavar="NUM", type=int, default=0, help="configure the option to enable/disable no304 on the controlpanel (workaround for buggy caching in browsers); [\033[32m0\033[0m] = hidden and default-off, [\033[32m1\033[0m] = visible and default-off, [\033[32m2\033[0m] = visible and default-on")

|

||||

ap2.add_argument("--md-sbf", metavar="FLAGS", type=u, default="downloads forms popups scripts top-navigation-by-user-activation", help="list of capabilities to ALLOW for README.md docs (volflag=md_sbf); see https://developer.mozilla.org/en-US/docs/Web/HTML/Element/iframe#attr-sandbox")

|

||||

ap2.add_argument("--lg-sbf", metavar="FLAGS", type=u, default="downloads forms popups scripts top-navigation-by-user-activation", help="list of capabilities to ALLOW for prologue/epilogue docs (volflag=lg_sbf)")

|

||||

ap2.add_argument("--no-sb-md", action="store_true", help="don't sandbox README/PREADME.md documents (volflags: no_sb_md | sb_md)")

|

||||

@@ -1526,6 +1552,7 @@ def run_argparse(

|

||||

add_db_metadata(ap)

|

||||

add_thumbnail(ap)

|

||||

add_transcoding(ap)

|

||||

add_rss(ap)

|

||||

add_ftp(ap)

|

||||

add_webdav(ap)

|

||||

add_tftp(ap)

|

||||

@@ -1710,7 +1737,7 @@ def main(argv: Optional[list[str]] = None) -> None:

|

||||

except:

|

||||

lprint("\nfailed to disable quick-edit-mode:\n" + min_ex() + "\n")

|

||||

|

||||

if al.ansi:

|

||||

if not al.ansi:

|

||||

al.wintitle = ""

|

||||

|

||||

# propagate implications

|

||||

@@ -1748,6 +1775,9 @@ def main(argv: Optional[list[str]] = None) -> None:

|

||||

if al.ihead:

|

||||

al.ihead = [x.lower() for x in al.ihead]

|

||||

|

||||

if al.ohead:

|

||||

al.ohead = [x.lower() for x in al.ohead]

|

||||

|

||||

if HAVE_SSL:

|

||||

if al.ssl_ver:

|

||||

configure_ssl_ver(al)

|

||||

|

||||

@@ -1,8 +1,8 @@
 # coding: utf-8
 
-VERSION = (1, 15, 8)
-CODENAME = "fill the drives"
-BUILD_DT = (2024, 10, 16)
+VERSION = (1, 16, 5)
+CODENAME = "COPYparty"
+BUILD_DT = (2024, 12, 11)
 
 S_VERSION = ".".join(map(str, VERSION))
 S_BUILD_DT = "{0:04d}-{1:02d}-{2:02d}".format(*BUILD_DT)

|

||||

|

||||
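For reference, the two derived strings can be checked by evaluating the same expressions with the new tuple values from the hunk above:

```python
VERSION = (1, 16, 5)
BUILD_DT = (2024, 12, 11)

# join the version tuple with dots; zero-pad the date fields
S_VERSION = ".".join(map(str, VERSION))
S_BUILD_DT = "{0:04d}-{1:02d}-{2:02d}".format(*BUILD_DT)

print(S_VERSION)   # 1.16.5
print(S_BUILD_DT)  # 2024-12-11
```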

@@ -165,8 +165,11 @@ class Lim(object):
         self.chk_rem(rem)
         if sz != -1:
             self.chk_sz(sz)
-            self.chk_vsz(broker, ptop, sz, volgetter)
-            self.chk_df(abspath, sz) # side effects; keep last-ish
+        else:
+            sz = 0
+
+        self.chk_vsz(broker, ptop, sz, volgetter)
+        self.chk_df(abspath, sz) # side effects; keep last-ish
 
         ap2, vp2 = self.rot(abspath)
         if abspath == ap2:

|

||||

@@ -206,7 +209,15 @@ class Lim(object):
 
         if self.dft < time.time():
             self.dft = int(time.time()) + 300
-            self.dfv = get_df(abspath)[0] or 0
+
+            df, du, err = get_df(abspath, True)
+            if err:
+                t = "failed to read disk space usage for [%s]: %s"
+                self.log(t % (abspath, err), 3)
+                self.dfv = 0xAAAAAAAAA # 42.6 GiB
+            else:
+                self.dfv = df or 0
+
             for j in list(self.reg.values()) if self.reg else []:
                 self.dfv -= int(j["size"] / (len(j["hash"]) or 999) * len(j["need"]))

|

||||

|

||||
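The hunk above changes how the cached free-space value is seeded: if reading disk usage fails, a fixed fallback of `0xAAAAAAAAA` bytes is used instead of 0, and space for unfinished uploads is then subtracted. A minimal standalone sketch of that estimate, assuming each job is a dict with `size`, `hash` and `need` keys as in the diff (the function name and sample data are hypothetical):

```python
FALLBACK_FREE = 0xAAAAAAAAA  # 45812984490 bytes, roughly 42.67 GiB


def estimate_free(df, err, jobs):
    """Free bytes left after reserving space for unfinished uploads."""
    free = FALLBACK_FREE if err else (df or 0)
    for j in jobs:
        # size / chunk-count approximates the bytes per chunk;
        # "or 999" avoids dividing by zero when the hash list is empty
        free -= int(j["size"] / (len(j["hash"]) or 999) * len(j["need"]))
    return free


# one hypothetical upload: 10 chunks in total, 4 still missing
jobs = [{"size": 1000, "hash": ["h"] * 10, "need": ["h"] * 4}]
print(estimate_free(10000, None, jobs))
print(estimate_free(None, "statvfs failed", []))
```

With the fallback, a failed disk-usage probe no longer makes the volume look completely full.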

@@ -356,18 +367,21 @@ class VFS(object):

|

||||

self.ahtml: dict[str, list[str]] = {}

|

||||

self.aadmin: dict[str, list[str]] = {}

|

||||

self.adot: dict[str, list[str]] = {}

|

||||

self.all_vols: dict[str, VFS] = {}

|

||||

self.js_ls = {}

|

||||

self.js_htm = ""

|

||||

|

||||

if realpath:

|

||||

rp = realpath + ("" if realpath.endswith(os.sep) else os.sep)

|

||||

vp = vpath + ("/" if vpath else "")

|

||||

self.histpath = os.path.join(realpath, ".hist") # db / thumbcache

|

||||

self.all_vols = {vpath: self} # flattened recursive

|

||||

self.all_nodes = {vpath: self} # also jumpvols

|

||||

self.all_aps = [(rp, self)]

|

||||

self.all_vps = [(vp, self)]

|

||||

else:

|

||||

self.histpath = ""

|

||||

self.all_vols = {}

|

||||

self.all_nodes = {}

|

||||

self.all_aps = []

|

||||

self.all_vps = []

|

||||

|

||||

@@ -385,9 +399,11 @@ class VFS(object):
     def get_all_vols(
         self,
         vols: dict[str, "VFS"],
+        nodes: dict[str, "VFS"],
         aps: list[tuple[str, "VFS"]],
         vps: list[tuple[str, "VFS"]],
     ) -> None:
+        nodes[self.vpath] = self
         if self.realpath:
             vols[self.vpath] = self
             rp = self.realpath

|

||||

@@ -397,7 +413,7 @@ class VFS(object):
             vps.append((vp, self))
 
         for v in self.nodes.values():
-            v.get_all_vols(vols, aps, vps)
+            v.get_all_vols(vols, nodes, aps, vps)
 
     def add(self, src: str, dst: str) -> "VFS":
         """get existing, or add new path to the vfs"""

|

||||

@@ -541,15 +557,14 @@ class VFS(object):
             return self._get_dbv(vrem)
 
         shv, srem = src
-        return shv, vjoin(srem, vrem)
+        return shv._get_dbv(vjoin(srem, vrem))
 
     def _get_dbv(self, vrem: str) -> tuple["VFS", str]:
         dbv = self.dbv
         if not dbv:
             return self, vrem
 
-        tv = [self.vpath[len(dbv.vpath) :].lstrip("/"), vrem]
-        vrem = "/".join([x for x in tv if x])
+        vrem = vjoin(self.vpath[len(dbv.vpath) :].lstrip("/"), vrem)
         return dbv, vrem
 
     def canonical(self, rem: str, resolve: bool = True) -> str:

|

||||
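In `_get_dbv`, the hunk above replaces a filter-and-join of two path parts with a single `vjoin` call. The sketch below shows why the two forms give the same result. `vjoin_sketch` is a stand-in written for this example, on the assumption that `vjoin` joins two vpath parts with `/` and skips empty ones; the real helper is not shown in this diff, and `rebase` is an illustrative name.

```python
def vjoin_sketch(rd, fn):
    """Stand-in for vjoin: join two vpath parts, skipping blank ones."""
    if rd and fn:
        return rd + "/" + fn
    return rd or fn


def old_join(rd, fn):
    """The removed lines: drop empty parts, then join with '/'."""
    return "/".join([x for x in (rd, fn) if x])


def rebase(vol_vpath, dbv_vpath, vrem):
    """Express vrem (relative to vol_vpath) relative to dbv_vpath instead."""
    return vjoin_sketch(vol_vpath[len(dbv_vpath):].lstrip("/"), vrem)


print(rebase("a/b/c", "a", "x.txt"))
print(rebase("a", "a", "x.txt"))
print(rebase("a/b", "a", ""))
```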

@@ -581,10 +596,11 @@ class VFS(object):
         scandir: bool,
         permsets: list[list[bool]],
         lstat: bool = False,
+        throw: bool = False,
     ) -> tuple[str, list[tuple[str, os.stat_result]], dict[str, "VFS"]]:
         """replaces _ls for certain shares (single-file, or file selection)"""
         vn, rem = self.shr_src # type: ignore
-        abspath, real, _ = vn.ls(rem, "\n", scandir, permsets, lstat)
+        abspath, real, _ = vn.ls(rem, "\n", scandir, permsets, lstat, throw)
         real = [x for x in real if os.path.basename(x[0]) in self.shr_files]
         return abspath, real, {}

|

||||

|

||||

@@ -595,11 +611,12 @@ class VFS(object):
         scandir: bool,
         permsets: list[list[bool]],
         lstat: bool = False,
+        throw: bool = False,
     ) -> tuple[str, list[tuple[str, os.stat_result]], dict[str, "VFS"]]:
         """return user-readable [fsdir,real,virt] items at vpath"""
         virt_vis = {} # nodes readable by user
         abspath = self.canonical(rem)
-        real = list(statdir(self.log, scandir, lstat, abspath))
+        real = list(statdir(self.log, scandir, lstat, abspath, throw))
         real.sort()
         if not rem:
             # no vfs nodes in the list of real inodes

|

||||

@@ -661,6 +678,10 @@ class VFS(object):

|

||||

"""

|

||||

recursively yields from ./rem;

|

||||

rel is a unix-style user-defined vpath (not vfs-related)

|

||||

|

||||

NOTE: don't invoke this function from a dbv; subvols are only

|

||||

descended into if rem is blank due to the _ls `if not rem:`

|

||||

which intention is to prevent unintended access to subvols

|

||||

"""

|

||||

|

||||

fsroot, vfs_ls, vfs_virt = self.ls(rem, uname, scandir, permsets, lstat=lstat)

|

||||

@@ -901,7 +922,7 @@ class AuthSrv(object):

|

||||

self._reload()

|

||||

return True

|

||||

|

||||

broker.ask("_reload_blocking", False).get()

|

||||

broker.ask("reload", False, True).get()

|

||||

return True

|

||||

|

||||

def _map_volume_idp(

|

||||

@@ -1371,7 +1392,7 @@ class AuthSrv(object):

|

||||

flags[name] = True

|

||||

return

|

||||

|

||||

zs = "mtp on403 on404 xbu xau xiu xbr xar xbd xad xm xban"

|

||||

zs = "mtp on403 on404 xbu xau xiu xbc xac xbr xar xbd xad xm xban"

|

||||

if name not in zs.split():

|

||||

if value is True:

|

||||

t = "└─add volflag [{}] = {} ({})"

|

||||

@@ -1519,10 +1540,11 @@ class AuthSrv(object):

|

||||

|

||||

assert vfs # type: ignore

|

||||

vfs.all_vols = {}

|

||||

vfs.all_nodes = {}

|

||||

vfs.all_aps = []

|

||||

vfs.all_vps = []

|

||||

vfs.get_all_vols(vfs.all_vols, vfs.all_aps, vfs.all_vps)

|

||||

for vol in vfs.all_vols.values():

|

||||

vfs.get_all_vols(vfs.all_vols, vfs.all_nodes, vfs.all_aps, vfs.all_vps)

|

||||

for vol in vfs.all_nodes.values():

|

||||

vol.all_aps.sort(key=lambda x: len(x[0]), reverse=True)

|

||||

vol.all_vps.sort(key=lambda x: len(x[0]), reverse=True)

|

||||

vol.root = vfs

|

||||

@@ -1573,7 +1595,7 @@ class AuthSrv(object):

|

||||

|

||||

vfs.nodes[shr] = vfs.all_vols[shr] = shv

|

||||

for vol in shv.nodes.values():

|

||||

vfs.all_vols[vol.vpath] = vol

|

||||

vfs.all_vols[vol.vpath] = vfs.all_nodes[vol.vpath] = vol

|

||||

vol.get_dbv = vol._get_share_src

|

||||

vol.ls = vol._ls_nope

|

||||

|

||||

@@ -1716,7 +1738,19 @@ class AuthSrv(object):

|

||||

|

||||

self.log("\n\n".join(ta) + "\n", c=3)

|

||||

|

||||

vfs.histtab = {zv.realpath: zv.histpath for zv in vfs.all_vols.values()}

|

||||

rhisttab = {}

|

||||

vfs.histtab = {}

|

||||

for zv in vfs.all_vols.values():

|

||||

histp = zv.histpath

|

||||

is_shr = shr and zv.vpath.split("/")[0] == shr

|

||||

if histp and not is_shr and histp in rhisttab:

|

||||

zv2 = rhisttab[histp]

|

||||

t = "invalid config; multiple volumes share the same histpath (database location):\n histpath: %s\n volume 1: /%s [%s]\n volume 2: %s [%s]"

|

||||

t = t % (histp, zv2.vpath, zv2.realpath, zv.vpath, zv.realpath)

|

||||

self.log(t, 1)

|

||||

raise Exception(t)

|

||||

rhisttab[histp] = zv

|

||||

vfs.histtab[zv.realpath] = histp

|

||||

|

||||

for vol in vfs.all_vols.values():

|

||||

lim = Lim(self.log_func)

|

||||
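The hunk above replaces a one-line dict comprehension with a loop that also rejects configurations where two volumes point at the same histpath. A standalone sketch of that reverse-lookup check, assuming volumes are given as plain `(vpath, realpath, histpath)` tuples; the tuple shape, function name and `ValueError` are choices made for this example, while the diff itself works on VFS objects and raises `Exception`:

```python
def build_histtab(vols, shr=""):
    """Return {realpath: histpath}; refuse duplicate histpaths outside shares."""
    rhisttab = {}  # histpath -> first volume that claimed it
    histtab = {}   # realpath -> histpath
    for vpath, realpath, histp in vols:
        # volumes below the shares root are exempt from the check
        is_shr = bool(shr) and vpath.split("/")[0] == shr
        if histp and not is_shr and histp in rhisttab:
            v2, r2 = rhisttab[histp]
            t = "histpath %s is shared by /%s [%s] and /%s [%s]"
            raise ValueError(t % (histp, v2, r2, vpath, realpath))
        rhisttab[histp] = (vpath, realpath)
        histtab[realpath] = histp
    return histtab


ok = [("a", "/srv/a", "/srv/a/.hist"), ("b", "/srv/b", "/srv/b/.hist")]
print(build_histtab(ok))
```

Keeping a second dict keyed by histpath makes the duplicate test a constant-time lookup per volume.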

@@ -1775,12 +1809,12 @@ class AuthSrv(object):

|

||||

vol.lim = lim

|

||||

|

||||

if self.args.no_robots:

|

||||

for vol in vfs.all_vols.values():

|

||||

for vol in vfs.all_nodes.values():

|

||||

# volflag "robots" overrides global "norobots", allowing indexing by search engines for this vol

|

||||

if not vol.flags.get("robots"):

|

||||

vol.flags["norobots"] = True

|

||||

|

||||

for vol in vfs.all_vols.values():

|

||||

for vol in vfs.all_nodes.values():

|

||||

if self.args.no_vthumb:

|

||||

vol.flags["dvthumb"] = True

|

||||

if self.args.no_athumb:

|

||||

@@ -1792,7 +1826,7 @@ class AuthSrv(object):

|

||||

vol.flags["dithumb"] = True

|

||||

|

||||

have_fk = False

|

||||

for vol in vfs.all_vols.values():

|

||||

for vol in vfs.all_nodes.values():

|

||||

fk = vol.flags.get("fk")

|

||||

fka = vol.flags.get("fka")

|

||||

if fka and not fk:

|

||||

@@ -1824,7 +1858,7 @@ class AuthSrv(object):

|

||||

zs = os.path.join(E.cfg, "fk-salt.txt")

|

||||

self.log(t % (fk_len, 16, zs), 3)

|

||||

|

||||

for vol in vfs.all_vols.values():

|

||||

for vol in vfs.all_nodes.values():

|

||||

if "pk" in vol.flags and "gz" not in vol.flags and "xz" not in vol.flags:

|

||||

vol.flags["gz"] = False # def.pk

|

||||

|

||||

@@ -1835,7 +1869,7 @@ class AuthSrv(object):

|

||||

|

||||

all_mte = {}

|

||||

errors = False

|

||||

for vol in vfs.all_vols.values():

|

||||

for vol in vfs.all_nodes.values():

|

||||

if (self.args.e2ds and vol.axs.uwrite) or self.args.e2dsa:

|

||||

vol.flags["e2ds"] = True

|

||||

|

||||

@@ -1846,6 +1880,7 @@ class AuthSrv(object):

|

||||

["no_hash", "nohash"],

|

||||

["no_idx", "noidx"],

|

||||

["og_ua", "og_ua"],

|

||||

["srch_excl", "srch_excl"],

|

||||

]:

|

||||

if vf in vol.flags:

|

||||

ptn = re.compile(vol.flags.pop(vf))

|

||||

@@ -1926,7 +1961,7 @@ class AuthSrv(object):

|

||||

vol.flags[k] = odfusion(getattr(self.args, k), vol.flags[k])

|

||||

|

||||

# append additive args from argv to volflags

|

||||

hooks = "xbu xau xiu xbr xar xbd xad xm xban".split()

|

||||

hooks = "xbu xau xiu xbc xac xbr xar xbd xad xm xban".split()

|

||||

for name in "mtp on404 on403".split() + hooks:

|

||||

self._read_volflag(vol.flags, name, getattr(self.args, name), True)

|

||||

|

||||

@@ -2052,8 +2087,24 @@ class AuthSrv(object):

|

||||

self.log(t.format(mtp), 1)

|

||||

errors = True

|

||||

|

||||

have_daw = False

|

||||

for vol in vfs.all_vols.values():

|

||||

re1: Optional[re.Pattern] = vol.flags.get("srch_excl")

|

||||

excl = [re1.pattern] if re1 else []

|

||||

|

||||

vpaths = []

|

||||

vtop = vol.vpath

|

||||

for vp2 in vfs.all_vols.keys():

|

||||

if vp2.startswith((vtop + "/").lstrip("/")) and vtop != vp2:

|

||||

vpaths.append(re.escape(vp2[len(vtop) :].lstrip("/")))

|

||||

if vpaths:

|

||||

excl.append("^(%s)/" % ("|".join(vpaths),))

|

||||

|

||||

vol.flags["srch_re_dots"] = re.compile("|".join(excl or ["^$"]))

|

||||

excl.extend([r"^\.", r"/\."])

|

||||

vol.flags["srch_re_nodot"] = re.compile("|".join(excl))

|

||||

|

||||

have_daw = False

|

||||

for vol in vfs.all_nodes.values():

|

||||

daw = vol.flags.get("daw") or self.args.daw

|

||||

if daw:

|

||||

vol.flags["daw"] = True

|

||||
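The new loop in the hunk above precomputes two search filters per volume: `srch_re_dots` combines the optional `srch_excl` pattern with the paths of nested volumes, and `srch_re_nodot` additionally excludes dotfiles. A minimal sketch of that construction, assuming the regexes are later applied with `re.search` to paths relative to the volume root (the call site is not in this diff; the function name and sample vpaths are hypothetical):

```python
import re


def build_search_filters(vtop, all_vpaths, user_ptn=""):
    """Return (with_dots, nodot) exclusion regexes for the volume at vtop."""
    excl = [user_ptn] if user_ptn else []
    prefix = (vtop + "/").lstrip("/")
    # volumes mounted below vtop are excluded from its search results
    nested = [
        re.escape(vp[len(vtop):].lstrip("/"))
        for vp in all_vpaths
        if vp.startswith(prefix) and vp != vtop
    ]
    if nested:
        excl.append("^(%s)/" % ("|".join(nested),))
    # "^$" only matches an empty path, so nothing real is excluded
    with_dots = re.compile("|".join(excl or ["^$"]))
    nodot = re.compile("|".join(excl + [r"^\.", r"/\."]))
    return with_dots, nodot


vpaths = ["", "music", "music/live", "docs"]
dots, nodot = build_search_filters("music", vpaths)
print(dots.pattern)
print(nodot.pattern)
```

Compiling both variants once at config load avoids rebuilding the pattern for every search.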

@@ -2068,13 +2119,12 @@ class AuthSrv(object):

|

||||

self.log("--smb can only be used when --ah-alg is none", 1)

|

||||

errors = True

|

||||

|

||||

for vol in vfs.all_vols.values():

|

||||

for vol in vfs.all_nodes.values():

|

||||

for k in list(vol.flags.keys()):

|

||||

if re.match("^-[^-]+$", k):

|

||||

vol.flags.pop(k[1:], None)

|

||||

vol.flags.pop(k)

|

||||

|

||||

for vol in vfs.all_vols.values():

|

||||

if vol.flags.get("dots"):

|

||||

for name in vol.axs.uread:

|

||||

vol.axs.udot.add(name)

|

||||

@@ -2216,6 +2266,11 @@ class AuthSrv(object):

|

||||

for x, y in vfs.all_vols.items()

|

||||

if x != shr and not x.startswith(shrs)

|

||||

}

|

||||

vfs.all_nodes = {

|

||||

x: y

|

||||

for x, y in vfs.all_nodes.items()

|

||||

if x != shr and not x.startswith(shrs)

|

||||

}

|

||||

|

||||

assert db and cur and cur2 and shv # type: ignore

|

||||

for row in cur.execute("select * from sh"):

|

||||

@@ -2268,6 +2323,71 @@ class AuthSrv(object):

|

||||

cur.close()

|

||||

db.close()

|

||||

|

||||

self.js_ls = {}

|

||||

self.js_htm = {}

|

||||

for vn in self.vfs.all_nodes.values():

|

||||

vf = vn.flags

|

||||

vn.js_ls = {

|

||||

"idx": "e2d" in vf,

|

||||

"itag": "e2t" in vf,

|

||||

"dnsort": "nsort" in vf,

|

||||

"dhsortn": vf["hsortn"],

|

||||

"dsort": vf["sort"],

|

||||

"dcrop": vf["crop"],

|

||||

"dth3x": vf["th3x"],

|

||||

"u2ts": vf["u2ts"],

|

||||

"frand": bool(vf.get("rand")),

|

||||

"lifetime": vf.get("lifetime") or 0,

|

||||

"unlist": vf.get("unlist") or "",

|

||||

}

|

||||

js_htm = {

|

||||

"s_name": self.args.bname,

|

||||

"have_up2k_idx": "e2d" in vf,

|

||||

"have_acode": not self.args.no_acode,

|

||||

"have_shr": self.args.shr,

|

||||

"have_zip": not self.args.no_zip,

|

||||

"have_mv": not self.args.no_mv,

|

||||

"have_del": not self.args.no_del,

|

||||

"have_unpost": int(self.args.unpost),

|

||||

"have_emp": self.args.emp,

|

||||

"sb_md": "" if "no_sb_md" in vf else (vf.get("md_sbf") or "y"),

|

||||

"txt_ext": self.args.textfiles.replace(",", " "),

|

||||

"def_hcols": list(vf.get("mth") or []),

|

||||

"unlist0": vf.get("unlist") or "",

|

||||

"dgrid": "grid" in vf,

|

||||

"dgsel": "gsel" in vf,

|

||||

"dnsort": "nsort" in vf,

|

||||

"dhsortn": vf["hsortn"],

|

||||

"dsort": vf["sort"],

|

||||

"dcrop": vf["crop"],

|

||||

"dth3x": vf["th3x"],

|

||||

"dvol": self.args.au_vol,

|

||||

"idxh": int(self.args.ih),

|

||||

"themes": self.args.themes,

|

||||

"turbolvl": self.args.turbo,

|

||||

"u2j": self.args.u2j,

|

||||

"u2sz": self.args.u2sz,

|

||||

"u2ts": vf["u2ts"],

|

||||

"frand": bool(vf.get("rand")),

|

||||

"lifetime": vn.js_ls["lifetime"],

|

||||

"u2sort": self.args.u2sort,

|

||||

}

|

||||

vn.js_htm = json.dumps(js_htm)

|

||||

|

||||

vols = list(vfs.all_nodes.values())

|

||||

if enshare:

|

||||

assert shv # type: ignore # !rm

|

||||

vols.append(shv)

|

||||

vols.extend(list(shv.nodes.values()))

|

||||

|

||||

for vol in vols:

|

||||

dbv = vol.get_dbv("")[0]

|

||||

vol.js_ls = vol.js_ls or dbv.js_ls or {}

|

||||

vol.js_htm = vol.js_htm or dbv.js_htm or "{}"

|

||||

|

||||

zs = str(vol.flags.get("tcolor") or self.args.tcolor)

|

||||

vol.flags["tcolor"] = zs.lstrip("#")

|

||||

|

||||

def load_sessions(self, quiet=False) -> None:

|

||||

# mutex me

|

||||

if self.args.no_ses:

|

||||

@@ -2377,7 +2497,7 @@ class AuthSrv(object):

|

||||

self._reload()

|

||||

return True, "new password OK"

|

||||

|

||||

broker.ask("_reload_blocking", False, False).get()

|

||||

broker.ask("reload", False, False).get()

|

||||

return True, "new password OK"

|

||||

|

||||

def setup_chpw(self, acct: dict[str, str]) -> None:

|

||||

@@ -2629,7 +2749,7 @@ class AuthSrv(object):

|

||||

]

|

||||

|

||||

csv = set("i p th_covers zm_on zm_off zs_on zs_off".split())

|

||||

zs = "c ihead mtm mtp on403 on404 xad xar xau xiu xban xbd xbr xbu xm"

|

||||

zs = "c ihead ohead mtm mtp on403 on404 xac xad xar xau xiu xban xbc xbd xbr xbu xm"

|

||||

lst = set(zs.split())

|

||||

askip = set("a v c vc cgen exp_lg exp_md theme".split())

|

||||

fskip = set("exp_lg exp_md mv_re_r mv_re_t rm_re_r rm_re_t".split())

|

||||

|

||||

@@ -43,6 +43,9 @@ class BrokerMp(object):

|

||||

self.procs = []

|

||||

self.mutex = threading.Lock()

|

||||

|

||||

self.retpend: dict[int, Any] = {}

|

||||