Compare commits

416 Commits

| Author | SHA1 | Date |
|---|---|---|
| | acd32abac5 | |
| | 2b47c96cf2 | |
| | 1027378bda | |
| | e979d30659 | |
| | 574db704cc | |
| | fdb969ea89 | |
| | 08977854b3 | |
| | cecac64b68 | |
| | 7dabdade2a | |
| | e788f098e2 | |
| | 69406d4344 | |
| | d16dd26c65 | |
| | 12219c1bea | |
| | 118bdcc26e | |
| | 78fa96f0f4 | |
| | c7deb63a04 | |
| | 4f811eb9e9 | |
| | 0b265bd673 | |
| | ee67fabbeb | |
| | b213de7e62 | |
| | 7c01505750 | |
| | ae28dfd020 | |
| | 2a5a4e785f | |
| | d8bddede6a | |
| | b8a93e74bf | |
| | e60ec94d35 | |
| | 84af5fd0a3 | |
| | dbb3edec77 | |
| | d284b46a3e | |
| | 9fcb4d222b | |
| | d0bb1ad141 | |
| | b299aaed93 | |
| | abb3224cc5 | |
| | 1c66d06702 | |
| | e00e80ae39 | |
| | 4f4f106c48 | |
| | a286cc9d55 | |
| | 53bb1c719b | |
| | 98d5aa17e2 | |
| | aaaa80e4b8 | |
| | e70e926a40 | |
| | e80c1f6d59 | |
| | 24de360325 | |
| | e0039bc1e6 | |
| | ae5c4a0109 | |
| | 1d367a0da0 | |
| | d285f7ee4a | |
| | 37c84021a2 | |
| | 8ee9de4291 | |
| | 249b63453b | |
| | 1c0017d763 | |
| | df51e23639 | |
| | 32e71a43b8 | |
| | 47a1e6ddfa | |
| | c5f41457bb | |
| | f1e0c44bdd | |
| | 9d2e390b6a | |
| | 75a58b435d | |
| | f5474d34ac | |
| | c962d2544f | |
| | 0b87a4a810 | |
| | 1882afb8b6 | |
| | 2270c8737a | |
| | d6794955a4 | |
| | f5520f45ef | |
| | 9401b5ae13 | |
| | df64a62a03 | |
| | 09cea66aa8 | |
| | 13cc33e0a5 | |
| | ab36c8c9de | |
| | f85d4ce82f | |
| | 6bec4c28ba | |
| | fad1449259 | |
| | 86b3b57137 | |
| | b235037dd3 | |
| | 3108139d51 | |
| | 2ae99ecfa0 | |
| | e8ab53c270 | |
| | 5e9bc1127d | |
| | 415e61c3c9 | |
| | 5152f37ec8 | |
| | 0dbeb010cf | |
| | 17c465bed7 | |
| | add04478e5 | |
| | 6db72d7166 | |
| | 868103a9c5 | |
| | 0f37718671 | |
| | fa1445df86 | |
| | a783e7071e | |
| | a9919df5af | |
| | b0af31ac35 | |
| | c4c964a685 | |
| | 348ec71398 | |
| | a257ccc8b3 | |
| | fcc4296040 | |
| | 1684d05d49 | |
| | 0006f933a2 | |
| | 0484f97c9c | |
| | e430b2567a | |
| | fbc8ee15da | |
| | 68a9c05947 | |
| | 0a81aba899 | |
| | d2ae822e15 | |
| | fac4b08526 | |
| | 3a7b43c663 | |
| | 8fcb2d1554 | |
| | 590c763659 | |
| | 11d1267f8c | |
| | 8f5bae95ce | |
| | e6b12ef14c | |
| | b65674618b | |
| | 20dca2bea5 | |
| | 059e93cdcf | |
| | 635ab25013 | |
| | 995cd10df8 | |
| | 50f3820a6d | |
| | 617f3ea861 | |
| | 788db47b95 | |
| | 5fa8aaabb9 | |
| | 89d1af7f33 | |
| | 799cf27c5d | |
| | c930d8f773 | |
| | a7f921abb9 | |
| | bc6234e032 | |
| | 558bfa4e1e | |
| | 5d19f23372 | |
| | 27f08cdbfa | |
| | 993213e2c0 | |
| | 49470c05fa | |
| | ee0a060b79 | |
| | 500e3157b9 | |
| | eba86b1d23 | |
| | b69a563fc2 | |
| | a900c36395 | |
| | 1d9b324d3e | |
| | 539e7b8efe | |
| | 50a477ee47 | |
| | 7000123a8b | |
| | d48a7d2398 | |
| | 389a00ce59 | |
| | 7a460de3c2 | |
| | 8ea1f4a751 | |
| | 1c69ccc6cd | |
| | 84b5bbd3b6 | |
| | 9ccd327298 | |
| | 11df36f3cf | |
| | f62dd0e3cc | |
| | ad18b6e15e | |
| | c00b80ca29 | |
| | 92ed4ba3f8 | |
| | 7de9775dd9 | |
| | 5ce9060e5c | |
| | f727d5cb5a | |
| | 4735fb1ebb | |
| | c7d05cc13d | |
| | 51c152ff4a | |
| | eeed2a840c | |
| | 4aaa111925 | |
| | e31248f018 | |
| | 8b4cf022f2 | |
| | 4e7455268a | |
| | 680f8ae814 | |
| | 90555a4cea | |
| | 56a62db591 | |
| | cf51997680 | |
| | f05cc18d61 | |
| | 5384c2e0f5 | |
| | 9bfbf80a0e | |
| | f874d7754f | |
| | a669f79480 | |
| | 1c3894743a | |
| | 75cdf17df4 | |
| | de7dd1e60a | |
| | 0ee574a718 | |
| | faac894706 | |
| | dac2fad48e | |
| | 77f624b01e | |
| | e24ffebfc8 | |
| | 70d07d1609 | |
| | bfb3303d87 | |
| | 660705a436 | |
| | 74a3f97671 | |
| | b3e35bb494 | |
| | 76adac7c72 | |
| | 5dc75ebb67 | |
| | d686ce12b6 | |
| | d3c40a423e | |
| | 2fb1e6dab8 | |
| | 10430b347f | |
| | e0e3f6ac3e | |
| | c694cbffdc | |
| | bdd0e5d771 | |
| | aa98e427f0 | |
| | daa6f4c94c | |
| | 4a76663fb2 | |
| | cebda5028a | |
| | 3fa377a580 | |
| | a11c1005a8 | |
| | 4a6aea9328 | |
| | 4ca041e93e | |
| | 52a866a405 | |
| | 8b6bd0e6ac | |
| | 780fc4639a | |
| | 3692fc9d83 | |
| | c2a0b1b4c6 | |
| | 21bbdb5419 | |
| | aa1c08962c | |
| | 8a5d0399dd | |
| | f2cd0b0c4a | |
| | c2b66bbe73 | |
| | 48b957f1d5 | |
| | 3683984c8d | |
| | a3431512d8 | |
| | d832b787e7 | |
| | 6f75b02723 | |
| | b8241710bd | |
| | d638404b6a | |
| | 9362ca3ed9 | |
| | d1a03c6d17 | |
| | c6c31702c2 | |
| | bd2d88c96e | |
| | 76b1857e4e | |
| | 095bd17d10 | |
| | 204bfac3fa | |
| | ac49b0ca93 | |
| | c5b04f6fef | |
| | 5c58fda46d | |
| | 062730c70c | |
| | cade1990ce | |
| | 59b6e61816 | |
| | daff7ff158 | |
| | 0862860961 | |
| | 1cb24045a0 | |
| | 622358b172 | |
| | 7998884a9d | |
| | 51ddecd101 | |
| | 7a35ab1d1e | |
| | 48564ba52a | |
| | 49efffd740 | |
| | d6ac224c8f | |
| | a772b8c3f2 | |
| | b580953dcd | |
| | d86653c763 | |
| | dded4fca76 | |
| | 36365ffa6b | |
| | 0f9aeeaa27 | |
| | d8ebcd0ef7 | |
| | 6e445487b1 | |
| | 6605e461c7 | |
| | 40ce4e2275 | |
| | 8fef9e363e | |
| | 4792c2770d | |
| | 87bb49da36 | |
| | 1c0071d9ce | |
| | efded35c2e | |
| | 1d74240b9a | |
| | 098184ff7b | |
| | 4083533916 | |
| | feb1acd43a | |
| | a9591db734 | |
| | 9ebf148cbe | |
| | a473e5e19a | |
| | 5d3034c231 | |
| | c3a895af64 | |
| | cea5aecbf2 | |
| | 0e61e70670 | |
| | 1e333c0939 | |
| | 917b6ec03c | |
| | fe67c52ead | |
| | 909c7bee3e | |
| | 27ca54d138 | |
| | 2147c3a646 | |
| | a99120116f | |
| | 802efeaff2 | |
| | 9ad3af1ef6 | |
| | 715727b811 | |
| | c6eaa7b836 | |
| | c2fceea2a5 | |
| | 190e11f7ea | |
| | ad7413a5ff | |
| | 903b9e627a | |
| | c5c1e96cf8 | |
| | 62fbb04c9d | |
| | 728dc62d0b | |
| | 2dfe1b1c6b | |
| | 35d4a1a6af | |
| | eb3fa5aa6b | |
| | 438384425a | |
| | 0b6f102436 | |
| | c9b7ec72d8 | |
| | 256c7f1789 | |
| | 4e5a323c62 | |
| | f4a3bbd237 | |
| | fe73f2d579 | |
| | f79fcc7073 | |
| | 4c4b3790c7 | |
| | bd60b464bb | |
| | 6bce852765 | |
| | 3b19a5a59d | |
| | f024583011 | |
| | 1111baacb2 | |
| | 1b9c913efb | |
| | 3524c36e1b | |
| | cf87cea9f8 | |
| | bfa34404b8 | |
| | 0aba5f35bf | |
| | 663bc0842a | |
| | 7d10c96e73 | |
| | 6b2720fab0 | |
| | e74ad5132a | |
| | 1f6f89c1fd | |
| | 4d55e60980 | |
| | ddaaccd5af | |
| | c20b7dac3d | |
| | 1f779d5094 | |
| | 715401ca8e | |
| | e7cd922d8b | |
| | 187feee0c1 | |
| | 49e962a7dc | |
| | 633ff601e5 | |
| | 331cf37054 | |
| | 23e4b9002f | |
| | c0de3c8053 | |
| | a82a3b084a | |
| | 67c298e66b | |
| | c110ccb9ae | |
| | 0143380306 | |
| | af9000d3c8 | |
| | 097d798e5e | |
| | 1d9f9f221a | |
| | 214a367f48 | |
| | 2fb46551a2 | |
| | 6bcf330ae0 | |
| | 2075a8b18c | |
| | 1275ac6c42 | |
| | 708f20b7af | |
| | a2c0c708e8 | |
| | 2f2c65d91e | |
| | cd5fcc7ca7 | |
| | aa29e7be48 | |
| | 93febe34b0 | |
| | f086e6d3c1 | |
| | 22e51e1c96 | |
| | 63a5336f31 | |
| | bfc6c53cc5 | |
| | 236017f310 | |
| | 0a1d9b4dfd | |
| | b50d090946 | |
| | 00b5db52cf | |
| | 24cb30e2c5 | |
| | 4549145ab5 | |
| | 67b0217754 | |
| | ccae9efdf0 | |
| | 59d596b222 | |
| | 4878eb2c45 | |
| | 7755392f57 | |
| | dc2ea20959 | |
| | 8eaea2bd17 | |
| | 58e559918f | |
| | f38a3fca5b | |
| | 1ea145b384 | |
| | 0d9567575a | |
| | e82f176289 | |
| | d4b51c040e | |
| | 125d0efbd8 | |
| | 3215afc504 | |
| | c73ff3ce1b | |
| | f9c159a051 | |
| | 2ab1325c90 | |
| | 5b0f7ff506 | |
| | 9269bc84f2 | |
| | 4e8b651e18 | |
| | 65b4f79534 | |
| | 5dd43dbc45 | |
| | 5f73074c7e | |
| | f5d6ba27b2 | |
| | 73fa70b41f | |
| | 2a1cda42e7 | |
| | 1bd7e31466 | |
| | eb49e1fb4a | |
| | 9838c2f0ce | |
| | 6041df8370 | |
| | 2933dce3ef | |
| | dab377d37b | |
| | f35e41baf1 | |
| | c4083a2942 | |
| | 36c20bbe53 | |
| | e34634f5af | |
| | cba9e5b669 | |
| | 1f3c46a6b0 | |
| | 799a5ffa47 | |
| | b000707c10 | |
| | feba4de1d6 | |
| | 951fdb27ca | |
| | 9697fb3d84 | |
| | 2dbed4500a | |
| | fd9d0e433d | |
| | f096f3ef81 | |
| | cc4a063695 | |
| | b64cabc3c9 | |
| | 3dd460717c | |
| | bf658a522b | |
| | e9be7e712d | |
| | e40cd2a809 | |
| | dbabeb9692 | |
| | 8dd37d76b0 | |
| | fd475aa358 | |
| | f0988c0e32 | |
| | 0632f09bff | |
| | ba599aaca0 | |
| | ff05919e89 | |
| | 52e63fa101 | |
| | 96ceccd12a | |
| | 87994fe006 | |
| | fa12c81a03 | |
| | 344ce63455 | |

14

.gitignore

vendored

@@ -5,13 +5,16 @@ __pycache__/
MANIFEST.in
MANIFEST
copyparty.egg-info/
buildenv/
build/
dist/
sfx/
py2/
.venv/

/buildenv/
/build/
/dist/
/py2/
/sfx*
/unt/
/log/

# ide
*.sublime-workspace

@@ -19,6 +22,7 @@ py2/
*.bak

# derived
copyparty/res/COPYING.txt
copyparty/web/deps/
srv/

25

.vscode/settings.json

vendored

@@ -23,7 +23,6 @@
"terminal.ansiBrightWhite": "#ffffff",
},
"python.testing.pytestEnabled": false,
"python.testing.nosetestsEnabled": false,
"python.testing.unittestEnabled": true,
"python.testing.unittestArgs": [
"-v",
@@ -35,18 +34,40 @@
"python.linting.pylintEnabled": true,
"python.linting.flake8Enabled": true,
"python.linting.banditEnabled": true,
"python.linting.mypyEnabled": true,
"python.linting.mypyArgs": [
"--ignore-missing-imports",
"--follow-imports=silent",
"--show-column-numbers",
"--strict"
],
"python.linting.flake8Args": [
"--max-line-length=120",
"--ignore=E722,F405,E203,W503,W293,E402",
"--ignore=E722,F405,E203,W503,W293,E402,E501,E128",
],
"python.linting.banditArgs": [
"--ignore=B104"
],
"python.linting.pylintArgs": [
"--disable=missing-module-docstring",
"--disable=missing-class-docstring",
"--disable=missing-function-docstring",
"--disable=wrong-import-position",
"--disable=raise-missing-from",
"--disable=bare-except",
"--disable=invalid-name",
"--disable=line-too-long",
"--disable=consider-using-f-string"
],
// python3 -m isort --py=27 --profile=black copyparty/
"python.formatting.provider": "black",
"editor.formatOnSave": true,
"[html]": {
"editor.formatOnSave": false,
},
"[css]": {
"editor.formatOnSave": false,
},
"files.associations": {
"*.makefile": "makefile"
},

374

README.md

@@ -9,11 +9,12 @@

|

||||

turn your phone or raspi into a portable file server with resumable uploads/downloads using *any* web browser

|

||||

|

||||

* server only needs `py2.7` or `py3.3+`, all dependencies optional

|

||||

* browse/upload with IE4 / netscape4.0 on win3.11 (heh)

|

||||

* *resumable* uploads need `firefox 34+` / `chrome 41+` / `safari 7+` for full speed

|

||||

* code standard: `black`

|

||||

* browse/upload with [IE4](#browser-support) / netscape4.0 on win3.11 (heh)

|

||||

* *resumable* uploads need `firefox 34+` / `chrome 41+` / `safari 7+`

|

||||

|

||||

📷 **screenshots:** [browser](#the-browser) // [upload](#uploading) // [unpost](#unpost) // [thumbnails](#thumbnails) // [search](#searching) // [fsearch](#file-search) // [zip-DL](#zip-downloads) // [md-viewer](#markdown-viewer) // [ie4](#browser-support)

|

||||

try the **[read-only demo server](https://a.ocv.me/pub/demo/)** 👀 running from a basement in finland

|

||||

|

||||

📷 **screenshots:** [browser](#the-browser) // [upload](#uploading) // [unpost](#unpost) // [thumbnails](#thumbnails) // [search](#searching) // [fsearch](#file-search) // [zip-DL](#zip-downloads) // [md-viewer](#markdown-viewer)

|

||||

|

||||

|

||||

## get the app

|

||||

@@ -43,26 +44,33 @@ turn your phone or raspi into a portable file server with resumable uploads/down

|

||||

* [tabs](#tabs) - the main tabs in the ui

|

||||

* [hotkeys](#hotkeys) - the browser has the following hotkeys

|

||||

* [navpane](#navpane) - switching between breadcrumbs or navpane

|

||||

* [thumbnails](#thumbnails) - press `g` to toggle grid-view instead of the file listing

|

||||

* [thumbnails](#thumbnails) - press `g` or `田` to toggle grid-view instead of the file listing

|

||||

* [zip downloads](#zip-downloads) - download folders (or file selections) as `zip` or `tar` files

|

||||

* [uploading](#uploading) - drag files/folders into the web-browser to upload

|

||||

* [file-search](#file-search) - dropping files into the browser also lets you see if they exist on the server

|

||||

* [unpost](#unpost) - undo/delete accidental uploads

|

||||

* [self-destruct](#self-destruct) - uploads can be given a lifetime

|

||||

* [file manager](#file-manager) - cut/paste, rename, and delete files/folders (if you have permission)

|

||||

* [batch rename](#batch-rename) - select some files and press `F2` to bring up the rename UI

|

||||

* [markdown viewer](#markdown-viewer) - and there are *two* editors

|

||||

* [other tricks](#other-tricks)

|

||||

* [searching](#searching) - search by size, date, path/name, mp3-tags, ...

|

||||

* [server config](#server-config) - using arguments or config files, or a mix of both

|

||||

* [qr-code](#qr-code) - print a qr-code [(screenshot)](https://user-images.githubusercontent.com/241032/194728533-6f00849b-c6ac-43c6-9359-83e454d11e00.png) for quick access

|

||||

* [ftp-server](#ftp-server) - an FTP server can be started using `--ftp 3921`

|

||||

* [file indexing](#file-indexing)

|

||||

* [upload rules](#upload-rules) - set upload rules using volume flags

|

||||

* [file indexing](#file-indexing) - enables dedup and music search ++

|

||||

* [exclude-patterns](#exclude-patterns) - to save some time

|

||||

* [filesystem guards](#filesystem-guards) - avoid traversing into other filesystems

|

||||

* [periodic rescan](#periodic-rescan) - filesystem monitoring

|

||||

* [upload rules](#upload-rules) - set upload rules using volflags

|

||||

* [compress uploads](#compress-uploads) - files can be autocompressed on upload

|

||||

* [other flags](#other-flags)

|

||||

* [database location](#database-location) - in-volume (`.hist/up2k.db`, default) or somewhere else

|

||||

* [metadata from audio files](#metadata-from-audio-files) - set `-e2t` to index tags on upload

|

||||

* [file parser plugins](#file-parser-plugins) - provide custom parsers to index additional tags, also see [./bin/mtag/README.md](./bin/mtag/README.md)

|

||||

* [file parser plugins](#file-parser-plugins) - provide custom parsers to index additional tags

|

||||

* [upload events](#upload-events) - trigger a script/program on each upload

|

||||

* [hiding from google](#hiding-from-google) - tell search engines you dont wanna be indexed

|

||||

* [themes](#themes)

|

||||

* [complete examples](#complete-examples)

|

||||

* [browser support](#browser-support) - TLDR: yes

|

||||

* [client examples](#client-examples) - interact with copyparty using non-browser clients

|

||||

@@ -86,6 +94,7 @@ turn your phone or raspi into a portable file server with resumable uploads/down

|

||||

* [optional gpl stuff](#optional-gpl-stuff)

|

||||

* [sfx](#sfx) - the self-contained "binary"

|

||||

* [sfx repack](#sfx-repack) - reduce the size of an sfx by removing features

|

||||

* [copyparty.exe](#copypartyexe)

|

||||

* [install on android](#install-on-android)

|

||||

* [reporting bugs](#reporting-bugs) - ideas for context to include in bug reports

|

||||

* [building](#building)

|

||||

@@ -100,15 +109,17 @@ turn your phone or raspi into a portable file server with resumable uploads/down

|

||||

|

||||

download **[copyparty-sfx.py](https://github.com/9001/copyparty/releases/latest/download/copyparty-sfx.py)** and you're all set!

|

||||

|

||||

running the sfx without arguments (for example doubleclicking it on Windows) will give everyone read/write access to the current folder; see `-h` for help if you want [accounts and volumes](#accounts-and-volumes) etc

|

||||

if you cannot install python, you can use [copyparty.exe](#copypartyexe) instead

|

||||

|

||||

running the sfx without arguments (for example doubleclicking it on Windows) will give everyone read/write access to the current folder; you may want [accounts and volumes](#accounts-and-volumes)

|

||||

|

||||

some recommended options:

|

||||

* `-e2dsa` enables general [file indexing](#file-indexing)

|

||||

* `-e2ts` enables audio metadata indexing (needs either FFprobe or Mutagen), see [optional dependencies](#optional-dependencies)

|

||||

* `-v /mnt/music:/music:r:rw,foo -a foo:bar` shares `/mnt/music` as `/music`, `r`eadable by anyone, and read-write for user `foo`, password `bar`

|

||||

* replace `:r:rw,foo` with `:r,foo` to only make the folder readable by `foo` and nobody else

|

||||

* see [accounts and volumes](#accounts-and-volumes) for the syntax and other permissions (`r`ead, `w`rite, `m`ove, `d`elete, `g`et)

|

||||

* `--ls '**,*,ln,p,r'` to crash on startup if any of the volumes contain a symlink which point outside the volume, as that could give users unintended access

|

||||

* see [accounts and volumes](#accounts-and-volumes) for the syntax and other permissions (`r`ead, `w`rite, `m`ove, `d`elete, `g`et, up`G`et)

|

||||

* `--ls '**,*,ln,p,r'` to crash on startup if any of the volumes contain a symlink which point outside the volume, as that could give users unintended access (see `--help-ls`)

|

||||

|

||||

|

||||

### on servers

|

||||

@@ -157,16 +168,18 @@ feature summary

|

||||

* ☑ volumes (mountpoints)

|

||||

* ☑ [accounts](#accounts-and-volumes)

|

||||

* ☑ [ftp-server](#ftp-server)

|

||||

* ☑ [qr-code](#qr-code) for quick access

|

||||

* upload

|

||||

* ☑ basic: plain multipart, ie6 support

|

||||

* ☑ [up2k](#uploading): js, resumable, multithreaded

|

||||

* ☑ stash: simple PUT filedropper

|

||||

* ☑ [unpost](#unpost): undo/delete accidental uploads

|

||||

* ☑ [self-destruct](#self-destruct) (specified server-side or client-side)

|

||||

* ☑ symlink/discard existing files (content-matching)

|

||||

* download

|

||||

* ☑ single files in browser

|

||||

* ☑ [folders as zip / tar files](#zip-downloads)

|

||||

* ☑ FUSE client (read-only)

|

||||

* ☑ [FUSE client](https://github.com/9001/copyparty/tree/hovudstraum/bin#copyparty-fusepy) (read-only)

|

||||

* browser

|

||||

* ☑ [navpane](#navpane) (directory tree sidebar)

|

||||

* ☑ file manager (cut/paste, delete, [batch-rename](#batch-rename))

|

||||

@@ -174,7 +187,7 @@ feature summary

|

||||

* ☑ image gallery with webm player

|

||||

* ☑ textfile browser with syntax highlighting

|

||||

* ☑ [thumbnails](#thumbnails)

|

||||

* ☑ ...of images using Pillow

|

||||

* ☑ ...of images using Pillow, pyvips, or FFmpeg

|

||||

* ☑ ...of videos using FFmpeg

|

||||

* ☑ ...of audio (spectrograms) using FFmpeg

|

||||

* ☑ cache eviction (max-age; maybe max-size eventually)

|

||||

@@ -202,6 +215,7 @@ project goals / philosophy

|

||||

* inverse linux philosophy -- do all the things, and do an *okay* job

|

||||

* quick drop-in service to get a lot of features in a pinch

|

||||

* there are probably [better alternatives](https://github.com/awesome-selfhosted/awesome-selfhosted) if you have specific/long-term needs

|

||||

* but the resumable multithreaded uploads are p slick ngl

|

||||

* run anywhere, support everything

|

||||

* as many web-browsers and python versions as possible

|

||||

* every browser should at least be able to browse, download, upload files

|

||||

@@ -232,7 +246,6 @@ some improvement ideas

|

||||

|

||||

# bugs

|

||||

|

||||

* Windows: python 3.7 and older cannot read tags with FFprobe, so use Mutagen or upgrade

|

||||

* Windows: python 2.7 cannot index non-ascii filenames with `-e2d`

|

||||

* Windows: python 2.7 cannot handle filenames with mojibake

|

||||

* `--th-ff-jpg` may fix video thumbnails on some FFmpeg versions (macos, some linux)

|

||||

@@ -240,13 +253,27 @@ some improvement ideas

|

||||

|

||||

## general bugs

|

||||

|

||||

* Windows: if the up2k db is on a samba-share or network disk, you'll get unpredictable behavior if the share is disconnected for a bit

|

||||

* Windows: if the `up2k.db` (filesystem index) is on a samba-share or network disk, you'll get unpredictable behavior if the share is disconnected for a bit

|

||||

* use `--hist` or the `hist` volflag (`-v [...]:c,hist=/tmp/foo`) to place the db on a local disk instead

|

||||

* all volumes must exist / be available on startup; up2k (mtp especially) gets funky otherwise

|

||||

* [the database can get stuck](https://github.com/9001/copyparty/issues/10)

|

||||

* has only happened once but that is once too many

|

||||

* luckily not dangerous for file integrity and doesn't really stop uploads or anything like that

|

||||

* but would really appreciate some logs if anyone ever runs into it again

|

||||

* probably more, pls let me know

|

||||

|

||||

## not my bugs

|

||||

|

||||

* [Chrome issue 1317069](https://bugs.chromium.org/p/chromium/issues/detail?id=1317069) -- if you try to upload a folder which contains symlinks by dragging it into the browser, the symlinked files will not get uploaded

|

||||

|

||||

* [Chrome issue 1354816](https://bugs.chromium.org/p/chromium/issues/detail?id=1354816) -- chrome may eat all RAM uploading over plaintext http with `mt` enabled

|

||||

|

||||

* more amusingly, [Chrome issue 1354800](https://bugs.chromium.org/p/chromium/issues/detail?id=1354800) -- chrome may eat all RAM uploading in general (altho you probably won't run into this one)

|

||||

|

||||

* [Chrome issue 1352210](https://bugs.chromium.org/p/chromium/issues/detail?id=1352210) -- plaintext http may be faster at filehashing than https (but also extremely CPU-intensive and likely to run into the above gc bugs)

|

||||

|

||||

* [Firefox issue 1790500](https://bugzilla.mozilla.org/show_bug.cgi?id=1790500) -- sometimes forgets to close filedescriptors during upload so the browser can crash after ~4000 files

|

||||

|

||||

* iPhones: the volume control doesn't work because [apple doesn't want it to](https://developer.apple.com/library/archive/documentation/AudioVideo/Conceptual/Using_HTML5_Audio_Video/Device-SpecificConsiderations/Device-SpecificConsiderations.html#//apple_ref/doc/uid/TP40009523-CH5-SW11)

|

||||

* *future workaround:* enable the equalizer, make it all-zero, and set a negative boost to reduce the volume

|

||||

* "future" because `AudioContext` is broken in the current iOS version (15.1), maybe one day...

|

||||

@@ -260,6 +287,9 @@ some improvement ideas

|

||||

* VirtualBox: sqlite throws `Disk I/O Error` when running in a VM and the up2k database is in a vboxsf

|

||||

* use `--hist` or the `hist` volflag (`-v [...]:c,hist=/tmp/foo`) to place the db inside the vm instead

|

||||

|

||||

* Ubuntu: dragging files from certain folders into firefox or chrome is impossible

|

||||

* due to snap security policies -- see `snap connections firefox` for the allowlist, `removable-media` permits all of `/mnt` and `/media` apparently

|

||||

|

||||

|

||||

# FAQ

|

||||

|

||||

@@ -270,7 +300,7 @@ some improvement ideas

|

||||

* you can also do this with linux filesystem permissions; `chmod 111 music` will make it possible to access files and folders inside the `music` folder but not list the immediate contents -- also works with other software, not just copyparty

|

||||

|

||||

* can I make copyparty download a file to my server if I give it a URL?

|

||||

* not officially, but there is a [terrible hack](https://github.com/9001/copyparty/blob/hovudstraum/bin/mtag/wget.py) which makes it possible

|

||||

* not really, but there is a [terrible hack](https://github.com/9001/copyparty/blob/hovudstraum/bin/mtag/wget.py) which makes it possible

|

||||

|

||||

|

||||

# accounts and volumes

|

||||

@@ -278,6 +308,8 @@ some improvement ideas

|

||||

per-folder, per-user permissions - if your setup is getting complex, consider making a [config file](./docs/example.conf) instead of using arguments

|

||||

* much easier to manage, and you can modify the config at runtime with `systemctl reload copyparty` or more conveniently using the `[reload cfg]` button in the control-panel (if logged in as admin)

|

||||

|

||||

a quick summary can be seen using `--help-accounts`

|

||||

|

||||

configuring accounts/volumes with arguments:

|

||||

* `-a usr:pwd` adds account `usr` with password `pwd`

|

||||

* `-v .::r` adds current-folder `.` as the webroot, `r`eadable by anyone

|

||||

@@ -291,6 +323,7 @@ permissions:

|

||||

* `m` (move): move files/folders *from* this folder

|

||||

* `d` (delete): delete files/folders

|

||||

* `g` (get): only download files, cannot see folder contents or zip/tar

|

||||

* `G` (upget): same as `g` except uploaders get to see their own filekeys (see `fk` in examples below)

|

||||

|

||||

examples:

|

||||

* add accounts named u1, u2, u3 with passwords p1, p2, p3: `-a u1:p1 -a u2:p2 -a u3:p3`

|

||||

@@ -301,17 +334,21 @@ examples:

|

||||

* unauthorized users accessing the webroot can see that the `inc` folder exists, but cannot open it

|

||||

* `u1` can open the `inc` folder, but cannot see the contents, only upload new files to it

|

||||

* `u2` can browse it and move files *from* `/inc` into any folder where `u2` has write-access

|

||||

* make folder `/mnt/ss` available at `/i`, read-write for u1, get-only for everyone else, and enable accesskeys: `-v /mnt/ss:i:rw,u1:g:c,fk=4`

|

||||

* `c,fk=4` sets the `fk` volume-flag to 4, meaning each file gets a 4-character accesskey

|

||||

* `u1` can upload files, browse the folder, and see the generated accesskeys

|

||||

* other users cannot browse the folder, but can access the files if they have the full file URL with the accesskey

|

||||

* make folder `/mnt/ss` available at `/i`, read-write for u1, get-only for everyone else, and enable filekeys: `-v /mnt/ss:i:rw,u1:g:c,fk=4`

|

||||

* `c,fk=4` sets the `fk` (filekey) volflag to 4, meaning each file gets a 4-character accesskey

|

||||

* `u1` can upload files, browse the folder, and see the generated filekeys

|

||||

* other users cannot browse the folder, but can access the files if they have the full file URL with the filekey

|

||||

* replacing the `g` permission with `wg` would let anonymous users upload files, but not see the required filekey to access it

|

||||

* replacing the `g` permission with `wG` would let anonymous users upload files, receiving a working direct link in return
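
The `-v src:dst:perms,users:c,flags` syntax shown in the examples above can be illustrated with a small parser. This is only a sketch and not copyparty's actual parser: the function name `parse_volume`, the returned dict layout, and the use of `"*"` to mean "anyone" are all assumptions made for illustration, and it ignores cases such as Windows paths that contain a colon.

```python
def parse_volume(spec):
    """Split a '-v' style volume spec into its parts (illustrative only)."""
    src, dst, *groups = spec.split(":")
    perms = {}  # username -> set of permission letters; "*" is a stand-in for anyone
    flags = {}  # volflag name -> value (True when the flag has no value)
    for group in groups:
        head, *rest = group.split(",")
        if head == "c":
            # a group starting with "c" carries volflags such as fk=4
            for item in rest:
                name, _, value = item.partition("=")
                flags[name] = value or True
        else:
            # "rw,u1" grants r and w to u1; a bare "r" applies to everyone
            for user in rest or ["*"]:
                perms.setdefault(user, set()).update(head)
    return {"src": src, "dst": "/" + dst.strip("/"), "perms": perms, "flags": flags}


vol = parse_volume("/mnt/ss:i:rw,u1:g:c,fk=4")
print(vol["dst"], vol["perms"], vol["flags"])
```

Under these assumptions, `/mnt/ss:i:rw,u1:g:c,fk=4` yields the URL path `/i`, read-write for `u1`, get-only for everyone else, and the `fk` volflag set to `4`.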

|

||||

|

||||

anyone trying to bruteforce a password gets banned according to `--ban-pw`; default is 24h ban for 9 failed attempts in 1 hour
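
The default `--ban-pw` policy described above (a 24h ban after 9 failed attempts within 1 hour) behaves like a sliding-window counter. The sketch below shows one way such a policy could work; it is not copyparty's implementation, and the class name `BanTracker`, its methods, and the per-IP keying are hypothetical.

```python
import time
from collections import defaultdict, deque


class BanTracker:
    """Sliding-window failure counter (illustrative, not copyparty's code)."""

    def __init__(self, max_fails=9, window_sec=3600, ban_sec=86400):
        self.max_fails = max_fails
        self.window_sec = window_sec
        self.ban_sec = ban_sec
        self.fails = defaultdict(deque)  # ip -> timestamps of recent failures
        self.banned_until = {}           # ip -> time when the ban expires

    def is_banned(self, ip, now=None):
        now = time.time() if now is None else now
        return self.banned_until.get(ip, 0) > now

    def record_failure(self, ip, now=None):
        """Register a failed login; return True if this one triggers a ban."""
        now = time.time() if now is None else now
        q = self.fails[ip]
        q.append(now)
        # forget failures that have fallen out of the window
        while q and q[0] <= now - self.window_sec:
            q.popleft()
        if len(q) >= self.max_fails:
            self.banned_until[ip] = now + self.ban_sec
            q.clear()
            return True
        return False
```

Passing `now` explicitly keeps the sketch easy to test; a real server would presumably rely on the wall clock.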

|

||||

|

||||

|

||||

# the browser

|

||||

|

||||

accessing a copyparty server using a web-browser

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

## tabs

|

||||

@@ -334,7 +371,7 @@ the browser has the following hotkeys (always qwerty)

|

||||

* `I/K` prev/next folder

|

||||

* `M` parent folder (or unexpand current)

|

||||

* `V` toggle folders / textfiles in the navpane

|

||||

* `G` toggle list / [grid view](#thumbnails)

|

||||

* `G` toggle list / [grid view](#thumbnails) -- same as `田` bottom-right

|

||||

* `T` toggle thumbnails / icons

|

||||

* `ESC` close various things

|

||||

* `ctrl-X` cut selected files/folders

|

||||

@@ -355,19 +392,24 @@ the browser has the following hotkeys (always qwerty)

|

||||

* `U/O` skip 10sec back/forward

|

||||

* `0..9` jump to 0%..90%

|

||||

* `P` play/pause (also starts playing the folder)

|

||||

* `Y` download file

|

||||

* when viewing images / playing videos:

|

||||

* `J/L, Left/Right` prev/next file

|

||||

* `Home/End` first/last file

|

||||

* `F` toggle fullscreen

|

||||

* `S` toggle selection

|

||||

* `R` rotate clockwise (shift=ccw)

|

||||

* `Y` download file

|

||||

* `Esc` close viewer

|

||||

* videos:

|

||||

* `U/O` skip 10sec back/forward

|

||||

* `0..9` jump to 0%..90%

|

||||

* `P/K/Space` play/pause

|

||||

* `F` fullscreen

|

||||

* `C` continue playing next video

|

||||

* `V` loop

|

||||

* `M` mute

|

||||

* `C` continue playing next video

|

||||

* `V` loop entire file

|

||||

* `[` loop range (start)

|

||||

* `]` loop range (end)

|

||||

* when the navpane is open:

|

||||

* `A/D` adjust tree width

|

||||

* in the [grid view](#thumbnails):

|

||||

@@ -399,11 +441,13 @@ click the `🌲` or pressing the `B` hotkey to toggle between breadcrumbs path (

|

||||

|

||||

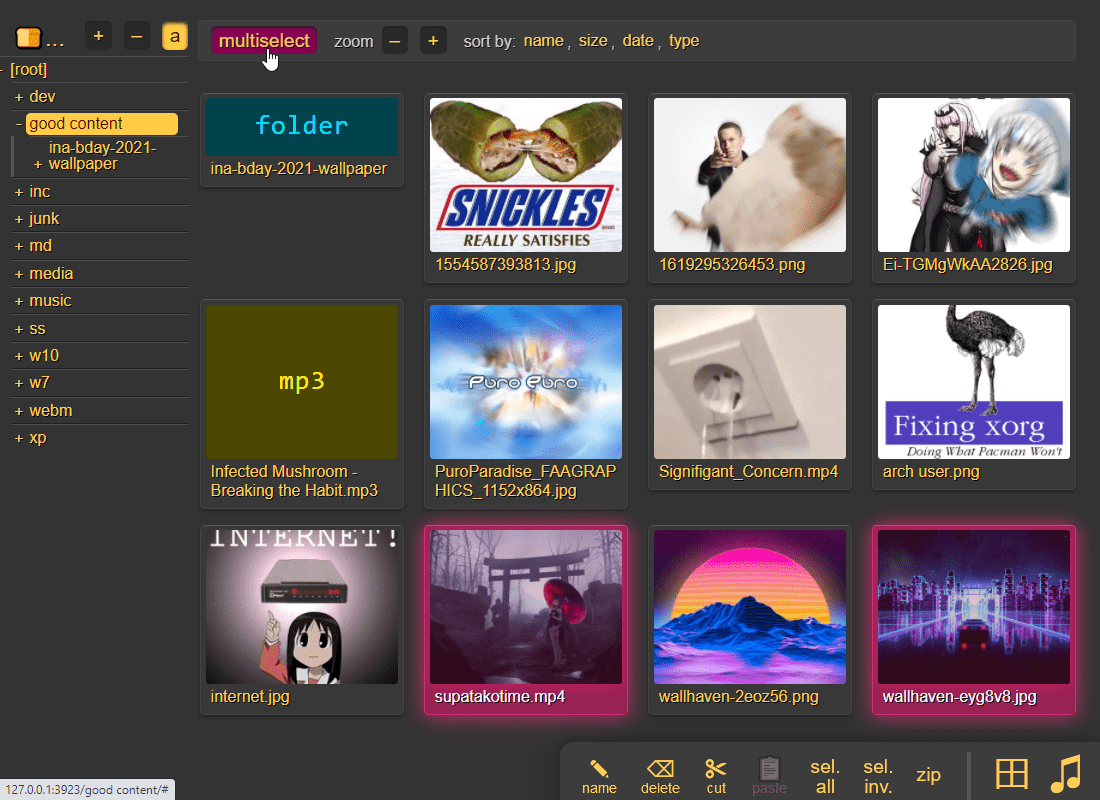

## thumbnails

|

||||

|

||||

press `g` to toggle grid-view instead of the file listing, and `t` toggles icons / thumbnails

|

||||

press `g` or `田` to toggle grid-view instead of the file listing and `t` toggles icons / thumbnails

|

||||

|

||||

|

||||

|

||||

it does static images with Pillow and uses FFmpeg for video files, so you may want to `--no-thumb` or maybe just `--no-vthumb` depending on how dangerous your users are

|

||||

it does static images with Pillow / pyvips / FFmpeg, and uses FFmpeg for video files, so you may want to `--no-thumb` or maybe just `--no-vthumb` depending on how dangerous your users are

|

||||

* pyvips is 3x faster than Pillow, Pillow is 3x faster than FFmpeg

|

||||

* disable thumbnails for specific volumes with volflag `dthumb` for all, or `dvthumb` / `dathumb` / `dithumb` for video/audio/images only

|

||||

|

||||

audio files are converted into spectrograms using FFmpeg unless you `--no-athumb` (and some FFmpeg builds may need `--th-ff-swr`)

|

||||

|

||||

@@ -439,13 +483,13 @@ you can also zip a selection of files or folders by clicking them in the browser

|

||||

|

||||

## uploading

|

||||

|

||||

drag files/folders into the web-browser to upload

|

||||

drag files/folders into the web-browser to upload (or use the [command-line uploader](https://github.com/9001/copyparty/tree/hovudstraum/bin#up2kpy))

|

||||

|

||||

this initiates an upload using `up2k`; there are two uploaders available:

|

||||

* `[🎈] bup`, the basic uploader, supports almost every browser since netscape 4.0

|

||||

* `[🚀] up2k`, the fancy one

|

||||

* `[🚀] up2k`, the good / fancy one

|

||||

|

||||

you can also undo/delete uploads by using `[🧯]` [unpost](#unpost)

|

||||

NB: you can undo/delete your own uploads with `[🧯]` [unpost](#unpost)

|

||||

|

||||

up2k has several advantages:

|

||||

* you can drop folders into the browser (files are added recursively)

|

||||

@@ -457,19 +501,19 @@ up2k has several advantages:

|

||||

* much higher speeds than ftp/scp/tarpipe on some internet connections (mainly american ones) thanks to parallel connections

|

||||

* the last-modified timestamp of the file is preserved

|

||||

|

||||

see [up2k](#up2k) for details on how it works

|

||||

see [up2k](#up2k) for details on how it works, or watch a [demo video](https://a.ocv.me/pub/demo/pics-vids/#gf-0f6f5c0d)

|

||||

|

||||

|

||||

|

||||

**protip:** you can avoid scaring away users with [contrib/plugins/minimal-up2k.html](contrib/plugins/minimal-up2k.html) which makes it look [much simpler](https://user-images.githubusercontent.com/241032/118311195-dd6ca380-b4ef-11eb-86f3-75a3ff2e1332.png)

|

||||

|

||||

**protip:** if you enable `favicon` in the `[⚙️] settings` tab (by typing something into the textbox), the icon in the browser tab will indicate upload progress

|

||||

**protip:** if you enable `favicon` in the `[⚙️] settings` tab (by typing something into the textbox), the icon in the browser tab will indicate upload progress -- also, the `[🔔]` and/or `[🔊]` switches enable visible and/or audible notifications on upload completion

|

||||

|

||||

the up2k UI is the epitome of polished intuitive experiences:

|

||||

* "parallel uploads" specifies how many chunks to upload at the same time

|

||||

* `[🏃]` analysis of other files should continue while one is uploading

|

||||

* `[🥔]` shows a simpler UI for faster uploads from slow devices

|

||||

* `[💭]` ask for confirmation before files are added to the queue

|

||||

* `[💤]` sync uploading between other copyparty browser-tabs so only one is active

|

||||

* `[🔎]` switch between upload and [file-search](#file-search) mode

|

||||

* ignore `[🔎]` if you add files by dragging them into the browser

|

||||

|

||||

@@ -481,7 +525,7 @@ and then theres the tabs below it,

|

||||

* plus up to 3 entries each from `[done]` and `[que]` for context

|

||||

* `[que]` is all the files that are still queued

|

||||

|

||||

note that since up2k has to read each file twice, `[🎈 bup]` can *theoretically* be up to 2x faster in some extreme cases (files bigger than your ram, combined with an internet connection faster than the read-speed of your HDD, or if you're uploading from a cuo2duo)

|

||||

note that since up2k has to read each file twice, `[🎈] bup` can *theoretically* be up to 2x faster in some extreme cases (files bigger than your ram, combined with an internet connection faster than the read-speed of your HDD, or if you're uploading from a cuo2duo)

|

||||

|

||||

if you are resuming a massive upload and want to skip hashing the files which already finished, you can enable `turbo` in the `[⚙️] config` tab, but please read the tooltip on that button

|

||||

|

||||

@@ -511,6 +555,17 @@ undo/delete accidental uploads

|

||||

you can unpost even if you don't have regular move/delete access, however only for files uploaded within the past `--unpost` seconds (default 12 hours) and the server must be running with `-e2d`

|

||||

|

||||

|

||||

### self-destruct

|

||||

|

||||

uploads can be given a lifetime, after which they expire / self-destruct

|

||||

|

||||

the feature must be enabled per-volume with the `lifetime` [upload rule](#upload-rules) which sets the upper limit for how long a file gets to stay on the server

|

||||

|

||||

clients can specify a shorter expiration time using the [up2k ui](#uploading) -- the relevant options become visible upon navigating into a folder with `lifetime` enabled -- or by using the `life` [upload modifier](#write)

|

||||

|

||||

specifying a custom expiration time client-side will affect the timespan in which unposts are permitted, so keep an eye on the estimates in the up2k ui

|

||||

|

||||

|

||||

## file manager

|

||||

|

||||

cut/paste, rename, and delete files/folders (if you have permission)

|

||||

@@ -592,7 +647,7 @@ and there are *two* editors

|

||||

|

||||

* get a plaintext file listing by adding `?ls=t` to a URL, or a compact colored one with `?ls=v` (for unix terminals)

|

||||

|

||||

* if you are using media hotkeys to switch songs and are getting tired of seeing the OSD popup which Windows doesn't let you disable, consider https://ocv.me/dev/?media-osd-bgone.ps1

|

||||

* if you are using media hotkeys to switch songs and are getting tired of seeing the OSD popup which Windows doesn't let you disable, consider [./contrib/media-osd-bgone.ps1](contrib/#media-osd-bgoneps1)

|

||||

|

||||

* click the bottom-left `π` to open a javascript prompt for debugging

|

||||

|

||||

@@ -615,7 +670,9 @@ path/name queries are space-separated, AND'ed together, and words are negated wi

|

||||

* path: `shibayan -bossa` finds all files where one of the folders contain `shibayan` but filters out any results where `bossa` exists somewhere in the path

|

||||

* name: `demetori styx` gives you [good stuff](https://www.youtube.com/watch?v=zGh0g14ZJ8I&list=PL3A147BD151EE5218&index=9)
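the path/name query rules above (space-separated terms, AND'ed together, `-` prefix to negate) can be sketched in a few lines of python. this is only an illustration, not copyparty's actual search code; the function name `matches`, the sample paths, and the case-insensitive comparison are all assumptions:

```python
def matches(query: str, path: str) -> bool:
    """Return True if `path` satisfies every term in `query`."""
    haystack = path.lower()  # assumption: comparison ignores case
    for term in query.lower().split():
        if term.startswith("-") and len(term) > 1:
            # negated term: must NOT appear anywhere in the path
            if term[1:] in haystack:
                return False
        elif term not in haystack:
            # plain term: must appear somewhere in the path
            return False
    return True


# hypothetical sample paths, for illustration only
paths = [
    "music/shibayan/album/track01.flac",
    "music/shibayan/bossa-nova/track02.flac",
    "music/other/track03.flac",
]
hits = [p for p in paths if matches("shibayan -bossa", p)]
```

here `hits` keeps only the first path: the second is dropped by `-bossa` and the third never mentions `shibayan`.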

|

||||

|

||||

add the argument `-e2ts` to also scan/index tags from music files, which brings us over to:

|

||||

the `raw` field allows for more complex stuff such as `( tags like *nhato* or tags like *taishi* ) and ( not tags like *nhato* or not tags like *taishi* )` which finds all songs by either nhato or taishi, excluding collabs (terrible example, why would you do that)

|

||||

|

||||

for the above example to work, add the commandline argument `-e2ts` to also scan/index tags from music files, which brings us over to:

|

||||

|

||||

|

||||

# server config

|

||||

@@ -626,6 +683,19 @@ using arguments or config files, or a mix of both:

|

||||

* or click the `[reload cfg]` button in the control-panel when logged in as admin

|

||||

|

||||

|

||||

## qr-code

|

||||

|

||||

print a qr-code [(screenshot)](https://user-images.githubusercontent.com/241032/194728533-6f00849b-c6ac-43c6-9359-83e454d11e00.png) for quick access, great between phones on android hotspots which keep changing the subnet

|

||||

|

||||

* `--qr` enables it

|

||||

* `--qrs` does https instead of http

|

||||

* `--qrl lootbox/?pw=hunter2` appends to the url, linking to the `lootbox` folder with password `hunter2`

|

||||

* `--qrz 1` forces 1x zoom instead of autoscaling to fit the terminal size

|

||||

* 1x may render incorrectly on some terminals/fonts, but 2x should always work

|

||||

|

||||

it will use your external ip (default route) unless `--qri` specifies an ip-prefix or domain

|

||||

|

||||

|

||||

## ftp-server

|

||||

|

||||

an FTP server can be started using `--ftp 3921`, and/or `--ftps` for explicit TLS (ftpes)

|

||||

@@ -640,7 +710,9 @@ an FTP server can be started using `--ftp 3921`, and/or `--ftps` for explicit T

|

||||

|

||||

## file indexing

|

||||

|

||||

file indexing relies on two database tables, the up2k filetree (`-e2d`) and the metadata tags (`-e2t`), stored in `.hist/up2k.db`. Configuration can be done through arguments, volume flags, or a mix of both.

|

||||

enables dedup and music search ++

|

||||

|

||||

file indexing relies on two database tables, the up2k filetree (`-e2d`) and the metadata tags (`-e2t`), stored in `.hist/up2k.db`. Configuration can be done through arguments, volflags, or a mix of both.

|

||||

|

||||

through arguments:

|

||||

* `-e2d` enables file indexing on upload

|

||||

@@ -649,8 +721,11 @@ through arguments:

|

||||

* `-e2t` enables metadata indexing on upload

|

||||

* `-e2ts` also scans for tags in all files that don't have tags yet

|

||||

* `-e2tsr` also deletes all existing tags, doing a full reindex

|

||||

* `-e2v` verifies file integrity at startup, comparing hashes from the db

|

||||

* `-e2vu` patches the database with the new hashes from the filesystem

|

||||

* `-e2vp` panics and kills copyparty instead

|

||||

|

||||

the same arguments can be set as volume flags, in addition to `d2d`, `d2ds`, `d2t`, `d2ts` for disabling:

|

||||

the same arguments can be set as volflags, in addition to `d2d`, `d2ds`, `d2t`, `d2ts`, `d2v` for disabling:

|

||||

* `-v ~/music::r:c,e2dsa,e2tsr` does a full reindex of everything on startup

|

||||

* `-v ~/music::r:c,d2d` disables **all** indexing, even if any `-e2*` are on

|

||||

* `-v ~/music::r:c,d2t` disables all `-e2t*` (tags), does not affect `-e2d*`

|

||||

@@ -661,8 +736,11 @@ note:

|

||||

* the parser can finally handle `c,e2dsa,e2tsr` so you no longer have to `c,e2dsa:c,e2tsr`

|

||||

* `e2tsr` is probably always overkill, since `e2ds`/`e2dsa` would pick up any file modifications and `e2ts` would then reindex those, unless there is a new copyparty version with new parsers and the release note says otherwise

|

||||

* the rescan button in the admin panel has no effect unless the volume has `-e2ds` or higher

|

||||

* deduplication is possible on windows if you run copyparty as administrator (not saying you should!)

|

||||

|

||||

to save some time, you can provide a regex pattern for filepaths to only index by filename/path/size/last-modified (and not the hash of the file contents) by setting `--no-hash \.iso$` or the volume-flag `:c,nohash=\.iso$`, this has the following consequences:

|

||||

### exclude-patterns

|

||||

|

||||

to save some time, you can provide a regex pattern for filepaths to only index by filename/path/size/last-modified (and not the hash of the file contents) by setting `--no-hash \.iso$` or the volflag `:c,nohash=\.iso$`, this has the following consequences:

|

||||

* initial indexing is way faster, especially when the volume is on a network disk

|

||||

* makes it impossible to [file-search](#file-search)

|

||||

* if someone uploads the same file contents, the upload will not be detected as a dupe, so it will not get symlinked or rejected

|

||||

@@ -671,12 +749,29 @@ similarly, you can fully ignore files/folders using `--no-idx [...]` and `:c,noi

|

||||

|

||||

if you set `--no-hash [...]` globally, you can enable hashing for specific volumes using flag `:c,nohash=`

|

||||

|

||||

### filesystem guards

|

||||

|

||||

avoid traversing into other filesystems using `--xdev` / volflag `:c,xdev`, skipping any symlinks or bind-mounts to another HDD for example

|

||||

|

||||

and/or you can `--xvol` / `:c,xvol` to ignore all symlinks leaving the volume's top directory, but still allow bind-mounts pointing elsewhere

|

||||

|

||||

**NB: only affects the indexer** -- users can still access anything inside a volume, unless shadowed by another volume

|

||||

|

||||

### periodic rescan

|

||||

|

||||

filesystem monitoring; if copyparty is not the only software doing stuff on your filesystem, you may want to enable periodic rescans to keep the index up to date

|

||||

|

||||

argument `--re-maxage 60` will rescan all volumes every 60 sec, same as volflag `:c,scan=60` to specify it per-volume

|

||||

|

||||

uploads are disabled while a rescan is happening, so rescans will be delayed by `--db-act` (default 10 sec) when there is write-activity going on (uploads, renames, ...)

|

||||

|

||||

|

||||

## upload rules

|

||||

|

||||

set upload rules using volume flags, some examples:

|

||||

set upload rules using volflags, some examples:

|

||||

|

||||

* `:c,sz=1k-3m` sets allowed filesize between 1 KiB and 3 MiB inclusive (suffixes: `b`, `k`, `m`, `g`)

|

||||

* `:c,df=4g` block uploads if there would be less than 4 GiB free disk space afterwards

|

||||

* `:c,nosub` disallow uploading into subdirectories; goes well with `rotn` and `rotf`:

|

||||

* `:c,rotn=1000,2` moves uploads into subfolders, up to 1000 files in each folder before making a new one, two levels deep (must be at least 1)

|

||||

* `:c,rotf=%Y/%m/%d/%H` enforces files to be uploaded into a structure of subfolders according to that date format
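to get a feel for what the `sz` and `rotf` rules above express, here is a rough python sketch. it is not how copyparty implements them; the helper names (`parse_size`, `size_allowed`, `rotf_folder`) are made up for this example, and it assumes the suffixes are binary multiples (KiB/MiB/GiB) and that `rotf` uses regular strftime codes:

```python
import re
from datetime import datetime

# binary multipliers for the suffixes listed above (b, k, m, g)
UNITS = {"b": 1, "k": 1024, "m": 1024**2, "g": 1024**3}


def parse_size(text: str) -> int:
    """Convert a string such as '3m' into a number of bytes."""
    m = re.fullmatch(r"(\d+)([bkmg]?)", text.strip().lower())
    if not m:
        raise ValueError(f"unrecognized size: {text!r}")
    number, suffix = m.groups()
    return int(number) * UNITS[suffix or "b"]


def size_allowed(rule: str, nbytes: int) -> bool:
    """Check a file size against a 'min-max' rule; both bounds inclusive."""
    lo, hi = (parse_size(part) for part in rule.split("-", 1))
    return lo <= nbytes <= hi


def rotf_folder(fmt: str, when: datetime) -> str:
    """Expand a date format such as '%Y/%m/%d/%H' into a subfolder path."""
    return when.strftime(fmt)


print(size_allowed("1k-3m", 2048))  # a 2 KiB file fits inside 1 KiB .. 3 MiB
print(rotf_folder("%Y/%m/%d/%H", datetime(2022, 10, 9, 14, 30)))
```

with `sz=1k-3m` a 1023-byte file is too small and a file one byte over 3 MiB is too big, while an upload at 14:30 on 2022-10-09 would land in `2022/10/09/14`.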

|

||||

@@ -695,16 +790,16 @@ you can also set transaction limits which apply per-IP and per-volume, but these

|

||||

|

||||

files can be autocompressed on upload, either on user-request (if config allows) or forced by server-config

|

||||

|

||||

* volume flag `gz` allows gz compression

|

||||

* volume flag `xz` allows lzma compression

|

||||

* volume flag `pk` **forces** compression on all files

|

||||

* volflag `gz` allows gz compression

|

||||

* volflag `xz` allows lzma compression

|

||||

* volflag `pk` **forces** compression on all files

|

||||

* url parameter `pk` requests compression with server-default algorithm

|

||||

* url parameter `gz` or `xz` requests compression with a specific algorithm

|

||||

* url parameter `xz` requests xz compression

|

||||

|

||||

things to note,

|

||||

* the `gz` and `xz` arguments take a single optional argument, the compression level (range 0 to 9)

|

||||

* the `pk` volume flag takes the optional argument `ALGORITHM,LEVEL` which will then be forced for all uploads, for example `gz,9` or `xz,0`

|

||||

* the `pk` volflag takes the optional argument `ALGORITHM,LEVEL` which will then be forced for all uploads, for example `gz,9` or `xz,0`

|

||||

* default compression is gzip level 9

|

||||

* all upload methods except up2k are supported

|

||||

* the files will be indexed after compression, so dupe-detection and file-search will not work as expected
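as a rough illustration of the `ALGORITHM,LEVEL` argument described above, this python sketch compresses a buffer with either gzip or xz from the standard library. it is not copyparty's code; the function name `compress` is hypothetical, and falling back to level 9 when the level is left out is an assumption (the notes above only say that the default is gzip level 9):

```python
import gzip
import lzma


def compress(data: bytes, spec: str = "gz,9") -> bytes:
    """Compress `data` according to an 'ALGORITHM,LEVEL' string."""
    algo, _, level = spec.partition(",")
    lvl = int(level) if level else 9  # assumption: missing level means 9
    if not 0 <= lvl <= 9:
        raise ValueError("compression level must be in range 0 to 9")
    if algo == "gz":
        return gzip.compress(data, compresslevel=lvl)
    if algo == "xz":
        return lzma.compress(data, preset=lvl)
    raise ValueError(f"unknown algorithm: {algo!r}")


payload = b"copyparty " * 1000
packed = compress(payload, "xz,0")
```

since the stored bytes differ from what the client sent, a hash of `packed` will not match a hash of `payload`, which is why dupe-detection and file-search behave differently for compressed uploads.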

|

||||

@@ -718,13 +813,18 @@ some examples,

|

||||

allows (but does not force) gz compression if client uploads to `/inc?pk` or `/inc?gz` or `/inc?gz=4`

|

||||

|

||||

|

||||

## other flags

|

||||

|

||||

* `:c,magic` enables filetype detection for nameless uploads, same as `--magic`

|

||||

|

||||

|

||||

## database location

|

||||

|

||||

in-volume (`.hist/up2k.db`, default) or somewhere else

|

||||

|

||||

copyparty creates a subfolder named `.hist` inside each volume where it stores the database, thumbnails, and some other stuff

|

||||

|

||||

this can instead be kept in a single place using the `--hist` argument, or the `hist=` volume flag, or a mix of both:

|

||||

this can instead be kept in a single place using the `--hist` argument, or the `hist=` volflag, or a mix of both:

|

||||

* `--hist ~/.cache/copyparty -v ~/music::r:c,hist=-` sets `~/.cache/copyparty` as the default place to put volume info, but `~/music` gets the regular `.hist` subfolder (`-` restores default behavior)

|

||||

|

||||

note:

|

||||

@@ -757,21 +857,31 @@ see the beautiful mess of a dictionary in [mtag.py](https://github.com/9001/copy

|

||||

* avoids pulling any GPL code into copyparty

|

||||

* more importantly runs FFprobe on incoming files which is bad if your FFmpeg has a cve

|

||||

|

||||

`--mtag-to` sets the tag-scan timeout; very high default (60 sec) to cater for zfs and other randomly-freezing filesystems. Lower values like 10 are usually safe, allowing for faster processing of tricky files

|

||||

|

||||

|

||||

## file parser plugins

|

||||

|

||||

provide custom parsers to index additional tags, also see [./bin/mtag/README.md](./bin/mtag/README.md)

|

||||

provide custom parsers to index additional tags, also see [./bin/mtag/README.md](./bin/mtag/README.md)

|

||||

|

||||

copyparty can invoke external programs to collect additional metadata for files using `mtp` (either as argument or volume flag), there is a default timeout of 30sec

|

||||

copyparty can invoke external programs to collect additional metadata for files using `mtp` (either as argument or volflag), there is a default timeout of 60sec, and only files which contain audio get analyzed by default (see ay/an/ad below)

|

||||

|

||||

* `-mtp .bpm=~/bin/audio-bpm.py` will execute `~/bin/audio-bpm.py` with the audio file as argument 1 to provide the `.bpm` tag, if that does not exist in the audio metadata

|

||||

* `-mtp key=f,t5,~/bin/audio-key.py` uses `~/bin/audio-key.py` to get the `key` tag, replacing any existing metadata tag (`f,`), aborting if it takes longer than 5sec (`t5,`)

|

||||

* `-v ~/music::r:c,mtp=.bpm=~/bin/audio-bpm.py:c,mtp=key=f,t5,~/bin/audio-key.py` both as a per-volume config wow this is getting ugly

|

||||

|

||||

*but wait, there's more!* `-mtp` can be used for non-audio files as well using the `a` flag: `ay` only do audio files, `an` only do non-audio files, or `ad` do all files (d as in dontcare)

|

||||

*but wait, there's more!* `-mtp` can be used for non-audio files as well using the `a` flag: `ay` only do audio files (default), `an` only do non-audio files, or `ad` do all files (d as in dontcare)

|

||||

|

||||

* "audio file" also means videos btw, as long as there is an audio stream

|

||||

* `-mtp ext=an,~/bin/file-ext.py` runs `~/bin/file-ext.py` to get the `ext` tag only if file is not audio (`an`)

|

||||

* `-mtp arch,built,ver,orig=an,eexe,edll,~/bin/exe.py` runs `~/bin/exe.py` to get properties about windows-binaries only if file is not audio (`an`) and file extension is exe or dll

|

||||

* if you want to daisychain parsers, use the `p` flag to set processing order

|

||||

* `-mtp foo=p1,~/a.py` runs before `-mtp foo=p2,~/b.py` and will forward all the tags detected so far as json to the stdin of b.py

|

||||

* option `c0` disables capturing of stdout/stderr, so copyparty will not receive any tags from the process at all -- instead the invoked program is free to print whatever to the console, just using copyparty as a launcher

|

||||

* `c1` captures stdout only, `c2` only stderr, and `c3` (default) captures both

|

||||

* you can control how the parser is killed if it times out with option `kt` killing the entire process tree (default), `km` just the main process, or `kn` let it continue running until copyparty is terminated

|

||||

|

||||

if something doesn't work, try `--mtag-v` for verbose error messages
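a parser in the spirit of the `file-ext.py` example above could look roughly like this. treat it as a sketch: it assumes the tag value is read from the program's stdout (the `c0` note above suggests tags come from the captured output, but check [./bin/mtag/README.md](./bin/mtag/README.md) for the real contract), and the helper name `file_ext` is made up:

```python
#!/usr/bin/env python3
import os
import sys


def file_ext(path: str) -> str:
    """Return the lowercase extension without the dot, or '' if none."""
    return os.path.splitext(path)[1].lstrip(".").lower()


if __name__ == "__main__" and len(sys.argv) > 1:
    # the file to analyze arrives as argument 1;
    # assumption: whatever is printed to stdout becomes the tag value
    print(file_ext(sys.argv[1]))
```

it would then be hooked up the same way as in the list above, for example `-mtp ext=an,~/bin/file-ext.py`.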

|

||||

|

||||

|

||||

## upload events

|

||||

@@ -779,16 +889,16 @@ copyparty can invoke external programs to collect additional metadata for files

|

||||

trigger a script/program on each upload like so:

|

||||

|

||||

```

|

||||

-v /mnt/inc:inc:w:c,mte=+a1:c,mtp=a1=ad,/usr/bin/notify-send

|

||||

-v /mnt/inc:inc:w:c,mte=+x1:c,mtp=x1=ad,kn,/usr/bin/notify-send

|

||||

```

|

||||

|

||||

so filesystem location `/mnt/inc` shared at `/inc`, write-only for everyone, appending `a1` to the list of tags to index, and using `/usr/bin/notify-send` to "provide" that tag

|

||||

so filesystem location `/mnt/inc` shared at `/inc`, write-only for everyone, appending `x1` to the list of tags to index (`mte`), and using `/usr/bin/notify-send` to "provide" tag `x1` for any filetype (`ad`) with kill-on-timeout disabled (`kn`)

|

||||

|

||||

that'll run the command `notify-send` with the path to the uploaded file as the first and only argument (so on linux it'll show a notification on-screen)

|

||||

|

||||

note that it will only trigger on new unique files, not dupes

|

||||

|

||||

and it will occupy the parsing threads, so fork anything expensive, or if you want to intentionally queue/singlethread you can combine it with `--mtag-mt 1`

|

||||

and it will occupy the parsing threads, so fork anything expensive (or set `kn` to have copyparty fork it for you) -- otoh if you want to intentionally queue/singlethread you can combine it with `--mtag-mt 1`

|

||||

|

||||

if this becomes popular maybe there should be a less janky way to do it actually

|

||||

|

||||

@@ -798,16 +908,48 @@ if this becomes popular maybe there should be a less janky way to do it actually

|

||||

tell search engines you don't wanna be indexed, either using the good old [robots.txt](https://www.robotstxt.org/robotstxt.html) or through copyparty settings:

|

||||

|

||||

* `--no-robots` adds HTTP (`X-Robots-Tag`) and HTML (`<meta>`) headers with `noindex, nofollow` globally

|

||||

* volume-flag `[...]:c,norobots` does the same thing for that single volume

|

||||

* volume-flag `[...]:c,robots` ALLOWS search-engine crawling for that volume, even if `--no-robots` is set globally

|

||||

* volflag `[...]:c,norobots` does the same thing for that single volume

|

||||

* volflag `[...]:c,robots` ALLOWS search-engine crawling for that volume, even if `--no-robots` is set globally

|

||||

|

||||

also, `--force-js` disables the plain HTML folder listing, making things harder to parse for search engines

|

||||

|

||||

|

||||

## themes

|

||||

|

||||

you can change the default theme with `--theme 2`, and add your own themes by modifying `browser.css` or providing your own css to `--css-browser`, then telling copyparty they exist by increasing `--themes`

|

||||

|

||||

<table><tr><td width="33%" align="center"><a href="https://user-images.githubusercontent.com/241032/165864907-17e2ac7d-319d-4f25-8718-2f376f614b51.png"><img src="https://user-images.githubusercontent.com/241032/165867551-fceb35dd-38f0-42bb-bef3-25ba651ca69b.png"></a>

|

||||

0. classic dark</td><td width="33%" align="center"><a href="https://user-images.githubusercontent.com/241032/168644399-68938de5-da9b-445f-8d92-b51c74b5f345.png"><img src="https://user-images.githubusercontent.com/241032/168644404-8e1a2fdc-6e59-4c41-905e-ba5399ed686f.png"></a>

|

||||

2. flat pm-monokai</td><td width="33%" align="center"><a href="https://user-images.githubusercontent.com/241032/165864901-db13a429-a5da-496d-8bc6-ce838547f69d.png"><img src="https://user-images.githubusercontent.com/241032/165867560-aa834aef-58dc-4abe-baef-7e562b647945.png"></a>

|

||||

4. vice</td></tr><tr><td align="center"><a href="https://user-images.githubusercontent.com/241032/165864905-692682eb-6fb4-4d40-b6fe-27d2c7d3e2a7.png"><img src="https://user-images.githubusercontent.com/241032/165867555-080b73b6-6d85-41bb-a7c6-ad277c608365.png"></a>

|

||||

1. classic light</td><td align="center"><a href="https://user-images.githubusercontent.com/241032/168645276-fb02fd19-190a-407a-b8d3-d58fee277e02.png"><img src="https://user-images.githubusercontent.com/241032/168645280-f0662b3c-9764-4875-a2e2-d91cc8199b23.png"></a>

|

||||

3. flat light

|

||||

</td><td align="center"><a href="https://user-images.githubusercontent.com/241032/165864898-10ce7052-a117-4fcf-845b-b56c91687908.png"><img src="https://user-images.githubusercontent.com/241032/165867562-f3003d45-dd2a-4564-8aae-fed44c1ae064.png"></a>

|

||||

5. <a href="https://blog.codinghorror.com/a-tribute-to-the-windows-31-hot-dog-stand-color-scheme/">hotdog stand</a></td></tr></table>

|

||||

|

||||

the classname of the HTML tag is set according to the selected theme, which is used to set colors as css variables ++

|

||||

|

||||

* each theme *generally* has a dark theme (even numbers) and a light theme (odd numbers), showing in pairs

|

||||

* the first theme (theme 0 and 1) is `html.a`, second theme (2 and 3) is `html.b`

|

||||

* if a light theme is selected, `html.y` is set, otherwise `html.z` is

|

||||

* so if the dark edition of the 2nd theme is selected, you use any of `html.b`, `html.z`, `html.bz` to specify rules
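the numbering scheme above can be written down as a tiny python function. this is only a sketch of the naming rule as described here, not code from copyparty; the function name `theme_classes` is hypothetical, and continuing the letters past `b` for higher theme numbers is an assumption (the list says themes only *generally* come in dark/light pairs):

```python
def theme_classes(theme: int) -> list:
    """Return the html classnames usable in css rules for a theme number."""
    family = chr(ord("a") + theme // 2)  # themes 0,1 -> a; themes 2,3 -> b
    shade = "y" if theme % 2 else "z"    # odd = light (y), even = dark (z)
    return [family, shade, family + shade]


for n in range(4):
    print(n, ["html." + c for c in theme_classes(n)])
```

for theme 2 (the dark edition of the 2nd theme) this gives `html.b`, `html.z` and `html.bz`, matching the example above.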

|

||||

|

||||

see the top of [./copyparty/web/browser.css](./copyparty/web/browser.css) where the color variables are set, and there's layout-specific stuff near the bottom

|

||||

|

||||

|

||||

## complete examples

|

||||

|

||||

* read-only music server with bpm and key scanning

|

||||

`python copyparty-sfx.py -v /mnt/nas/music:/music:r -e2dsa -e2ts -mtp .bpm=f,audio-bpm.py -mtp key=f,audio-key.py`

|

||||

* read-only music server

|

||||

`python copyparty-sfx.py -v /mnt/nas/music:/music:r -e2dsa -e2ts --no-robots --force-js --theme 2`

|

||||

|

||||

* ...with bpm and key scanning

|

||||

`-mtp .bpm=f,audio-bpm.py -mtp key=f,audio-key.py`

|

||||

|

||||

* ...with a read-write folder for `kevin` whose password is `okgo`

|

||||

`-a kevin:okgo -v /mnt/nas/inc:/inc:rw,kevin`

|

||||

|

||||

* ...with logging to disk

|

||||

`-lo log/cpp-%Y-%m%d-%H%M%S.txt.xz`

|

||||

|

||||

|

||||

# browser support

|

||||

@@ -853,7 +995,8 @@ quick summary of more eccentric web-browsers trying to view a directory index:

|

||||

| **w3m** (0.5.3/macports) | can browse, login, upload at 100kB/s, mkdir/msg |

|

||||

| **netsurf** (3.10/arch) | is basically ie6 with much better css (javascript has almost no effect) |

|

||||

| **opera** (11.60/winxp) | OK: thumbnails, image-viewer, zip-selection, rename/cut/paste. NG: up2k, navpane, markdown, audio |

|

||||

| **ie4** and **netscape** 4.0 | can browse, upload with `?b=u` |

|

||||

| **ie4** and **netscape** 4.0 | can browse, upload with `?b=u`, auth with `&pw=wark` |

|

||||

| **ncsa mosaic** 2.7 | does not get a pass, [pic1](https://user-images.githubusercontent.com/241032/174189227-ae816026-cf6f-4be5-a26e-1b3b072c1b2f.png) - [pic2](https://user-images.githubusercontent.com/241032/174189225-5651c059-5152-46e9-ac26-7e98e497901b.png) |

|

||||

| **SerenityOS** (7e98457) | hits a page fault, works with `?b=u`, file upload not-impl |

|

||||

|

||||

|

||||

@@ -866,7 +1009,9 @@ interact with copyparty using non-browser clients

|

||||

* `var xhr = new XMLHttpRequest(); xhr.open('POST', '//127.0.0.1:3923/msgs?raw'); xhr.send('foo');`

|

||||

|

||||

* curl/wget: upload some files (post=file, chunk=stdin)

|

||||

* `post(){ curl -b cppwd=wark -F act=bput -F f=@"$1" http://127.0.0.1:3923/;}`

|

||||

* `post(){ curl -F act=bput -F f=@"$1" http://127.0.0.1:3923/?pw=wark;}`

|

||||

`post movie.mkv`

|

||||

* `post(){ curl -b cppwd=wark -H rand:8 -T "$1" http://127.0.0.1:3923/;}`

|

||||

`post movie.mkv`

|

||||

* `post(){ wget --header='Cookie: cppwd=wark' --post-file="$1" -O- http://127.0.0.1:3923/?raw;}`

|

||||

`post movie.mkv`

|

||||

@@ -892,7 +1037,9 @@ copyparty returns a truncated sha512sum of your PUT/POST as base64; you can gene

|

||||

b512(){ printf "$((sha512sum||shasum -a512)|sed -E 's/ .*//;s/(..)/\\x\1/g')"|base64|tr '+/' '-_'|head -c44;}

|

||||

b512 <movie.mkv

|

||||

|

||||

you can provide passwords using cookie `cppwd=hunter2`, as a url query `?pw=hunter2`, or with basic-authentication (either as the username or password)

|

||||

you can provide passwords using cookie `cppwd=hunter2`, as a url-param `?pw=hunter2`, or with basic-authentication (either as the username or password)

|

||||

|

||||

NOTE: curl will not send the original filename if you use `-T` combined with url-params! Also, make sure to always leave a trailing slash in URLs unless you want to override the filename
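if you would rather compute the checksum without a shell, the `b512` helper above translates fairly directly to python: sha512 the data, base64-encode the raw digest with `-_` in place of `+/`, and keep the first 44 characters. the function below is a sketch of that same pipeline; it has not been checked against a live server, and the name `b512` is just borrowed from the shell function:

```python
import base64
import hashlib
import io


def b512(stream, bufsize: int = 1024 * 1024) -> str:
    """Truncated url-safe base64 of the sha512 of a binary stream."""
    hasher = hashlib.sha512()
    # read in blocks so large files do not need to fit in ram
    for block in iter(lambda: stream.read(bufsize), b""):
        hasher.update(block)
    encoded = base64.urlsafe_b64encode(hasher.digest())
    return encoded.decode("ascii")[:44]


# in-memory example; for a real file use: with open("movie.mkv", "rb") as f: b512(f)
print(b512(io.BytesIO(b"hello world")))
```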

|

||||

|

||||

|

||||

# up2k

|

||||

@@ -910,19 +1057,33 @@ quick outline of the up2k protocol, see [uploading](#uploading) for the web-clie

|

||||

* server writes chunks into place based on the hash

|

||||

* client does another handshake with the hashlist; server replies with OK or a list of chunks to reupload

|

||||

|

||||

up2k has saved a few uploads from becoming corrupted in-transfer already; caught an android phone on wifi redhanded in wireshark with a bitflip, however bup with https would *probably* have noticed as well (thanks to tls also functioning as an integrity check)

|

||||

up2k has saved a few uploads from becoming corrupted in-transfer already;

|

||||

* caught an android phone on wifi redhanded in wireshark with a bitflip, however bup with https would *probably* have noticed as well (thanks to tls also functioning as an integrity check)

|

||||

* also stopped someone from uploading because their ram was bad

|

||||

|

||||

regarding the frequent server log message during uploads;

|

||||

`6.0M 106M/s 2.77G 102.9M/s n948 thank 4/0/3/1 10042/7198 00:01:09`

|

||||

* this chunk was `6 MiB`, uploaded at `106 MiB/s`

|

||||

* on this http connection, `2.77 GiB` transferred, `102.9 MiB/s` average, `948` chunks handled

|

||||

* client says `4` uploads OK, `0` failed, `3` busy, `1` queued, `10042 MiB` total size, `7198 MiB` and `00:01:09` left
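if you want to pull numbers out of that log message (for graphing, say), the fields can be split apart as sketched below. the field layout is taken from the explanation above; the function name `parse_up2k_log` and the dictionary keys are invented for this example, and real log lines may carry extra prefixes (timestamps, ip) that would need stripping first:

```python
def parse_up2k_log(line: str) -> dict:
    """Split one up2k progress message into named fields."""
    (chunk, chunk_speed, conn_total, conn_avg,
     nchunks, _thank, counts, sizes, eta) = line.split()
    ok, failed, busy, queued = (int(x) for x in counts.split("/"))
    total_mib, left_mib = (int(x) for x in sizes.split("/"))
    return {
        "chunk_size": chunk,           # size of this chunk
        "chunk_speed": chunk_speed,    # speed of this chunk
        "conn_total": conn_total,      # transferred on this http connection
        "conn_avg_speed": conn_avg,    # average speed on this connection
        "chunks_handled": int(nchunks.lstrip("n")),
        "ok": ok, "failed": failed, "busy": busy, "queued": queued,
        "total_mib": total_mib,
        "left_mib": left_mib,
        "eta": eta,
    }


info = parse_up2k_log(
    "6.0M 106M/s 2.77G 102.9M/s n948 thank 4/0/3/1 10042/7198 00:01:09"
)
```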

|

||||

|

||||

|

||||

## why chunk-hashes

|

||||

|

||||

a single sha512 would be better, right?

|

||||

|

||||

this is due to `crypto.subtle` not providing a streaming api (or the option to seed the sha512 hasher with a starting hash)

|

||||

this is due to `crypto.subtle` [not yet](https://github.com/w3c/webcrypto/issues/73) providing a streaming api (or the option to seed the sha512 hasher with a starting hash)

|

||||

|

||||

as a result, the hashes are much less useful than they could have been (search the server by sha512, provide the sha512 in the response http headers, ...)
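to make the tradeoff concrete, here is a small python sketch of per-chunk hashing: each chunk gets its own independent sha512, so no hasher state has to be carried from one chunk to the next. it only illustrates the idea; the chunk size, the hex encoding and the function name `chunk_hashes` are assumptions and do not describe the actual up2k wire format:

```python
import hashlib


def chunk_hashes(data: bytes, chunk_size: int) -> list:
    """Hash every chunk on its own; no state is shared between chunks."""
    return [
        hashlib.sha512(data[pos:pos + chunk_size]).hexdigest()
        for pos in range(0, len(data), chunk_size)
    ]


data = b"a" * 10
hashes = chunk_hashes(data, 4)             # chunks of 4, 4 and 2 bytes
whole = hashlib.sha512(data).hexdigest()   # the single hash you might prefer
```

none of the entries in `hashes` equals `whole`, and there is no cheap way to derive `whole` from them, which is why the chunk hashes are less useful than a single sha512 of the entire file would have been.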

|

||||

|

||||