Compare commits

146 Commits

| Author | SHA1 | Date |
|---|---|---|
| | cafe53c055 | |
| | 7673beef72 | |
| | b28bfe64c0 | |
| | 135ece3fbd | |
| | bd3640d256 | |
| | fc0405c8f3 | |
| | 7df890d964 | |
| | 8341041857 | |
| | 1b7634932d | |
| | 48a3898aa6 | |
| | 5d13ebb4ac | |
| | 015b87ee99 | |
| | 0a48acf6be | |
| | 2b6a3afd38 | |
| | 18aa82fb2f | |
| | f5407b2997 | |
| | 474d5a155b | |
| | afcd98b794 | |
| | 4f80e44ff7 | |
| | 406e413594 | |
| | 033b50ae1b | |
| | bee26e853b | |
| | 04a1f7040e | |
| | f9d5bb3b29 | |
| | ca0cd04085 | |
| | 999ee2e7bc | |
| | 1ff7f968e8 | |
| | 3966266207 | |
| | d03e96a392 | |
| | 4c843c6df9 | |
| | 0896c5295c | |
| | cc0c9839eb | |
| | d0aa20e17c | |
| | 1a658dedb7 | |
| | 8d376b854c | |
| | 490c16b01d | |
| | 2437a4e864 | |
| | 007d948cb9 | |
| | 335fcc8535 | |
| | 9eaa9904e0 | |
| | 0778da6c4d | |
| | a1bb10012d | |
| | 1441ccee4f | |
| | 491803d8b7 | |
| | 3dcc386b6f | |
| | 5aa54d1217 | |
| | 88b876027c | |
| | fcc3aa98fd | |
| | f2f5e266b4 | |
| | e17bf8f325 | |
| | d19cb32bf3 | |
| | 85a637af09 | |
| | 043e3c7dd6 | |
| | 8f59afb159 | |
| | 77f1e51444 | |
| | 22fc4bb938 | |
| | 50c7bba6ea | |
| | 551d99b71b | |
| | b54b7213a7 | |
| | a14943c8de | |
| | a10cad54fc | |
| | 8568b7702a | |
| | 5d8cb34885 | |
| | 8d248333e8 | |
| | 99e2ef7f33 | |
| | e767230383 | |
| | 90601314d6 | |
| | 9c5eac1274 | |
| | 50905439e4 | |
| | a0c1239246 | |
| | b8e851c332 | |
| | baaf2eb24d | |
| | e197895c10 | |
| | cb75efa05d | |
| | 8b0cf2c982 | |
| | fc7d9e1f9c | |
| | 10caafa34c | |
| | 22cc22225a | |
| | 22dff4b0e5 | |
| | a00ff2b086 | |
| | e4acddc23b | |
| | 2b2d8e4e02 | |
| | 5501d49032 | |
| | fa54b2eec4 | |
| | cb0160021f | |
| | 93a723d588 | |
| | 8ebe1fb5e8 | |
| | 2acdf685b1 | |
| | 9f122ccd16 | |
| | 03be26fafc | |
| | df5d309d6e | |
| | c355f9bd91 | |
| | 9c28ba417e | |
| | 705b58c741 | |
| | 510302d667 | |
| | 025a537413 | |
| | 60a1ff0fc0 | |
| | f94a0b1bff | |
| | 4ccfeeb2cd | |
| | 2646f6a4f2 | |
| | b286ab539e | |
| | 2cca6e0922 | |
| | db51f1b063 | |
| | d979c47f50 | |
| | e64b87b99b | |
| | b985011a00 | |
| | c2ed2314c8 | |
| | cd496658c3 | |
| | deca082623 | |
| | 0ea8bb7c83 | |
| | 1fb251a4c2 | |
| | 4295923b76 | |
| | 572aa4b26c | |
| | b1359f039f | |
| | 867d8ee49e | |
| | 04c86e8a89 | |
| | bc0cb43ef9 | |
| | 769454fdce | |
| | 4ee81af8f6 | |
| | 8b0e66122f | |
| | 8a98efb929 | |
| | b6fd555038 | |
| | 7eb413ad51 | |
| | 4421d509eb | |
| | 793ffd7b01 | |
| | 1e22222c60 | |
| | 544e0549bc | |
| | 83178d0836 | |
| | c44f5f5701 | |
| | 138f5bc989 | |
| | e4759f86ef | |
| | d71416437a | |
| | a84c583b2c | |
| | cdacdccdb8 | |
| | d3ccd3f174 | |
| | cb6de0387d | |
| | abff40519d | |
| | 55c74ad164 | |
| | 673b4f7e23 | |
| | d11e02da49 | |
| | 8790f89e08 | |
| | 33442026b8 | |
| | 03193de6d0 | |
| | 8675ff40f3 | |
| | d88889d3fc | |
| | 6f244d4335 | |

2

.github/pull_request_template.md

vendored

@@ -1,2 +1,2 @@

|

||||

Please include the following text somewhere in this PR description:

|

||||

To show that your contribution is compatible with the MIT License, please include the following text somewhere in this PR description:

|

||||

This PR complies with the DCO; https://developercertificate.org/

|

||||

|

||||

1

.gitignore

vendored

@@ -37,6 +37,7 @@ up.*.txt

|

||||

.hist/

|

||||

scripts/docker/*.out

|

||||

scripts/docker/*.err

|

||||

/perf.*

|

||||

|

||||

# nix build output link

|

||||

result

|

||||

|

||||

10

.vscode/launch.py

vendored

@@ -30,9 +30,17 @@ except:

|

||||

|

||||

argv = [os.path.expanduser(x) if x.startswith("~") else x for x in argv]

|

||||

|

||||

sfx = ""

|

||||

if len(sys.argv) > 1 and os.path.isfile(sys.argv[1]):

|

||||

sfx = sys.argv[1]

|

||||

sys.argv = [sys.argv[0]] + sys.argv[2:]

|

||||

|

||||

argv += sys.argv[1:]

|

||||

|

||||

if re.search(" -j ?[0-9]", " ".join(argv)):

|

||||

if sfx:

|

||||

argv = [sys.executable, sfx] + argv

|

||||

sp.check_call(argv)

|

||||

elif re.search(" -j ?[0-9]", " ".join(argv)):

|

||||

argv = [sys.executable, "-m", "copyparty"] + argv

|

||||

sp.check_call(argv)

|

||||

else:

|

||||

|

||||

32

.vscode/settings.json

vendored

@@ -35,34 +35,18 @@

|

||||

"python.linting.flake8Enabled": true,

|

||||

"python.linting.banditEnabled": true,

|

||||

"python.linting.mypyEnabled": true,

|

||||

"python.linting.mypyArgs": [

|

||||

"--ignore-missing-imports",

|

||||

"--follow-imports=silent",

|

||||

"--show-column-numbers",

|

||||

"--strict"

|

||||

],

|

||||

"python.linting.flake8Args": [

|

||||

"--max-line-length=120",

|

||||

"--ignore=E722,F405,E203,W503,W293,E402,E501,E128",

|

||||

"--ignore=E722,F405,E203,W503,W293,E402,E501,E128,E226",

|

||||

],

|

||||

"python.linting.banditArgs": [

|

||||

"--ignore=B104"

|

||||

],

|

||||

"python.linting.pylintArgs": [

|

||||

"--disable=missing-module-docstring",

|

||||

"--disable=missing-class-docstring",

|

||||

"--disable=missing-function-docstring",

|

||||

"--disable=import-outside-toplevel",

|

||||

"--disable=wrong-import-position",

|

||||

"--disable=raise-missing-from",

|

||||

"--disable=bare-except",

|

||||

"--disable=broad-except",

|

||||

"--disable=invalid-name",

|

||||

"--disable=line-too-long",

|

||||

"--disable=consider-using-f-string"

|

||||

"--ignore=B104,B110,B112"

|

||||

],

|

||||

// python3 -m isort --py=27 --profile=black copyparty/

|

||||

"python.formatting.provider": "black",

|

||||

"python.formatting.provider": "none",

|

||||

"[python]": {

|

||||

"editor.defaultFormatter": "ms-python.black-formatter"

|

||||

},

|

||||

"editor.formatOnSave": true,

|

||||

"[html]": {

|

||||

"editor.formatOnSave": false,

|

||||

@@ -74,10 +58,6 @@

|

||||

"files.associations": {

|

||||

"*.makefile": "makefile"

|

||||

},

|

||||

"python.formatting.blackArgs": [

|

||||

"-t",

|

||||

"py27"

|

||||

],

|

||||

"python.linting.enabled": true,

|

||||

"python.pythonPath": "/usr/bin/python3"

|

||||

}

|

||||

195

README.md

@@ -41,6 +41,7 @@ turn almost any device into a file server with resumable uploads/downloads using

|

||||

* [batch rename](#batch-rename) - select some files and press `F2` to bring up the rename UI

|

||||

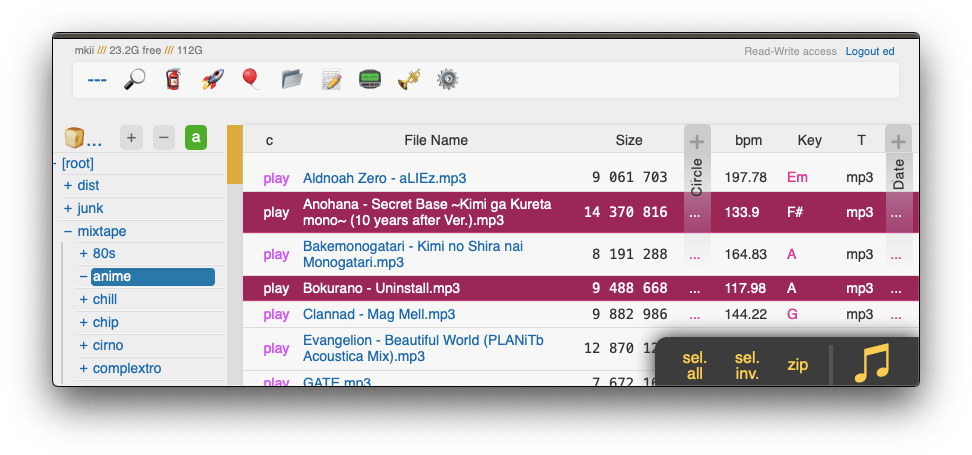

* [media player](#media-player) - plays almost every audio format there is

|

||||

* [audio equalizer](#audio-equalizer) - bass boosted

|

||||

* [fix unreliable playback on android](#fix-unreliable-playback-on-android) - due to phone / app settings

|

||||

* [markdown viewer](#markdown-viewer) - and there are *two* editors

|

||||

* [other tricks](#other-tricks)

|

||||

* [searching](#searching) - search by size, date, path/name, mp3-tags, ...

|

||||

@@ -65,10 +66,15 @@ turn almost any device into a file server with resumable uploads/downloads using

|

||||

* [file parser plugins](#file-parser-plugins) - provide custom parsers to index additional tags

|

||||

* [event hooks](#event-hooks) - trigger a program on uploads, renames etc ([examples](./bin/hooks/))

|

||||

* [upload events](#upload-events) - the older, more powerful approach ([examples](./bin/mtag/))

|

||||

* [handlers](#handlers) - redefine behavior with plugins ([examples](./bin/handlers/))

|

||||

* [hiding from google](#hiding-from-google) - tell search engines you don't wanna be indexed

|

||||

* [themes](#themes)

|

||||

* [complete examples](#complete-examples)

|

||||

* [reverse-proxy](#reverse-proxy) - running copyparty next to other websites

|

||||

* [prometheus](#prometheus) - metrics/stats can be enabled

|

||||

* [packages](#packages) - the party might be closer than you think

|

||||

* [arch package](#arch-package) - now [available on aur](https://aur.archlinux.org/packages/copyparty) maintained by [@icxes](https://github.com/icxes)

|

||||

* [fedora package](#fedora-package) - now [available on copr-pypi](https://copr.fedorainfracloud.org/coprs/g/copr/PyPI/)

|

||||

* [nix package](#nix-package) - `nix profile install github:9001/copyparty`

|

||||

* [nixos module](#nixos-module)

|

||||

* [browser support](#browser-support) - TLDR: yes

|

||||

@@ -79,9 +85,10 @@ turn almost any device into a file server with resumable uploads/downloads using

|

||||

* [iOS shortcuts](#iOS-shortcuts) - there is no iPhone app, but

|

||||

* [performance](#performance) - defaults are usually fine - expect `8 GiB/s` download, `1 GiB/s` upload

|

||||

* [client-side](#client-side) - when uploading files

|

||||

* [security](#security) - some notes on hardening

|

||||

* [security](#security) - there is a [discord server](https://discord.gg/25J8CdTT6G)

|

||||

* [gotchas](#gotchas) - behavior that might be unexpected

|

||||

* [cors](#cors) - cross-site request config

|

||||

* [password hashing](#password-hashing) - you can hash passwords

|

||||

* [https](#https) - both HTTP and HTTPS are accepted

|

||||

* [recovering from crashes](#recovering-from-crashes)

|

||||

* [client crashes](#client-crashes)

|

||||

@@ -101,9 +108,9 @@ turn almost any device into a file server with resumable uploads/downloads using

|

||||

|

||||

just run **[copyparty-sfx.py](https://github.com/9001/copyparty/releases/latest/download/copyparty-sfx.py)** -- that's it! 🎉

|

||||

|

||||

* or install through pypi (python3 only): `python3 -m pip install --user -U copyparty`

|

||||

* or install through pypi: `python3 -m pip install --user -U copyparty`

|

||||

* or if you cannot install python, you can use [copyparty.exe](#copypartyexe) instead

|

||||

* or [install through nix](#nix-package), or [on NixOS](#nixos-module)

|

||||

* or install [on arch](#arch-package) ╱ [on fedora](#fedora-package) ╱ [on NixOS](#nixos-module) ╱ [through nix](#nix-package)

|

||||

* or if you are on android, [install copyparty in termux](#install-on-android)

|

||||

* or if you prefer to [use docker](./scripts/docker/) 🐋 you can do that too

|

||||

* docker has all deps built-in, so skip this step:

|

||||

@@ -123,7 +130,7 @@ enable thumbnails (images/audio/video), media indexing, and audio transcoding by

|

||||

|

||||

running copyparty without arguments (for example doubleclicking it on Windows) will give everyone read/write access to the current folder; you may want [accounts and volumes](#accounts-and-volumes)

|

||||

|

||||

or see [complete windows example](./docs/examples/windows.md)

|

||||

or see [some usage examples](#complete-examples) for inspiration, or the [complete windows example](./docs/examples/windows.md)

|

||||

|

||||

some recommended options:

|

||||

* `-e2dsa` enables general [file indexing](#file-indexing)

|

||||

@@ -275,6 +282,8 @@ server notes:

|

||||

|

||||

* [Firefox issue 1790500](https://bugzilla.mozilla.org/show_bug.cgi?id=1790500) -- entire browser can crash after uploading ~4000 small files

|

||||

|

||||

* Android: music playback randomly stops due to [battery usage settings](#fix-unreliable-playback-on-android)

|

||||

|

||||

* iPhones: the volume control doesn't work because [apple doesn't want it to](https://developer.apple.com/library/archive/documentation/AudioVideo/Conceptual/Using_HTML5_Audio_Video/Device-SpecificConsiderations/Device-SpecificConsiderations.html#//apple_ref/doc/uid/TP40009523-CH5-SW11)

|

||||

* *future workaround:* enable the equalizer, make it all-zero, and set a negative boost to reduce the volume

|

||||

* "future" because `AudioContext` can't maintain a stable playback speed in the current iOS version (15.7), maybe one day...

|

||||

@@ -287,6 +296,7 @@ server notes:

|

||||

|

||||

* VirtualBox: sqlite throws `Disk I/O Error` when running in a VM and the up2k database is in a vboxsf

|

||||

* use `--hist` or the `hist` volflag (`-v [...]:c,hist=/tmp/foo`) to place the db inside the vm instead

|

||||

* also happens on mergerfs, so put the db elsewhere

|

||||

|

||||

* Ubuntu: dragging files from certain folders into firefox or chrome is impossible

|

||||

* due to snap security policies -- see `snap connections firefox` for the allowlist, `removable-media` permits all of `/mnt` and `/media` apparently

|

||||

@@ -300,7 +310,7 @@ upgrade notes

|

||||

* http-api: delete/move is now `POST` instead of `GET`

|

||||

* everything other than `GET` and `HEAD` must pass [cors validation](#cors)

|

||||

* `1.5.0` (2022-12-03): [new chunksize formula](https://github.com/9001/copyparty/commit/54e1c8d261df) for files larger than 128 GiB

|

||||

* **users:** upgrade to the latest [cli uploader](https://github.com/9001/copyparty/blob/hovudstraum/bin/up2k.py) if you use that

|

||||

* **users:** upgrade to the latest [cli uploader](https://github.com/9001/copyparty/blob/hovudstraum/bin/u2c.py) if you use that

|

||||

* **devs:** update third-party up2k clients (if those even exist)

|

||||

|

||||

|

||||

@@ -319,7 +329,7 @@ upgrade notes

|

||||

# accounts and volumes

|

||||

|

||||

per-folder, per-user permissions - if your setup is getting complex, consider making a [config file](./docs/example.conf) instead of using arguments

|

||||

* much easier to manage, and you can modify the config at runtime with `systemctl reload copyparty` or more conveniently using the `[reload cfg]` button in the control-panel (if logged in as admin)

|

||||

* much easier to manage, and you can modify the config at runtime with `systemctl reload copyparty` or more conveniently using the `[reload cfg]` button in the control-panel (if the user has `a`/admin in any volume)

|

||||

* changes to the `[global]` config section require a restart to take effect

|

||||

|

||||

a quick summary can be seen using `--help-accounts`

|

||||

@@ -338,6 +348,7 @@ permissions:

|

||||

* `d` (delete): delete files/folders

|

||||

* `g` (get): only download files, cannot see folder contents or zip/tar

|

||||

* `G` (upget): same as `g` except uploaders get to see their own filekeys (see `fk` in examples below)

|

||||

* `a` (admin): can see uploader IPs, config-reload

|

||||

|

||||

examples:

|

||||

* add accounts named u1, u2, u3 with passwords p1, p2, p3: `-a u1:p1 -a u2:p2 -a u3:p3`

|

||||

@@ -466,6 +477,7 @@ click the `🌲` or pressing the `B` hotkey to toggle between breadcrumbs path (

|

||||

## thumbnails

|

||||

|

||||

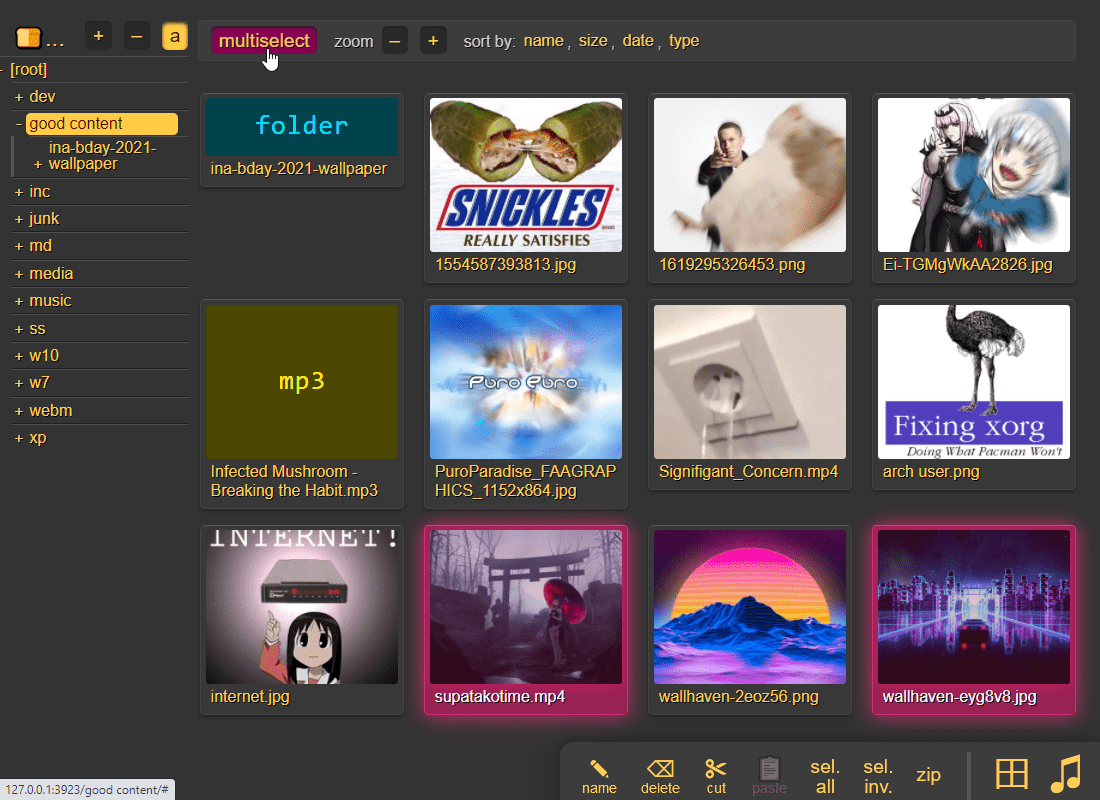

press `g` or `田` to toggle grid-view instead of the file listing and `t` toggles icons / thumbnails

|

||||

* can be made default globally with `--grid` or per-volume with volflag `grid`

|

||||

|

||||

|

||||

|

||||

@@ -476,10 +488,14 @@ it does static images with Pillow / pyvips / FFmpeg, and uses FFmpeg for video f

|

||||

audio files are converted into spectrograms using FFmpeg unless you `--no-athumb` (and some FFmpeg builds may need `--th-ff-swr`)

|

||||

|

||||

images with the following names (see `--th-covers`) become the thumbnail of the folder they're in: `folder.png`, `folder.jpg`, `cover.png`, `cover.jpg`

|

||||

* and, if you enable [file indexing](#file-indexing), all remaining folders will also get thumbnails (as long as they contain any pics at all)

|

||||

|

||||

in the grid/thumbnail view, if the audio player panel is open, songs will start playing when clicked

|

||||

* indicated by the audio files having the ▶ icon instead of 💾

|

||||

|

||||

enabling `multiselect` lets you click files to select them, and then shift-click another file for range-select

|

||||

* `multiselect` is mostly intended for phones/tablets, but the `sel` option in the `[⚙️] settings` tab is better suited for desktop use, allowing selection by CTRL-clicking and range-selection with SHIFT-click, all without affecting regular clicking

|

||||

|

||||

|

||||

## zip downloads

|

||||

|

||||

@@ -504,10 +520,14 @@ you can also zip a selection of files or folders by clicking them in the browser

|

||||

|

||||

|

||||

|

||||

cool trick: download a folder by appending url-params `?tar&opus` to transcode all audio files (except aac|m4a|mp3|ogg|opus|wma) to opus before they're added to the archive

|

||||

* super useful if you're 5 minutes away from takeoff and realize you don't have any music on your phone but your server only has flac files and downloading those will burn through all your data + there wouldn't be enough time anyways

|

||||

* and url-params `&j` / `&w` produce jpeg/webm thumbnails/spectrograms instead of the original audio/video/images

|

||||

|

||||

|

||||

## uploading

|

||||

|

||||

drag files/folders into the web-browser to upload (or use the [command-line uploader](https://github.com/9001/copyparty/tree/hovudstraum/bin#up2kpy))

|

||||

drag files/folders into the web-browser to upload (or use the [command-line uploader](https://github.com/9001/copyparty/tree/hovudstraum/bin#u2cpy))

|

||||

|

||||

this initiates an upload using `up2k`; there are two uploaders available:

|

||||

* `[🎈] bup`, the basic uploader, supports almost every browser since netscape 4.0

|

||||

@@ -602,6 +622,7 @@ file selection: click somewhere on the line (not the link itsef), then:

|

||||

* `up/down` to move

|

||||

* `shift-up/down` to move-and-select

|

||||

* `ctrl-shift-up/down` to also scroll

|

||||

* shift-click another line for range-select

|

||||

|

||||

* cut: select some files and `ctrl-x`

|

||||

* paste: `ctrl-v` in another folder

|

||||

@@ -685,7 +706,7 @@ open the `[🎺]` media-player-settings tab to configure it,

|

||||

* `[loop]` keeps looping the folder

|

||||

* `[next]` plays into the next folder

|

||||

* transcode:

|

||||

* `[flac]` convers `flac` and `wav` files into opus

|

||||

* `[flac]` converts `flac` and `wav` files into opus

|

||||

* `[aac]` converts `aac` and `m4a` files into opus

|

||||

* `[oth]` converts all other known formats into opus

|

||||

* `aac|ac3|aif|aiff|alac|alaw|amr|ape|au|dfpwm|dts|flac|gsm|it|m4a|mo3|mod|mp2|mp3|mpc|mptm|mt2|mulaw|ogg|okt|opus|ra|s3m|tak|tta|ulaw|wav|wma|wv|xm|xpk`

|

||||

@@ -701,6 +722,11 @@ can also boost the volume in general, or increase/decrease stereo width (like [c

|

||||

has the convenient side-effect of reducing the pause between songs, so gapless albums play better with the eq enabled (just make it flat)

|

||||

|

||||

|

||||

### fix unreliable playback on android

|

||||

|

||||

due to phone / app settings, android phones may randomly stop playing music when the power saver kicks in, especially at the end of an album -- you can fix it by [disabling power saving](https://user-images.githubusercontent.com/241032/235262123-c328cca9-3930-4948-bd18-3949b9fd3fcf.png) in the [app settings](https://user-images.githubusercontent.com/241032/235262121-2ffc51ae-7821-4310-a322-c3b7a507890c.png) of the browser you use for music streaming (preferably a dedicated one)

|

||||

|

||||

|

||||

## markdown viewer

|

||||

|

||||

and there are *two* editors

|

||||

@@ -758,7 +784,7 @@ for the above example to work, add the commandline argument `-e2ts` to also scan

|

||||

using arguments or config files, or a mix of both:

|

||||

* config files (`-c some.conf`) can set additional commandline arguments; see [./docs/example.conf](docs/example.conf) and [./docs/example2.conf](docs/example2.conf)

|

||||

* `kill -s USR1` (same as `systemctl reload copyparty`) to reload accounts and volumes from config files without restarting

|

||||

* or click the `[reload cfg]` button in the control-panel when logged in as admin

|

||||

* or click the `[reload cfg]` button in the control-panel if the user has `a`/admin in any volume

|

||||

* changes to the `[global]` config section require a restart to take effect

|

||||

|

||||

|

||||

@@ -919,14 +945,13 @@ through arguments:

|

||||

* `--xlink` enables deduplication across volumes

|

||||

|

||||

the same arguments can be set as volflags, in addition to `d2d`, `d2ds`, `d2t`, `d2ts`, `d2v` for disabling:

|

||||

* `-v ~/music::r:c,e2dsa,e2tsr` does a full reindex of everything on startup

|

||||

* `-v ~/music::r:c,e2ds,e2tsr` does a full reindex of everything on startup

|

||||

* `-v ~/music::r:c,d2d` disables **all** indexing, even if any `-e2*` are on

|

||||

* `-v ~/music::r:c,d2t` disables all `-e2t*` (tags), does not affect `-e2d*`

|

||||

* `-v ~/music::r:c,d2ds` disables on-boot scans; only index new uploads

|

||||

* `-v ~/music::r:c,d2ts` same except only affecting tags

|

||||

|

||||

note:

|

||||

* the parser can finally handle `c,e2dsa,e2tsr` so you no longer have to `c,e2dsa:c,e2tsr`

|

||||

* `e2tsr` is probably always overkill, since `e2ds`/`e2dsa` would pick up any file modifications and `e2ts` would then reindex those, unless there is a new copyparty version with new parsers and the release note says otherwise

|

||||

* the rescan button in the admin panel has no effect unless the volume has `-e2ds` or higher

|

||||

* deduplication is possible on windows if you run copyparty as administrator (not saying you should!)

|

||||

@@ -948,7 +973,11 @@ avoid traversing into other filesystems using `--xdev` / volflag `:c,xdev`, ski

|

||||

|

||||

and/or you can `--xvol` / `:c,xvol` to ignore all symlinks leaving the volume's top directory, but still allow bind-mounts pointing elsewhere

|

||||

|

||||

**NB: only affects the indexer** -- users can still access anything inside a volume, unless shadowed by another volume

|

||||

* symlinks are permitted with `xvol` if they point into another volume where the user has the same level of access

|

||||

|

||||

these options will reduce performance; unlikely worst-case estimates are 14% reduction for directory listings, 35% for download-as-tar

|

||||

|

||||

as of copyparty v1.7.0 these options also prevent file access at runtime -- in previous versions it was just hints for the indexer

|

||||

|

||||

### periodic rescan

|

||||

|

||||

@@ -965,6 +994,8 @@ set upload rules using volflags, some examples:

|

||||

|

||||

* `:c,sz=1k-3m` sets allowed filesize between 1 KiB and 3 MiB inclusive (suffixes: `b`, `k`, `m`, `g`)

|

||||

* `:c,df=4g` block uploads if there would be less than 4 GiB free disk space afterwards

|

||||

* `:c,vmaxb=1g` block uploads if total volume size would exceed 1 GiB afterwards

|

||||

* `:c,vmaxn=4k` block uploads if volume would contain more than 4096 files afterwards

|

||||

* `:c,nosub` disallow uploading into subdirectories; goes well with `rotn` and `rotf`:

|

||||

* `:c,rotn=1000,2` moves uploads into subfolders, up to 1000 files in each folder before making a new one, two levels deep (must be at least 1)

|

||||

* `:c,rotf=%Y/%m/%d/%H` enforces files to be uploaded into a structure of subfolders according to that date format
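
a minimal python sketch of how the `sz` and `rotf` rules above could be evaluated; this is not copyparty's actual implementation, and the helper names `parse_size`, `size_allowed` and `rotf_folder` are made up for illustration. it assumes binary multipliers (`1k` = 1024 bytes), as implied by the KiB / MiB wording above:

```python
from datetime import datetime

# binary multipliers (assumption): 1k = 1024 bytes, 1m = 1024 KiB, and so on
UNITS = {"b": 1, "k": 1024, "m": 1024**2, "g": 1024**3}


def parse_size(text):
    """convert a string such as '3m' or '512' into a plain number"""
    text = text.strip().lower()
    if text and text[-1] in UNITS:
        return int(float(text[:-1]) * UNITS[text[-1]])
    return int(text)


def size_allowed(nbytes, rule):
    """check a filesize against an inclusive 'min-max' rule such as '1k-3m'"""
    lo, hi = (parse_size(x) for x in rule.split("-", 1))
    return lo <= nbytes <= hi


def rotf_folder(fmt, when):
    """expand a rotf-style date format into a relative folder path"""
    return when.strftime(fmt)
```

for example, `size_allowed(2048, "1k-3m")` is true while `size_allowed(1023, "1k-3m")` is not, and `rotf_folder("%Y/%m/%d/%H", ...)` gives one folder per hour of upload time.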

|

||||

@@ -978,6 +1009,9 @@ you can also set transaction limits which apply per-IP and per-volume, but these

|

||||

* `:c,maxn=250,3600` allows 250 files over 1 hour from each IP (tracked per-volume)

|

||||

* `:c,maxb=1g,300` allows 1 GiB total over 5 minutes from each IP (tracked per-volume)
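
to illustrate what "250 files over 1 hour from each IP (tracked per-volume)" means, here is a small sliding-window counter in python; it is only a sketch of the general technique and not how copyparty does it internally, and the class name `UploadLimiter` and its methods are hypothetical:

```python
import time
from collections import defaultdict, deque


class UploadLimiter:
    """allow at most max_files uploads per window_sec for each (ip, volume)"""

    def __init__(self, max_files, window_sec):
        self.max_files = max_files
        self.window_sec = window_sec
        self.seen = defaultdict(deque)  # (ip, volume) -> upload timestamps

    def allow(self, ip, volume, now=None):
        now = time.time() if now is None else now
        q = self.seen[(ip, volume)]
        # forget uploads which have fallen out of the time window
        while q and q[0] <= now - self.window_sec:
            q.popleft()
        if len(q) >= self.max_files:
            return False
        q.append(now)
        return True
```

`UploadLimiter(250, 3600)` would correspond to `maxn=250,3600`; a byte-based limit like `maxb` could use the same structure by summing sizes instead of counting entries.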

|

||||

|

||||

notes:

|

||||

* `vmaxb` and `vmaxn` requires either the `e2ds` volflag or `-e2dsa` global-option

|

||||

|

||||

|

||||

## compress uploads

|

||||

|

||||

@@ -1108,6 +1142,13 @@ note that this is way more complicated than the new [event hooks](#event-hooks)

|

||||

note that it will occupy the parsing threads, so fork anything expensive (or set `kn` to have copyparty fork it for you) -- otoh if you want to intentionally queue/singlethread you can combine it with `--mtag-mt 1`

|

||||

|

||||

|

||||

## handlers

|

||||

|

||||

redefine behavior with plugins ([examples](./bin/handlers/))

|

||||

|

||||

replace 404 and 403 errors with something completely different (that's it for now)

|

||||

|

||||

|

||||

## hiding from google

|

||||

|

||||

tell search engines you don't wanna be indexed, either using the good old [robots.txt](https://www.robotstxt.org/robotstxt.html) or through copyparty settings:

|

||||

@@ -1144,9 +1185,33 @@ see the top of [./copyparty/web/browser.css](./copyparty/web/browser.css) where

|

||||

|

||||

## complete examples

|

||||

|

||||

* [running on windows](./docs/examples/windows.md)

|

||||

* see [running on windows](./docs/examples/windows.md) for a fancy windows setup

|

||||

|

||||

* read-only music server

|

||||

* or use any of the examples below, just replace `python copyparty-sfx.py` with `copyparty.exe` if you're using the exe edition

|

||||

|

||||

* allow anyone to download or upload files into the current folder:

|

||||

`python copyparty-sfx.py`

|

||||

|

||||

* enable searching and music indexing with `-e2dsa -e2ts`

|

||||

|

||||

* start an FTP server on port 3921 with `--ftp 3921`

|

||||

|

||||

* announce it on your LAN with `-z` so it appears in windows/Linux file managers

|

||||

|

||||

* anyone can upload, but nobody can see any files (even the uploader):

|

||||

`python copyparty-sfx.py -e2dsa -v .::w`

|

||||

|

||||

* block uploads if there's less than 4 GiB free disk space with `--df 4`

|

||||

|

||||

* show a popup on new uploads with `--xau bin/hooks/notify.py`

|

||||

|

||||

* anyone can upload, and receive "secret" links for each upload they do:

|

||||

`python copyparty-sfx.py -e2dsa -v .::wG:c,fk=8`

|

||||

|

||||

* anyone can browse, only `kevin` (password `okgo`) can upload/move/delete files:

|

||||

`python copyparty-sfx.py -e2dsa -a kevin:okgo -v .::r:rwmd,kevin`

|

||||

|

||||

* read-only music server:

|

||||

`python copyparty-sfx.py -v /mnt/nas/music:/music:r -e2dsa -e2ts --no-robots --force-js --theme 2`

|

||||

|

||||

* ...with bpm and key scanning
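
the `-v` arguments in the examples above all follow the pattern `source:url-path:permissions[,users]`, optionally followed by `:c,volflags`. the python sketch below takes such a string apart to show how the pieces relate; it is an illustration only, not copyparty's own parser, `parse_volume` is a made-up name, and it assumes the source path contains no colons (so no windows drive letters):

```python
def parse_volume(spec):
    """split a '-v' value into source, url path, access rules and volflags"""
    src, dst, *rest = spec.split(":")
    access = {}  # permission letters -> usernames (empty list = everyone)
    flags = {}  # volflag -> value, or True if the flag has no value
    for part in rest:
        head, *tail = part.split(",")
        if head == "c":
            for flag in tail:
                key, _, val = flag.partition("=")
                flags[key] = val or True
        else:
            access[head] = tail
    return {
        "src": src,
        "dst": "/" + dst.lstrip("/"),
        "access": access,
        "flags": flags,
    }
```

so `.::r:rwmd,kevin` comes out as the current folder mounted at `/`, with `r` for everyone and `rwmd` for `kevin` only.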

|

||||

@@ -1169,6 +1234,7 @@ you can either:

|

||||

* if copyparty says `incorrect --rp-loc or webserver config; expected vpath starting with [...]` it's likely because the webserver is stripping away the proxy location from the request URLs -- see the `ProxyPass` in the apache example below

|

||||

|

||||

some reverse proxies (such as [Caddy](https://caddyserver.com/)) can automatically obtain a valid https/tls certificate for you, and some support HTTP/2 and QUIC which could be a nice speed boost

|

||||

* **warning:** nginx-QUIC is still experimental and can make uploads much slower, so HTTP/2 is recommended for now

|

||||

|

||||

example webserver configs:

|

||||

|

||||

@@ -1176,6 +1242,74 @@ example webserver configs:

|

||||

* [apache2 config](contrib/apache/copyparty.conf) -- location-based

|

||||

|

||||

|

||||

## prometheus

|

||||

|

||||

metrics/stats can be enabled at URL `/.cpr/metrics` for grafana / prometheus / etc (openmetrics 1.0.0)

|

||||

|

||||

must be enabled with `--stats` since it slows down startup a tiny bit, and you probably want `-e2dsa` too

|

||||

|

||||

the endpoint is only accessible by `admin` accounts, meaning the `a` in `rwmda` in the following example commandline: `python3 -m copyparty -a ed:wark -v /mnt/nas::rwmda,ed --stats -e2dsa`

|

||||

|

||||

follow a guide for setting up `node_exporter` except have it read from copyparty instead; example `/etc/prometheus/prometheus.yml` below

|

||||

|

||||

```yaml

|

||||

scrape_configs:

|

||||

- job_name: copyparty

|

||||

metrics_path: /.cpr/metrics

|

||||

basic_auth:

|

||||

password: wark

|

||||

static_configs:

|

||||

- targets: ['192.168.123.1:3923']

|

||||

```

|

||||

|

||||

currently the following metrics are available,

|

||||

* `cpp_uptime_seconds`

|

||||

* `cpp_bans` number of banned IPs

|

||||

|

||||

and these are available per-volume only:

|

||||

* `cpp_disk_size_bytes` total HDD size

|

||||

* `cpp_disk_free_bytes` free HDD space

|

||||

|

||||

and these are per-volume and `total`:

|

||||

* `cpp_vol_bytes` size of all files in volume

|

||||

* `cpp_vol_files` number of files

|

||||

* `cpp_dupe_bytes` disk space presumably saved by deduplication

|

||||

* `cpp_dupe_files` number of dupe files

|

||||

* `cpp_unf_bytes` currently unfinished / incoming uploads

|

||||

|

||||

some of the metrics have additional requirements to function correctly,

|

||||

* `cpp_vol_*` requires either the `e2ds` volflag or `-e2dsa` global-option

|

||||

|

||||

the following options are available to disable some of the metrics:

|

||||

* `--nos-hdd` disables `cpp_disk_*` which can prevent spinning up HDDs

|

||||

* `--nos-vol` disables `cpp_vol_*` which reduces server startup time

|

||||

* `--nos-dup` disables `cpp_dupe_*` which reduces the server load caused by prometheus queries

|

||||

* `--nos-unf` disables `cpp_unf_*` for no particular purpose
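
if you just want to eyeball the numbers without a full prometheus setup, the text format is simple enough to read by hand. the python sketch below turns a metrics response into a dict; it is a rough illustration, `parse_metrics` is a made-up helper, the sample values are invented, and it assumes one `name value` pair per line with no trailing timestamps:

```python
def parse_metrics(text):
    """turn openmetrics-style text into a {name: value} dict"""
    out = {}
    for line in text.splitlines():
        line = line.strip()
        # skip blank lines and comments such as '# TYPE ...' and '# EOF'
        if not line or line.startswith("#"):
            continue
        # the value is the last space-separated field on the line
        name, _, value = line.rpartition(" ")
        out[name] = float(value)
    return out


# invented sample; a real response would come from /.cpr/metrics
sample = """\
# TYPE cpp_uptime_seconds gauge
cpp_uptime_seconds 123.5
cpp_bans 0
# EOF
"""
metrics = parse_metrics(sample)
```

per-volume metrics would presumably carry labels in the name part (something like `{vol="/music"}`), which this sketch keeps as part of the dict key rather than parsing further.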

|

||||

|

||||

|

||||

# packages

|

||||

|

||||

the party might be closer than you think

|

||||

|

||||

|

||||

## arch package

|

||||

|

||||

now [available on aur](https://aur.archlinux.org/packages/copyparty) maintained by [@icxes](https://github.com/icxes)

|

||||

|

||||

|

||||

## fedora package

|

||||

|

||||

now [available on copr-pypi](https://copr.fedorainfracloud.org/coprs/g/copr/PyPI/), maintained autonomously -- [track record](https://copr.fedorainfracloud.org/coprs/g/copr/PyPI/package/python-copyparty/) seems OK

|

||||

|

||||

```bash

|

||||

dnf copr enable @copr/PyPI

|

||||

dnf install python3-copyparty # just a minimal install, or...

|

||||

dnf install python3-{copyparty,pillow,argon2-cffi,pyftpdlib,pyOpenSSL} ffmpeg-free # with recommended deps

|

||||

```

|

||||

|

||||

this *may* also work on RHEL but [I'm not paying IBM to verify that](https://www.jeffgeerling.com/blog/2023/dear-red-hat-are-you-dumb)

|

||||

|

||||

|

||||

## nix package

|

||||

|

||||

`nix profile install github:9001/copyparty`

|

||||

@@ -1350,10 +1484,10 @@ interact with copyparty using non-browser clients

|

||||

* `(printf 'PUT /junk?pw=wark HTTP/1.1\r\n\r\n'; cat movie.mkv) | nc 127.0.0.1 3923`

|

||||

* `(printf 'PUT / HTTP/1.1\r\n\r\n'; cat movie.mkv) >/dev/tcp/127.0.0.1/3923`

|

||||

|

||||

* python: [up2k.py](https://github.com/9001/copyparty/blob/hovudstraum/bin/up2k.py) is a command-line up2k client [(webm)](https://ocv.me/stuff/u2cli.webm)

|

||||

* python: [u2c.py](https://github.com/9001/copyparty/blob/hovudstraum/bin/u2c.py) is a command-line up2k client [(webm)](https://ocv.me/stuff/u2cli.webm)

|

||||

* file uploads, file-search, [folder sync](#folder-sync), autoresume of aborted/broken uploads

|

||||

* can be downloaded from copyparty: controlpanel -> connect -> [up2k.py](http://127.0.0.1:3923/.cpr/a/up2k.py)

|

||||

* see [./bin/README.md#up2kpy](bin/README.md#up2kpy)

|

||||

* can be downloaded from copyparty: controlpanel -> connect -> [u2c.py](http://127.0.0.1:3923/.cpr/a/u2c.py)

|

||||

* see [./bin/README.md#u2cpy](bin/README.md#u2cpy)

|

||||

|

||||

* FUSE: mount a copyparty server as a local filesystem

|

||||

* cross-platform python client available in [./bin/](bin/)

|

||||

@@ -1376,11 +1510,11 @@ NOTE: curl will not send the original filename if you use `-T` combined with url

|

||||

|

||||

sync folders to/from copyparty

|

||||

|

||||

the commandline uploader [up2k.py](https://github.com/9001/copyparty/tree/hovudstraum/bin#up2kpy) with `--dr` is the best way to sync a folder to copyparty; verifies checksums and does files in parallel, and deletes unexpected files on the server after upload has finished which makes file-renames really cheap (it'll rename serverside and skip uploading)

|

||||

the commandline uploader [u2c.py](https://github.com/9001/copyparty/tree/hovudstraum/bin#u2cpy) with `--dr` is the best way to sync a folder to copyparty; verifies checksums and does files in parallel, and deletes unexpected files on the server after upload has finished which makes file-renames really cheap (it'll rename serverside and skip uploading)

|

||||

|

||||

alternatively there is [rclone](./docs/rclone.md) which allows for bidirectional sync and is *way* more flexible (stream files straight from sftp/s3/gcs to copyparty, ...), although there is no integrity check and it won't work with files over 100 MiB if copyparty is behind cloudflare

|

||||

|

||||

* starting from rclone v1.63 (currently [in beta](https://beta.rclone.org/?filter=latest)), rclone will also be faster than up2k.py

|

||||

* starting from rclone v1.63 (currently [in beta](https://beta.rclone.org/?filter=latest)), rclone will also be faster than u2c.py

|

||||

|

||||

|

||||

## mount as drive

|

||||

@@ -1447,7 +1581,7 @@ when uploading files,

|

||||

* chrome is recommended, at least compared to firefox:

|

||||

* up to 90% faster when hashing, especially on SSDs

|

||||

* up to 40% faster when uploading over extremely fast internets

|

||||

* but [up2k.py](https://github.com/9001/copyparty/blob/hovudstraum/bin/up2k.py) can be 40% faster than chrome again

|

||||

* but [u2c.py](https://github.com/9001/copyparty/blob/hovudstraum/bin/u2c.py) can be 40% faster than chrome again

|

||||

|

||||

* if you're cpu-bottlenecked, or the browser is maxing a cpu core:

|

||||

* up to 30% faster uploads if you hide the upload status list by switching away from the `[🚀]` up2k ui-tab (or closing it)

|

||||

@@ -1458,10 +1592,13 @@ when uploading files,

|

||||

|

||||

# security

|

||||

|

||||

there is a [discord server](https://discord.gg/25J8CdTT6G) with an `@everyone` for all important updates (for lack of better ideas)

|

||||

|

||||

some notes on hardening

|

||||

|

||||

* set `--rproxy 0` if your copyparty is directly facing the internet (not through a reverse-proxy)

|

||||

* cors doesn't work right otherwise

|

||||

* if you allow anonymous uploads or otherwise don't trust the contents of a volume, you can prevent XSS with volflag `nohtml`

|

||||

|

||||

safety profiles:

|

||||

|

||||

@@ -1517,12 +1654,28 @@ by default, except for `GET` and `HEAD` operations, all requests must either:

|

||||

cors can be configured with `--acao` and `--acam`, or the protections entirely disabled with `--allow-csrf`

|

||||

|

||||

|

||||

## password hashing

|

||||

|

||||

you can hash passwords before putting them into config files / providing them as arguments; see `--help-pwhash` for all the details

|

||||

|

||||

`--ah-alg argon2` enables it, and if you have any plaintext passwords then it'll print the hashed versions on startup so you can replace them

|

||||

|

||||

optionally also specify `--ah-cli` to enter an interactive mode where it will hash passwords without ever writing the plaintext ones to disk

|

||||

|

||||

the default configs take about 0.4 sec and 256 MiB RAM to process a new password on a decent laptop

|

||||

|

||||

|

||||

## https

|

||||

|

||||

both HTTP and HTTPS are accepted by default, but letting a [reverse proxy](#reverse-proxy) handle the https/tls/ssl would be better (probably more secure by default)

|

||||

|

||||

copyparty doesn't speak HTTP/2 or QUIC, so using a reverse proxy would solve that as well

|

||||

|

||||

if [cfssl](https://github.com/cloudflare/cfssl/releases/latest) is installed, copyparty will automatically create a CA and server-cert on startup

|

||||

* the certs are written to `--crt-dir` for distribution, see `--help` for the other `--crt` options

|

||||

* this will be a self-signed certificate so you must install your `ca.pem` into all your browsers/devices

|

||||

* if you want to avoid the hassle of distributing certs manually, please consider using a reverse proxy

|

||||

|

||||

|

||||

# recovering from crashes

|

||||

|

||||

@@ -1559,6 +1712,8 @@ mandatory deps:

|

||||

|

||||

install these to enable bonus features

|

||||

|

||||

enable hashed passwords in config: `argon2-cffi`

|

||||

|

||||

enable ftp-server:

|

||||

* for just plaintext FTP, `pyftpdlib` (is built into the SFX)

|

||||

* with TLS encryption, `pyftpdlib pyopenssl`

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

# [`up2k.py`](up2k.py)

|

||||

# [`u2c.py`](u2c.py)

|

||||

* command-line up2k client [(webm)](https://ocv.me/stuff/u2cli.webm)

|

||||

* file uploads, file-search, autoresume of aborted/broken uploads

|

||||

* sync local folder to server

|

||||

|

||||

35

bin/handlers/README.md

Normal file

@@ -0,0 +1,35 @@

|

||||

replace the standard 404 / 403 responses with plugins

|

||||

|

||||

|

||||

# usage

|

||||

|

||||

load plugins either globally with `--on404 ~/dev/copyparty/bin/handlers/sorry.py` or for a specific volume with `:c,on404=~/handlers/sorry.py`

|

||||

|

||||

|

||||

# api

|

||||

|

||||

each plugin must define a `main()` which takes 3 arguments;

|

||||

|

||||

* `cli` is an instance of [copyparty/httpcli.py](https://github.com/9001/copyparty/blob/hovudstraum/copyparty/httpcli.py) (the monstrosity itself)

|

||||

* `vn` is the VFS which overlaps with the requested URL, and

|

||||

* `rem` is the URL remainder below the VFS mountpoint

|

||||

* so `vn.vpath + rem` == `cli.vpath` == original request
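
The relationship between the three arguments can be sketched with stand-in objects. This is only an illustration, not copyparty's own code: `SimpleNamespace` replaces the real `HttpCli` and VFS objects, `split_request` is a hypothetical helper, and joining the parts with `/` (no leading slash) is an assumption about how the paths are represented.

```python
from types import SimpleNamespace


def split_request(cli, vn, rem):
    """show how a handler could relate its three arguments (illustrative only)"""
    # assumption: the volume path and the remainder are joined with "/",
    # and an empty part (a request for the volume root) is simply dropped
    joined = "/".join(part for part in (vn.vpath, rem) if part)
    return {
        "volume": vn.vpath,
        "remainder": rem,
        "request": cli.vpath,
        "consistent": joined == cli.vpath,
    }


# stand-ins for the objects copyparty would pass to main(cli, vn, rem)
cli = SimpleNamespace(ip="127.0.0.1", vpath="music/albums/a.flac")
vn = SimpleNamespace(vpath="music")
info = split_request(cli, vn, "albums/a.flac")
print(info)
```

A real handler would receive these values from copyparty rather than building them itself; the stand-ins only make the sketch runnable on its own.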

|

||||

|

||||

|

||||

# examples

|

||||

|

||||

## on404

|

||||

|

||||

* [sorry.py](sorry.py) replies with a custom message instead of the usual 404

|

||||

* [nooo.py](nooo.py) replies with an endless noooooooooooooo

|

||||

* [never404.py](never404.py) guarantees that 404 will never be a thing again, since it automatically creates dummy files whenever necessary

|

||||

* [caching-proxy.py](caching-proxy.py) transforms copyparty into a squid/varnish knockoff

|

||||

|

||||

## on403

|

||||

|

||||

* [ip-ok.py](ip-ok.py) disables security checks if client-ip is 1.2.3.4

|

||||

|

||||

|

||||

# notes

|

||||

|

||||

* on403 only works for trivial stuff (basic http access) since I haven't been able to think of any good usecases for it (was just easy to add while doing on404)

|

||||

36

bin/handlers/caching-proxy.py

Executable file

@@ -0,0 +1,36 @@

|

||||

# assume each requested file exists on another webserver and
# download + mirror them as they're requested
# (basically pretend we're warnish)

import os
import requests

from typing import TYPE_CHECKING

if TYPE_CHECKING:
    from copyparty.httpcli import HttpCli


def main(cli: "HttpCli", vn, rem):
    url = "https://mirrors.edge.kernel.org/alpine/" + rem
    abspath = os.path.join(vn.realpath, rem)

    # sneaky trick to preserve a requests-session between downloads
    # so it doesn't have to spend ages reopening https connections;
    # luckily we can stash it inside the copyparty client session,
    # name just has to be definitely unused so "hacapo_req_s" it is
    req_s = getattr(cli.conn, "hacapo_req_s", None) or requests.Session()
    setattr(cli.conn, "hacapo_req_s", req_s)

    try:
        os.makedirs(os.path.dirname(abspath), exist_ok=True)
        with req_s.get(url, stream=True, timeout=69) as r:
            r.raise_for_status()
            with open(abspath, "wb", 64 * 1024) as f:
                for buf in r.iter_content(chunk_size=64 * 1024):
                    f.write(buf)
    except:
        os.unlink(abspath)
        return "false"

    return "retry"

|

||||

6

bin/handlers/ip-ok.py

Executable file

@@ -0,0 +1,6 @@

|

||||

# disable permission checks and allow access if client-ip is 1.2.3.4


def main(cli, vn, rem):
    if cli.ip == "1.2.3.4":
        return "allow"

|

||||

11

bin/handlers/never404.py

Executable file

@@ -0,0 +1,11 @@

|

||||

# create a dummy file and let copyparty return it


def main(cli, vn, rem):
    print("hello", cli.ip)

    abspath = vn.canonical(rem)
    with open(abspath, "wb") as f:
        f.write(b"404? not on MY watch!")

    return "retry"

|

||||

16

bin/handlers/nooo.py

Executable file

@@ -0,0 +1,16 @@

|

||||

# reply with an endless "noooooooooooooooooooooooo"


def say_no():
    yield b"n"
    while True:
        yield b"o" * 4096


def main(cli, vn, rem):
    cli.send_headers(None, 404, "text/plain")

    for chunk in say_no():
        cli.s.sendall(chunk)

    return "false"

|

||||

7

bin/handlers/sorry.py

Executable file

@@ -0,0 +1,7 @@

|

||||

# sends a custom response instead of the usual 404


def main(cli, vn, rem):
    msg = f"sorry {cli.ip} but {cli.vpath} doesn't exist"

    return str(cli.reply(msg.encode("utf-8"), 404, "text/plain"))

|

||||

@@ -37,6 +37,10 @@ def main():

|

||||

if "://" not in url:

|

||||

url = "https://" + url

|

||||

|

||||

proto = url.split("://")[0].lower()

|

||||

if proto not in ("http", "https", "ftp", "ftps"):

|

||||

raise Exception("bad proto {}".format(proto))

|

||||

|

||||

os.chdir(inf["ap"])

|

||||

|

||||

name = url.split("?")[0].split("/")[-1]

|

||||

|

||||

@@ -24,6 +24,15 @@ these do not have any problematic dependencies at all:

|

||||

* also available as an [event hook](../hooks/wget.py)

|

||||

|

||||

|

||||

## dangerous plugins

|

||||

|

||||

plugins in this section should only be used with appropriate precautions:

|

||||

|

||||

* [very-bad-idea.py](./very-bad-idea.py) combined with [meadup.js](https://github.com/9001/copyparty/blob/hovudstraum/contrib/plugins/meadup.js) converts copyparty into a janky yet extremely flexible chromecast clone

|

||||

* also adds a virtual keyboard by @steinuil to the basic-upload tab for comfy couch crowd control

|

||||

* anything uploaded through the [android app](https://github.com/9001/party-up) (files or links) is executed on the server, meaning anyone can infect your PC with malware... so protect this with a password and keep it on a LAN!

|

||||

|

||||

|

||||

# dependencies

|

||||

|

||||

run [`install-deps.sh`](install-deps.sh) to build/install most dependencies required by these programs (supports windows/linux/macos)

|

||||

|

||||

@@ -1,6 +1,11 @@

|

||||

#!/usr/bin/env python3

|

||||

|

||||

"""

|

||||

WARNING -- DANGEROUS PLUGIN --

|

||||

if someone is able to upload files to a copyparty which is

|

||||

running this plugin, they can execute malware on your machine

|

||||

so please keep this on a LAN and protect it with a password

|

||||

|

||||

use copyparty as a chromecast replacement:

|

||||

* post a URL and it will open in the default browser

|

||||

* upload a file and it will open in the default application

|

||||

@@ -10,16 +15,17 @@ use copyparty as a chromecast replacement:

|

||||

|

||||

the android app makes it a breeze to post pics and links:

|

||||

https://github.com/9001/party-up/releases

|

||||

(iOS devices have to rely on the web-UI)

|

||||

|

||||

goes without saying, but this is HELLA DANGEROUS,

|

||||

GIVES RCE TO ANYONE WHO HAVE UPLOAD PERMISSIONS

|

||||

iOS devices can use the web-UI or the shortcut instead:

|

||||

https://github.com/9001/copyparty#ios-shortcuts

|

||||

|

||||

example copyparty config to use this:

|

||||

--urlform save,get -v.::w:c,e2d,e2t,mte=+a1:c,mtp=a1=ad,kn,c0,bin/mtag/very-bad-idea.py

|

||||

example copyparty config to use this;

|

||||

lets the user "kevin" with password "hunter2" use this plugin:

|

||||

-a kevin:hunter2 --urlform save,get -v.::w,kevin:c,e2d,e2t,mte=+a1:c,mtp=a1=ad,kn,c0,bin/mtag/very-bad-idea.py

|

||||

|

||||

recommended deps:

|

||||

apt install xdotool libnotify-bin

|

||||

apt install xdotool libnotify-bin mpv

|

||||

python3 -m pip install --user -U streamlink yt-dlp

|

||||

https://github.com/9001/copyparty/blob/hovudstraum/contrib/plugins/meadup.js

|

||||

|

||||

and you probably want `twitter-unmute.user.js` from the res folder

|

||||

@@ -63,8 +69,10 @@ set -e

|

||||

EOF

|

||||

chmod 755 /usr/local/bin/chromium-browser

|

||||

|

||||

# start the server (note: replace `-v.::rw:` with `-v.::w:` to disallow retrieving uploaded stuff)

|

||||

cd ~/Downloads; python3 copyparty-sfx.py --urlform save,get -v.::rw:c,e2d,e2t,mte=+a1:c,mtp=a1=ad,kn,very-bad-idea.py

|

||||

# start the server

|

||||

# note 1: replace hunter2 with a better password to access the server

|

||||

# note 2: replace `-v.::rw` with `-v.::w` to disallow retrieving uploaded stuff

|

||||

cd ~/Downloads; python3 copyparty-sfx.py -a kevin:hunter2 --urlform save,get -v.::rw,kevin:c,e2d,e2t,mte=+a1:c,mtp=a1=ad,kn,very-bad-idea.py

|

||||

|

||||

"""

|

||||

|

||||

@@ -72,11 +80,23 @@ cd ~/Downloads; python3 copyparty-sfx.py --urlform save,get -v.::rw:c,e2d,e2t,mt

|

||||

import os

|

||||

import sys

|

||||

import time

|

||||

import shutil

|

||||

import subprocess as sp

|

||||

from urllib.parse import unquote_to_bytes as unquote

|

||||

from urllib.parse import quote

|

||||

|

||||

have_mpv = shutil.which("mpv")

|

||||

have_vlc = shutil.which("vlc")

|

||||

|

||||

|

||||

def main():

|

||||

if len(sys.argv) > 2 and sys.argv[1] == "x":

|

||||

# invoked on commandline for testing;

|

||||

# python3 very-bad-idea.py x msg=https://youtu.be/dQw4w9WgXcQ

|

||||

txt = " ".join(sys.argv[2:])

|

||||

txt = quote(txt.replace(" ", "+"))

|

||||

return open_post(txt.encode("utf-8"))

|

||||

|

||||

fp = os.path.abspath(sys.argv[1])

|

||||

with open(fp, "rb") as f:

|

||||

txt = f.read(4096)

|

||||

@@ -92,7 +112,7 @@ def open_post(txt):

|

||||

try:

|

||||

k, v = txt.split(" ", 1)

|

||||

except:

|

||||

open_url(txt)

|

||||

return open_url(txt)

|

||||

|

||||

if k == "key":

|

||||

sp.call(["xdotool", "key"] + v.split(" "))

|

||||

@@ -128,6 +148,17 @@ def open_url(txt):

|

||||

# else:

|

||||

# sp.call(["xdotool", "getactivewindow", "windowminimize"]) # minimizes the focused window

|

||||

|

||||

# mpv is probably smart enough to use streamlink automatically

|

||||

if try_mpv(txt):

|

||||

print("mpv got it")

|

||||

return

|

||||

|

||||

# or maybe streamlink would be a good choice to open this

|

||||

if try_streamlink(txt):

|

||||

print("streamlink got it")

|

||||

return

|

||||

|

||||

# nope,

|

||||

# close any error messages:

|

||||

sp.call(["xdotool", "search", "--name", "Error", "windowclose"])

|

||||

# sp.call(["xdotool", "key", "ctrl+alt+d"]) # doesn't work at all

|

||||

@@ -136,4 +167,39 @@ def open_url(txt):

|

||||

sp.call(["xdg-open", txt])

|

||||

|

||||

|

||||

def try_mpv(url):
    t0 = time.time()
    try:
        print("trying mpv...")
        sp.check_call(["mpv", "--fs", url])
        return True
    except:
        # if it ran for 15 sec it probably succeeded and terminated
        t = time.time()
        return t - t0 > 15


def try_streamlink(url):
    t0 = time.time()
    try:
        import streamlink

        print("trying streamlink...")
        streamlink.Streamlink().resolve_url(url)

        if have_mpv:
            args = "-m streamlink -p mpv -a --fs"
        else:
            args = "-m streamlink"

        cmd = [sys.executable] + args.split() + [url, "best"]
        t0 = time.time()
        sp.check_call(cmd)
        return True
    except:
        # if it ran for 10 sec it probably succeeded and terminated
        t = time.time()
        return t - t0 > 10
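
Both functions above rely on the same heuristic: a clean exit counts as success, and a failure counts as success only if the process ran long enough that it probably played something first. A minimal standalone sketch of that idea follows. `ran_ok` is a hypothetical helper, the two commands are stand-ins for mpv / streamlink, and unlike the plugin it catches specific exceptions rather than using a bare `except`.

```python
import subprocess as sp
import sys
import time


def ran_ok(cmd, min_secs):
    """True if cmd exits cleanly, or fails only after running longer than min_secs"""
    t0 = time.time()
    try:
        sp.check_call(cmd)
        return True
    except (sp.CalledProcessError, OSError):
        # a late failure is treated as "it played, then someone closed it"
        return time.time() - t0 > min_secs


# hypothetical commands standing in for a media player
quick_fail = [sys.executable, "-c", "import sys; sys.exit(1)"]
clean_exit = [sys.executable, "-c", "pass"]
results = (ran_ok(quick_fail, 15), ran_ok(clean_exit, 15))
print(results)
```

The threshold is a guess about how long a player runs before a user closes it, so it may need tuning for short clips.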

|

||||

|

||||

|

||||

main()

|

||||

|

||||

@@ -65,6 +65,10 @@ def main():

|

||||

if "://" not in url:

|

||||

url = "https://" + url

|

||||

|

||||

proto = url.split("://")[0].lower()

|

||||

if proto not in ("http", "https", "ftp", "ftps"):

|

||||

raise Exception("bad proto {}".format(proto))

|

||||

|

||||

os.chdir(fdir)

|

||||

|

||||

name = url.split("?")[0].split("/")[-1]

|

||||

|

||||

@@ -1,19 +1,20 @@

|

||||

#!/usr/bin/env python3

|

||||

from __future__ import print_function, unicode_literals

|

||||

|

||||

S_VERSION = "1.6"

|

||||

S_BUILD_DT = "2023-04-20"

|

||||

S_VERSION = "1.10"

|

||||

S_BUILD_DT = "2023-08-15"

|

||||

|

||||

"""

|

||||

up2k.py: upload to copyparty

|

||||

u2c.py: upload to copyparty

|

||||

2021, ed <irc.rizon.net>, MIT-Licensed

|

||||

https://github.com/9001/copyparty/blob/hovudstraum/bin/up2k.py

|

||||

https://github.com/9001/copyparty/blob/hovudstraum/bin/u2c.py

|

||||

|

||||

- dependencies: requests

|

||||

- supports python 2.6, 2.7, and 3.3 through 3.12

|

||||

- if something breaks just try again and it'll autoresume

|

||||

"""

|

||||

|

||||

import re

|

||||

import os

|

||||

import sys

|

||||

import stat

|

||||

@@ -21,6 +22,7 @@ import math

|

||||

import time

|

||||

import atexit

|

||||

import signal

|

||||

import socket

|

||||

import base64

|

||||

import hashlib

|

||||

import platform

|

||||

@@ -58,6 +60,7 @@ PY2 = sys.version_info < (3,)

|

||||

if PY2:

|

||||

from Queue import Queue

|

||||

from urllib import quote, unquote

|

||||

from urlparse import urlsplit, urlunsplit

|

||||

|

||||

sys.dont_write_bytecode = True

|

||||

bytes = str

|

||||

@@ -65,6 +68,7 @@ else:

|

||||

from queue import Queue

|

||||

from urllib.parse import unquote_to_bytes as unquote

|

||||

from urllib.parse import quote_from_bytes as quote

|

||||

from urllib.parse import urlsplit, urlunsplit

|

||||

|

||||

unicode = str

|

||||

|

||||

@@ -337,6 +341,32 @@ class CTermsize(object):

|

||||

ss = CTermsize()

|

||||

|

||||

|

||||

def undns(url):
    usp = urlsplit(url)
    hn = usp.hostname
    gai = None
    eprint("resolving host [{0}] ...".format(hn), end="")
    try:
        gai = socket.getaddrinfo(hn, None)
        hn = gai[0][4][0]
    except KeyboardInterrupt:
        raise
    except:
        t = "\n\033[31mfailed to resolve upload destination host;\033[0m\ngai={0}\n"
        eprint(t.format(repr(gai)))
        raise

    if usp.port:
        hn = "{0}:{1}".format(hn, usp.port)
    if usp.username or usp.password:
        hn = "{0}:{1}@{2}".format(usp.username, usp.password, hn)

    usp = usp._replace(netloc=hn)
    url = urlunsplit(usp)
    eprint(" {0}".format(url))
    return url
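
The URL rewriting in `undns` can be tried without any network access. The sketch below isolates that step under a few assumptions: `swap_host` is a hypothetical helper, the IP is passed in rather than looked up, and the example URL and address are made up for illustration.

```python
from urllib.parse import urlsplit, urlunsplit


def swap_host(url, new_host):
    """replace the hostname in url with new_host, keeping port and credentials"""
    usp = urlsplit(url)
    netloc = new_host
    if usp.port:
        netloc = "{0}:{1}".format(netloc, usp.port)
    if usp.username or usp.password:
        netloc = "{0}:{1}@{2}".format(usp.username, usp.password, netloc)
    return urlunsplit(usp._replace(netloc=netloc))


# 192.0.2.10 is a documentation-only address, standing in for a lookup result
rewritten = swap_host("https://ed:wark@example.com:3923/inc/?v=1", "192.0.2.10")
print(rewritten)
```

Because the hostname is replaced by an IP, a TLS certificate issued for the original name will no longer match, which is what the `--rh` help text warns about.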

|

||||

|

||||

|

||||

def _scd(err, top):

|

||||

"""non-recursive listing of directory contents, along with stat() info"""

|

||||

with os.scandir(top) as dh:

|

||||

@@ -382,10 +412,11 @@ def walkdir(err, top, seen):

|

||||

err.append((ap, str(ex)))

|

||||

|

||||

|

||||

def walkdirs(err, tops):

|

||||

def walkdirs(err, tops, excl):

|

||||

"""recursive statdir for a list of tops, yields [top, relpath, stat]"""

|

||||

sep = "{0}".format(os.sep).encode("ascii")

|

||||

if not VT100:

|

||||

excl = excl.replace("/", r"\\")

|

||||

za = []

|

||||

for td in tops:

|

||||

try:

|

||||

@@ -402,6 +433,8 @@ def walkdirs(err, tops):

|

||||

za = [x.replace(b"/", b"\\") for x in za]

|

||||

tops = za

|

||||

|

||||

ptn = re.compile(excl.encode("utf-8") or b"\n")

|

||||

|

||||

for top in tops:

|

||||

isdir = os.path.isdir(top)

|

||||

if top[-1:] == sep:

|

||||

@@ -414,6 +447,8 @@ def walkdirs(err, tops):

|

||||

|

||||

if isdir:

|

||||

for ap, inf in walkdir(err, top, []):

|

||||

if ptn.match(ap):

|

||||

continue

|

||||

yield stop, ap[len(stop) :].lstrip(sep), inf

|

||||

else:

|

||||

d, n = top.rsplit(sep, 1)

|

||||

@@ -625,7 +660,7 @@ class Ctl(object):

|

||||

nfiles = 0

|

||||

nbytes = 0

|

||||

err = []

|

||||

for _, _, inf in walkdirs(err, ar.files):

|

||||

for _, _, inf in walkdirs(err, ar.files, ar.x):

|

||||

if stat.S_ISDIR(inf.st_mode):

|

||||

continue

|

||||

|

||||

@@ -667,7 +702,7 @@ class Ctl(object):

|

||||

if ar.te:

|

||||

req_ses.verify = ar.te

|

||||

|

||||

self.filegen = walkdirs([], ar.files)

|

||||

self.filegen = walkdirs([], ar.files, ar.x)

|

||||

self.recheck = [] # type: list[File]

|

||||

|

||||

if ar.safe:

|

||||

@@ -853,7 +888,7 @@ class Ctl(object):

|

||||

print(" ls ~{0}".format(srd))

|

||||

zb = self.ar.url.encode("utf-8")

|

||||

zb += quotep(rd.replace(b"\\", b"/"))

|

||||

r = req_ses.get(zb + b"?ls&dots", headers=headers)

|

||||

r = req_ses.get(zb + b"?ls&lt&dots", headers=headers)

|

||||

if not r:

|

||||

raise Exception("HTTP {0}".format(r.status_code))

|

||||

|

||||

@@ -931,7 +966,7 @@ class Ctl(object):

|

||||

|

||||

upath = file.abs.decode("utf-8", "replace")

|

||||

if not VT100:

|

||||

upath = upath[4:]

|

||||

upath = upath.lstrip("\\?")

|

||||

|

||||

hs, sprs = handshake(self.ar, file, search)

|

||||

if search:

|

||||

@@ -1068,11 +1103,13 @@ source file/folder selection uses rsync syntax, meaning that:

|

||||

ap.add_argument("-v", action="store_true", help="verbose")

|

||||

ap.add_argument("-a", metavar="PASSWORD", help="password or $filepath")

|

||||

ap.add_argument("-s", action="store_true", help="file-search (disables upload)")

|

||||

ap.add_argument("-x", type=unicode, metavar="REGEX", default="", help="skip file if filesystem-abspath matches REGEX, example: '.*/\.hist/.*'")

|

||||

ap.add_argument("--ok", action="store_true", help="continue even if some local files are inaccessible")

|

||||

ap.add_argument("--version", action="store_true", help="show version and exit")

|

||||

|

||||

ap = app.add_argument_group("compatibility")

|

||||

ap.add_argument("--cls", action="store_true", help="clear screen before start")

|

||||

ap.add_argument("--rh", type=int, metavar="TRIES", default=0, help="resolve server hostname before upload (good for buggy networks, but TLS certs will break)")

|

||||

|

||||

ap = app.add_argument_group("folder sync")

|

||||

ap.add_argument("--dl", action="store_true", help="delete local files after uploading")

|

||||

@@ -1083,7 +1120,7 @@ source file/folder selection uses rsync syntax, meaning that:

|

||||

ap.add_argument("-j", type=int, metavar="THREADS", default=4, help="parallel connections")

|

||||

ap.add_argument("-J", type=int, metavar="THREADS", default=hcores, help="num cpu-cores to use for hashing; set 0 or 1 for single-core hashing")

|

||||

ap.add_argument("-nh", action="store_true", help="disable hashing while uploading")

|

||||

ap.add_argument("-ns", action="store_true", help="no status panel (for slow consoles)")

|

||||

ap.add_argument("-ns", action="store_true", help="no status panel (for slow consoles and macos)")

|

||||

ap.add_argument("--safe", action="store_true", help="use simple fallback approach")

|

||||

ap.add_argument("-z", action="store_true", help="ZOOMIN' (skip uploading files if they exist at the destination with the ~same last-modified timestamp, so same as yolo / turbo with date-chk but even faster)")

|

||||

|

||||

@@ -1096,7 +1133,7 @@ source file/folder selection uses rsync syntax, meaning that:

|

||||

ar = app.parse_args()

|

||||

finally:

|

||||

if EXE and not sys.argv[1:]:

|

||||

print("*** hit enter to exit ***")

|

||||

eprint("*** hit enter to exit ***")

|

||||

try:

|

||||

input()

|

||||

except:

|

||||

@@ -1129,8 +1166,18 @@ source file/folder selection uses rsync syntax, meaning that:

|

||||

with open(fn, "rb") as f:

|

||||

ar.a = f.read().decode("utf-8").strip()

|

||||

|

||||

for n in range(ar.rh):

|

||||

try:

|

||||

ar.url = undns(ar.url)

|

||||

break

|

||||

except KeyboardInterrupt:

|

||||

raise

|

||||

except:

|

||||

if n > ar.rh - 2:

|

||||

raise

|

||||

|

||||

if ar.cls:

|

||||

print("\x1b\x5b\x48\x1b\x5b\x32\x4a\x1b\x5b\x33\x4a", end="")

|

||||

eprint("\x1b\x5b\x48\x1b\x5b\x32\x4a\x1b\x5b\x33\x4a", end="")

|

||||

|

||||

ctl = Ctl(ar)

|

||||

|

||||

@@ -1,14 +1,44 @@

|

||||

#!/bin/bash

|

||||

set -e

|

||||

|

||||

cat >/dev/null <<'EOF'

|

||||

|

||||

NOTE: copyparty is now able to do this automatically;

|

||||

however you may wish to use this script instead if

|

||||

you have specific needs (or if copyparty breaks)

|

||||

|

||||

this script generates a new self-signed TLS certificate and

|

||||

replaces the default insecure one that comes with copyparty

|

||||

|

||||

as it is trivial to impersonate a copyparty server using the

|

||||

default certificate, it is highly recommended to do this

|

||||

|

||||

this will create a self-signed CA, and a Server certificate

|

||||

which gets signed by that CA -- you can run it multiple times

|

||||

with different server-FQDNs / IPs to create additional certs

|

||||

for all your different servers / (non-)copyparty services

|

||||

|

||||

EOF

|

||||

|

||||

|

||||

# ca-name and server-fqdn

|

||||

ca_name="$1"

|

||||

srv_fqdn="$2"

|

||||

|

||||

[ -z "$srv_fqdn" ] && {

|

||||

echo "need arg 1: ca name"

|

||||

echo "need arg 2: server fqdn and/or IPs, comma-separated"

|

||||

echo "optional arg 3: if set, write cert into copyparty cfg"

|

||||

[ -z "$srv_fqdn" ] && { cat <<'EOF'

|

||||

need arg 1: ca name

|

||||

need arg 2: server fqdn and/or IPs, comma-separated

|

||||

optional arg 3: if set, write cert into copyparty cfg

|

||||

|

||||

example:

|

||||

./cfssl.sh PartyCo partybox.local y

|

||||

EOF

|

||||

exit 1

|

||||

}

|

||||

|

||||

|

||||

command -v cfssljson 2>/dev/null || {

|

||||

echo please install cfssl and try again

|

||||

exit 1

|

||||

}

|

||||

|

||||

@@ -59,12 +89,14 @@ show() {

|

||||

}

|

||||

show ca.pem

|

||||

show "$srv_fqdn.pem"

|

||||

|

||||

echo

|

||||

echo "successfully generated new certificates"

|

||||

|

||||

# write cert into copyparty config

|

||||

[ -z "$3" ] || {

|

||||

mkdir -p ~/.config/copyparty

|

||||

cat "$srv_fqdn".{key,pem} ca.pem >~/.config/copyparty/cert.pem

|

||||

echo "successfully replaced copyparty certificate"

|

||||

}

|

||||

|

||||

|

||||

|

||||

@@ -138,6 +138,7 @@ in {

|

||||

"d" (delete): permanently delete files and folders

|

||||

"g" (get): download files, but cannot see folder contents

|

||||