Compare commits

50 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | ce3cab0295 | | |
| | c784e5285e | | |
| | 2bf9055cae | | |
| | 8aba5aed4f | | |
| | 0ce7cf5e10 | | |
| | 96edcbccd7 | | |
| | 4603afb6de | | |
| | 56317b00af | | |
| | cacec9c1f3 | | |
| | 44ee07f0b2 | | |
| | 6a8d5e1731 | | |
| | d9962f65b3 | | |
| | 119e88d87b | | |
| | 71d9e010d9 | | |
| | 5718caa957 | | |
| | efd8a32ed6 | | |
| | b22d700e16 | | |
| | ccdacea0c4 | | |
| | 4bdcbc1cb5 | | |
| | 833c6cf2ec | | |
| | dd6dbdd90a | | |
| | 63013cc565 | | |
| | 912402364a | | |
| | 159f51b12b | | |
| | 7678a91b0e | | |
| | b13899c63d | | |
| | 3a0d882c5e | | |
| | cb81f0ad6d | | |
| | 518bacf628 | | |
| | ca63b03e55 | | |
| | cecef88d6b | | |
| | 7ffd805a03 | | |
| | a7e2a0c981 | | |
| | 2a570bb4ca | | |
| | 5ca8f0706d | | |
| | a9b4436cdc | | |
| | 5f91999512 | | |
| | 9f000beeaf | | |
| | ff0a71f212 | | |
| | 22dfc6ec24 | | |
| | 48147c079e | | |
| | d715479ef6 | | |
| | fc8298c468 | | |
| | e94ca5dc91 | | |
| | 114b71b751 | | |
| | b2770a2087 | | |
| | cba1878bb2 | | |
| | a2e037d6af | | |
| | 65a2b6a223 | | |
| | 9ed799e803 | | |

44

README.md

@@ -47,6 +47,7 @@ turn almost any device into a file server with resumable uploads/downloads using
 * [file manager](#file-manager) - cut/paste, rename, and delete files/folders (if you have permission)
 * [shares](#shares) - share a file or folder by creating a temporary link
 * [batch rename](#batch-rename) - select some files and press `F2` to bring up the rename UI
+* [rss feeds](#rss-feeds) - monitor a folder with your RSS reader
 * [media player](#media-player) - plays almost every audio format there is
 * [audio equalizer](#audio-equalizer) - and [dynamic range compressor](https://en.wikipedia.org/wiki/Dynamic_range_compression)
 * [fix unreliable playback on android](#fix-unreliable-playback-on-android) - due to phone / app settings

@@ -219,7 +220,7 @@ also see [comparison to similar software](./docs/versus.md)
 * upload
 * ☑ basic: plain multipart, ie6 support
 * ☑ [up2k](#uploading): js, resumable, multithreaded
-* **no filesize limit!** ...unless you use Cloudflare, then it's 383.9 GiB
+* **no filesize limit!** even on Cloudflare
 * ☑ stash: simple PUT filedropper
 * ☑ filename randomizer
 * ☑ write-only folders

@@ -427,7 +428,7 @@ configuring accounts/volumes with arguments:

 permissions:
 * `r` (read): browse folder contents, download files, download as zip/tar, see filekeys/dirkeys
-* `w` (write): upload files, move files *into* this folder
+* `w` (write): upload files, move/copy files *into* this folder
 * `m` (move): move files/folders *from* this folder
 * `d` (delete): delete files/folders
 * `.` (dots): user can ask to show dotfiles in directory listings

@@ -507,7 +508,8 @@ the browser has the following hotkeys (always qwerty)
 * `ESC` close various things
 * `ctrl-K` delete selected files/folders
 * `ctrl-X` cut selected files/folders
-* `ctrl-V` paste
+* `ctrl-C` copy selected files/folders to clipboard
+* `ctrl-V` paste (move/copy)
 * `Y` download selected files
 * `F2` [rename](#batch-rename) selected file/folder
 * when a file/folder is selected (in not-grid-view):

@@ -576,6 +578,7 @@ click the `🌲` or pressing the `B` hotkey to toggle between breadcrumbs path (

|

||||

|

||||

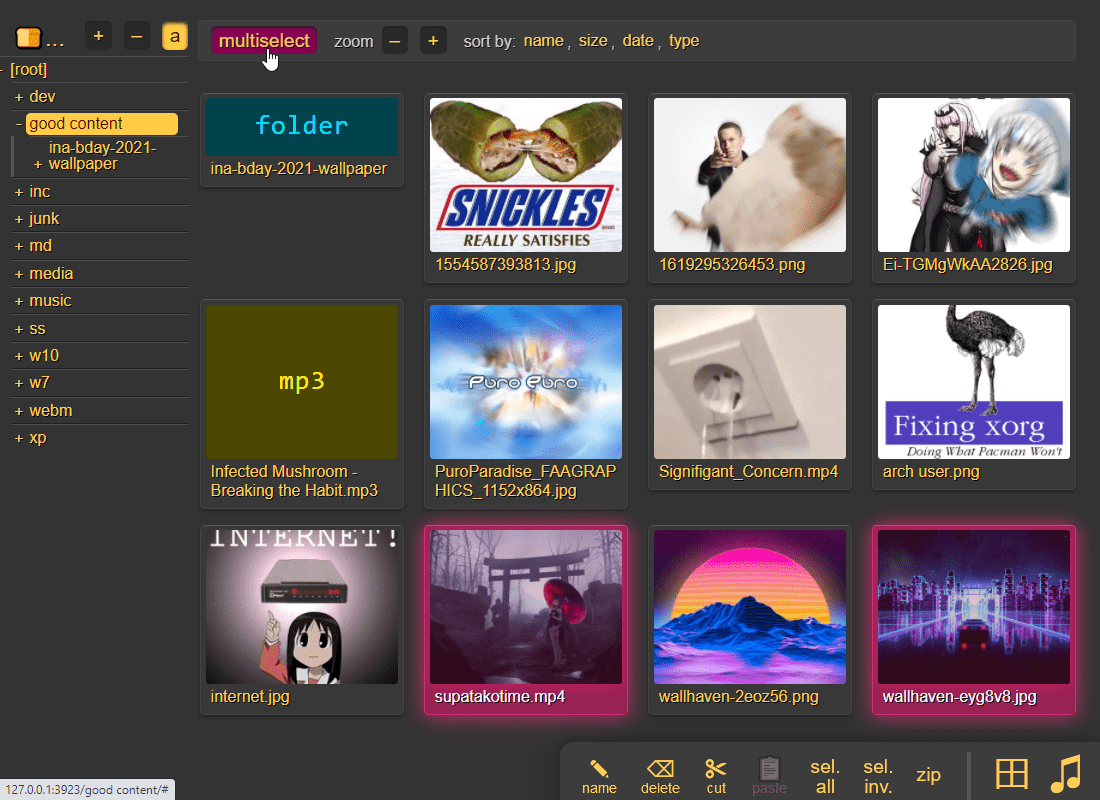

press `g` or `田` to toggle grid-view instead of the file listing and `t` toggles icons / thumbnails

|

||||

* can be made default globally with `--grid` or per-volume with volflag `grid`

|

||||

* enable by adding `?imgs` to a link, or disable with `?imgs=0`

|

||||

|

||||

|

||||

|

||||

@@ -654,7 +657,7 @@ up2k has several advantages:
 * uploads resume if you reboot your browser or pc, just upload the same files again
 * server detects any corruption; the client reuploads affected chunks
 * the client doesn't upload anything that already exists on the server
-* no filesize limit unless imposed by a proxy, for example Cloudflare, which blocks uploads over 383.9 GiB
+* no filesize limit, even when a proxy limits the request size (for example Cloudflare)
 * much higher speeds than ftp/scp/tarpipe on some internet connections (mainly american ones) thanks to parallel connections
 * the last-modified timestamp of the file is preserved

@@ -690,6 +693,8 @@ note that since up2k has to read each file twice, `[🎈] bup` can *theoreticall

 if you are resuming a massive upload and want to skip hashing the files which already finished, you can enable `turbo` in the `[⚙️] config` tab, but please read the tooltip on that button

+if the server is behind a proxy which imposes a request-size limit, you can configure up2k to sneak below the limit with server-option `--u2sz` (the default is 96 MiB to support Cloudflare)
+

 ### file-search

@@ -753,10 +758,11 @@ file selection: click somewhere on the line (not the link itself), then:
 * shift-click another line for range-select

 * cut: select some files and `ctrl-x`
+* copy: select some files and `ctrl-c`
 * paste: `ctrl-v` in another folder
 * rename: `F2`

-you can move files across browser tabs (cut in one tab, paste in another)
+you can copy/move files across browser tabs (cut/copy in one tab, paste in another)


 ## shares

@@ -843,6 +849,30 @@ or a mix of both:
 the metadata keys you can use in the format field are the ones in the file-browser table header (whatever is collected with `-mte` and `-mtp`)


+## rss feeds
+
+monitor a folder with your RSS reader , optionally recursive
+
+must be enabled per-volume with volflag `rss` or globally with `--rss`
+
+the feed includes itunes metadata for use with podcast readers such as [AntennaPod](https://antennapod.org/)
+
+a feed example: https://cd.ocv.me/a/d2/d22/?rss&fext=mp3
+
+url parameters:
+
+* `pw=hunter2` for password auth
+* `recursive` to also include subfolders
+* `title=foo` changes the feed title (default: folder name)
+* `fext=mp3,opus` only include mp3 and opus files (default: all)
+* `nf=30` only show the first 30 results (default: 250)
+* `sort=m` sort by mtime (file last-modified), newest first (default)
+* `u` = upload-time; NOTE: non-uploaded files have upload-time `0`
+* `n` = filename
+* `a` = filesize
+* uppercase = reverse-sort; `M` = oldest file first
+
+
 ## media player

 plays almost every audio format there is (if the server has FFmpeg installed for on-demand transcoding)
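Reading aid for the `rss feeds` section added in this hunk: a minimal sketch of assembling a feed URL from the documented parameters. The helper name `rss_url` is hypothetical and not part of copyparty; the folder URL in the usage line is the feed example quoted in the README text.

```python
from urllib.parse import urlencode


def rss_url(folder_url, recursive=False, **params):
    """Build a feed URL for a folder (hypothetical helper, not a copyparty API).

    `params` are the query parameters listed in the README hunk,
    for example fext="mp3,opus", nf=30, sort="M", title="foo".
    """
    query = "rss"
    if recursive:
        query += "&recursive"
    if params:
        # keep commas readable; the README writes fext=mp3,opus
        query += "&" + urlencode(params, safe=",")
    return folder_url.rstrip("?") + "?" + query


print(rss_url("https://cd.ocv.me/a/d2/d22/", fext="mp3"))
```

The `pw` parameter can be passed the same way, though a password in a URL tends to end up in logs and reader exports, so that is a judgment call for whoever configures the feed.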

@@ -1657,6 +1687,7 @@ scrape_configs:
 currently the following metrics are available,
 * `cpp_uptime_seconds` time since last copyparty restart
 * `cpp_boot_unixtime_seconds` same but as an absolute timestamp
+* `cpp_active_dl` number of active downloads
 * `cpp_http_conns` number of open http(s) connections
 * `cpp_http_reqs` number of http(s) requests handled
 * `cpp_sus_reqs` number of 403/422/malicious requests

@@ -1906,6 +1937,9 @@ quick summary of more eccentric web-browsers trying to view a directory index:
 | **ie4** and **netscape** 4.0 | can browse, upload with `?b=u`, auth with `&pw=wark` |
 | **ncsa mosaic** 2.7 | does not get a pass, [pic1](https://user-images.githubusercontent.com/241032/174189227-ae816026-cf6f-4be5-a26e-1b3b072c1b2f.png) - [pic2](https://user-images.githubusercontent.com/241032/174189225-5651c059-5152-46e9-ac26-7e98e497901b.png) |
 | **SerenityOS** (7e98457) | hits a page fault, works with `?b=u`, file upload not-impl |
+| **nintendo 3ds** | can browse, upload, view thumbnails (thx bnjmn) |
+
+<p align="center"><img src="https://github.com/user-attachments/assets/88deab3d-6cad-4017-8841-2f041472b853" /></p>


 # client examples

@@ -2,7 +2,7 @@ standalone programs which are executed by copyparty when an event happens (uploa

 these programs either take zero arguments, or a filepath (the affected file), or a json message with filepath + additional info

-run copyparty with `--help-hooks` for usage details / hook type explanations (xm/xbu/xau/xiu/xbr/xar/xbd/xad/xban)
+run copyparty with `--help-hooks` for usage details / hook type explanations (xm/xbu/xau/xiu/xbc/xac/xbr/xar/xbd/xad/xban)

 > **note:** in addition to event hooks (the stuff described here), copyparty has another api to run your programs/scripts while providing way more information such as audio tags / video codecs / etc and optionally daisychaining data between scripts in a processing pipeline; if that's what you want then see [mtp plugins](../mtag/) instead

@@ -393,7 +393,8 @@ class Gateway(object):
         if r.status != 200:
             self.closeconn()
             info("http error %s reading dir %r", r.status, web_path)
-            raise FuseOSError(errno.ENOENT)
+            err = errno.ENOENT if r.status == 404 else errno.EIO
+            raise FuseOSError(err)

         ctype = r.getheader("Content-Type", "")
         if ctype == "application/json":
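The status-to-errno change in this hunk can be read as a small mapping: only a 404 means the directory is missing, and every other failure is surfaced as an I/O error, so a temporary server problem no longer looks like a deleted folder. A minimal sketch, with the function name `errno_for_status` being an illustrative assumption rather than something in the patched file:

```python
import errno


def errno_for_status(status):
    """Map a non-200 HTTP status to an errno value, mirroring the changed lines."""
    if status == 404:
        return errno.ENOENT  # the directory really does not exist
    return errno.EIO  # anything else is reported as a generic I/O error


for code in (404, 403, 500):
    print(code, errno.errorcode[errno_for_status(code)])
```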

@@ -1128,7 +1129,7 @@ def main():

     # dircache is always a boost,
     # only want to disable it for tests etc,
-    cdn = 9 # max num dirs; 0=disable
+    cdn = 24 # max num dirs; keep larger than max dir depth; 0=disable
     cds = 1 # numsec until an entry goes stale

     where = "local directory"

161

bin/u2c.py

@@ -1,8 +1,8 @@
 #!/usr/bin/env python3
 from __future__ import print_function, unicode_literals

-S_VERSION = "2.2"
-S_BUILD_DT = "2024-10-13"
+S_VERSION = "2.6"
+S_BUILD_DT = "2024-11-10"

 """
 u2c.py: upload to copyparty

@@ -62,6 +62,9 @@ else:

     unicode = str

+
+WTF8 = "replace" if PY2 else "surrogateescape"
+
 VT100 = platform.system() != "Windows"
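The new `WTF8` constant selects the `surrogateescape` error handler on Python 3. A short illustration of why that matters for filenames which are not valid UTF-8; the sample bytes are made up for the example:

```python
raw = b"caf\xe9.txt"  # latin-1 bytes, not valid utf-8

lossy = raw.decode("utf-8", "replace")          # bad byte becomes U+FFFD
exact = raw.decode("utf-8", "surrogateescape")  # bad byte becomes a lone surrogate

print(repr(lossy))
print(exact.encode("utf-8", "surrogateescape") == raw)
```

With `replace` the original byte is gone for good, so the name can no longer be mapped back to the file on disk; with `surrogateescape` the decode/encode round trip returns the original bytes.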

@@ -151,6 +154,7 @@ class HCli(object):
         self.tls = tls
         self.verify = ar.te or not ar.td
         self.conns = []
+        self.hconns = []
         if tls:
             import ssl

@@ -170,7 +174,7 @@ class HCli(object):
             "User-Agent": "u2c/%s" % (S_VERSION,),
         }

-    def _connect(self):
+    def _connect(self, timeout):
         args = {}
         if PY37:
             args["blocksize"] = 1048576

@@ -182,9 +186,11 @@ class HCli(object):
             if self.ctx:
                 args = {"context": self.ctx}

-        return C(self.addr, self.port, timeout=999, **args)
+        return C(self.addr, self.port, timeout=timeout, **args)

     def req(self, meth, vpath, hdrs, body=None, ctype=None):
+        now = time.time()
+
         hdrs.update(self.base_hdrs)
         if self.ar.a:
             hdrs["PW"] = self.ar.a

@@ -195,7 +201,11 @@ class HCli(object):
             0 if not body else body.len if hasattr(body, "len") else len(body)
         )

-        c = self.conns.pop() if self.conns else self._connect()
+        # large timeout for handshakes (safededup)
+        conns = self.hconns if ctype == MJ else self.conns
+        while conns and self.ar.cxp < now - conns[0][0]:
+            conns.pop(0)[1].close()
+        c = conns.pop()[1] if conns else self._connect(999 if ctype == MJ else 128)
         try:
             c.request(meth, vpath, body, hdrs)
             if PY27:

@@ -204,8 +214,15 @@ class HCli(object):
                 rsp = c.getresponse()

             data = rsp.read()
-            self.conns.append(c)
+            conns.append((time.time(), c))
             return rsp.status, data.decode("utf-8")
+        except http_client.BadStatusLine:
+            if self.ar.cxp > 4:
+                t = "\nWARNING: --cxp probably too high; reducing from %d to 4"
+                print(t % (self.ar.cxp,))
+                self.ar.cxp = 4
+            c.close()
+            raise
         except:
             c.close()
             raise
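The `HCli.req` hunks above switch from a plain list of connections to `(timestamp, connection)` pairs, so that connections idle for longer than `--cxp` seconds are closed instead of reused. A stripped-down sketch of that idea; the class `ConnPool`, its constructor arguments and the injectable clock are assumptions made for illustration and are not how u2c structures its code:

```python
import time


class ConnPool(object):
    """Idle-connection pool with expiry (illustrative; not the u2c classes)."""

    def __init__(self, max_idle, connect, clock=time.time):
        self.max_idle = max_idle  # seconds; plays the role of --cxp
        self.connect = connect    # factory, called when nothing is reusable
        self.clock = clock
        self.idle = []            # (time_returned, conn) pairs, oldest first

    def get(self):
        now = self.clock()
        # drop connections which have been idle for too long
        while self.idle and self.max_idle < now - self.idle[0][0]:
            self.idle.pop(0)[1].close()
        # reuse the most recently returned connection, if any
        return self.idle.pop()[1] if self.idle else self.connect()

    def put(self, conn):
        self.idle.append((self.clock(), conn))
```

Popping expired entries from the front and reusable ones from the back works because entries are appended in the order they were returned, which keeps the list sorted by age.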

@@ -228,7 +245,7 @@ class File(object):
         self.lmod = lmod # type: float

         self.abs = os.path.join(top, rel) # type: bytes
-        self.name = self.rel.split(b"/")[-1].decode("utf-8", "replace") # type: str
+        self.name = self.rel.split(b"/")[-1].decode("utf-8", WTF8) # type: str

         # set by get_hashlist
         self.cids = [] # type: list[tuple[str, int, int]] # [ hash, ofs, sz ]

@@ -267,10 +284,41 @@ class FileSlice(object):
                 raise Exception(9)
             tlen += clen

-        self.len = tlen
+        self.len = self.tlen = tlen
         self.cdr = self.car + self.len
         self.ofs = 0 # type: int
-        self.f = open(file.abs, "rb", 512 * 1024)
+
+        self.f = None
+        self.seek = self._seek0
+        self.read = self._read0
+
+    def subchunk(self, maxsz, nth):
+        if self.tlen <= maxsz:
+            return -1
+
+        if not nth:
+            self.car0 = self.car
+            self.cdr0 = self.cdr
+
+        self.car = self.car0 + maxsz * nth
+        if self.car >= self.cdr0:
+            return -2
+
+        self.cdr = self.car + min(self.cdr0 - self.car, maxsz)
+        self.len = self.cdr - self.car
+        self.seek(0)
+        return nth
+
+    def unsub(self):
+        self.car = self.car0
+        self.cdr = self.cdr0
+        self.len = self.tlen
+
+    def _open(self):
+        self.seek = self._seek
+        self.read = self._read
+
+        self.f = open(self.file.abs, "rb", 512 * 1024)
         self.f.seek(self.car)

     # https://stackoverflow.com/questions/4359495/what-is-exactly-a-file-like-object-in-python
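The arithmetic in `subchunk` walks a byte range `[car, cdr)` in windows of at most `maxsz` bytes. A simplified sketch of the same windowing as a generator; `subchunks` is a hypothetical name, and unlike the method in the diff it does not mutate any object or use the `-1` / `-2` return codes:

```python
def subchunks(car, cdr, maxsz):
    """Yield (start, end) byte ranges covering [car, cdr), each at most maxsz long."""
    start = car
    while start < cdr:
        end = start + min(cdr - start, maxsz)
        yield start, end
        start = end


for rng in subchunks(100, 350, 100):
    print(rng)
```

In the diff, the offset of each window relative to the start of the range (`maxsz * nsub`) is what gets sent in the `X-Up2k-Subc` header.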

@@ -282,10 +330,15 @@ class FileSlice(object):
     except:
         pass # py27 probably

+    def close(self, *a, **ka):
+        return # until _open
+
     def tell(self):
         return self.ofs

-    def seek(self, ofs, wh=0):
+    def _seek(self, ofs, wh=0):
+        assert self.f # !rm
+
         if wh == 1:
             ofs = self.ofs + ofs
         elif wh == 2:

@@ -299,12 +352,22 @@ class FileSlice(object):
         self.ofs = ofs
         self.f.seek(self.car + ofs)

-    def read(self, sz):
+    def _read(self, sz):
+        assert self.f # !rm
+
         sz = min(sz, self.len - self.ofs)
         ret = self.f.read(sz)
         self.ofs += len(ret)
         return ret

+    def _seek0(self, ofs, wh=0):
+        self._open()
+        return self.seek(ofs, wh)
+
+    def _read0(self, sz):
+        self._open()
+        return self.read(sz)
+

 class MTHash(object):
     def __init__(self, cores):

@@ -557,13 +620,17 @@ def walkdir(err, top, excl, seen):
     for ap, inf in sorted(statdir(err, top)):
         if excl.match(ap):
             continue
-        yield ap, inf
         if stat.S_ISDIR(inf.st_mode):
+            yield ap, inf
             try:
                 for x in walkdir(err, ap, excl, seen):
                     yield x
             except Exception as ex:
                 err.append((ap, str(ex)))
+        elif stat.S_ISREG(inf.st_mode):
+            yield ap, inf
+        else:
+            err.append((ap, "irregular filetype 0%o" % (inf.st_mode,)))


 def walkdirs(err, tops, excl):

@@ -609,11 +676,12 @@ def walkdirs(err, tops, excl):

 # mostly from copyparty/util.py
 def quotep(btxt):
+    # type: (bytes) -> bytes
     quot1 = quote(btxt, safe=b"/")
     if not PY2:
         quot1 = quot1.encode("ascii")

-    return quot1.replace(b" ", b"+") # type: ignore
+    return quot1.replace(b" ", b"%20") # type: ignore


 # from copyparty/util.py
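A likely reason for the `+` to `%20` change in `quotep` (the diff itself does not state the motivation, so treat this as an assumption): in the path part of a URL a `+` is a literal plus sign, and only form-style decoding turns it into a space. The standard-library behaviour is easy to check; the filename below is made up:

```python
from urllib.parse import quote, unquote, unquote_plus

path = "my file+v2.txt"

print(quote(path))              # space and plus are both percent-encoded
print(unquote("my+file"))       # path-style decoding keeps the plus
print(unquote_plus("my+file"))  # form-style decoding turns it into a space
```

Encoding spaces as `%20` therefore round-trips regardless of which decoder the receiving side applies.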

@@ -641,7 +709,7 @@ def up2k_chunksize(filesize):
     while True:
         for mul in [1, 2]:
             nchunks = math.ceil(filesize * 1.0 / chunksize)
-            if nchunks <= 256 or (chunksize >= 32 * 1024 * 1024 and nchunks < 4096):
+            if nchunks <= 256 or (chunksize >= 32 * 1024 * 1024 and nchunks <= 4096):
                 return chunksize

             chunksize += stepsize
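To see what the `<` to `<=` change does at the boundary, here is a self-contained sketch of the search loop with a switch for the comparison. The hunk does not show how `chunksize` and `stepsize` are initialised or how `stepsize` grows, so the starting values (1 MiB, 512 KiB) and the `stepsize *= mul` line are assumptions; the function name `chunksize_for` is also made up.

```python
import math

MiB = 1024 * 1024


def chunksize_for(filesize, inclusive=True):
    """Sketch of the chunk-size search; `inclusive` picks `<=` or `<` for the 4096 limit.

    Assumed (not shown in the hunk): start at 1 MiB chunks with a 512 KiB
    step, and double the step on every second iteration.
    """
    chunksize = 1 * MiB
    stepsize = 512 * 1024
    while True:
        for mul in [1, 2]:
            nchunks = math.ceil(filesize * 1.0 / chunksize)
            many_ok = nchunks <= 4096 if inclusive else nchunks < 4096
            if nchunks <= 256 or (chunksize >= 32 * MiB and many_ok):
                return chunksize

            chunksize += stepsize
            stepsize *= mul


size = 4096 * 32 * MiB  # a file of exactly 4096 chunks at 32 MiB each
print(chunksize_for(size, inclusive=True) // MiB)
print(chunksize_for(size, inclusive=False) // MiB)
```

Under these assumptions, a file that is exactly 4096 chunks of 32 MiB is accepted at 32 MiB with `<=`, while the old `<` comparison pushes it to the next candidate size.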

@@ -720,7 +788,7 @@ def handshake(ar, file, search):
         url = file.url
     else:
         if b"/" in file.rel:
-            url = quotep(file.rel.rsplit(b"/", 1)[0]).decode("utf-8", "replace")
+            url = quotep(file.rel.rsplit(b"/", 1)[0]).decode("utf-8")
         else:
             url = ""
         url = ar.vtop + url

@@ -766,15 +834,15 @@ def handshake(ar, file, search):
     if search:
         return r["hits"], False

-    file.url = r["purl"]
+    file.url = quotep(r["purl"].encode("utf-8", WTF8)).decode("utf-8")
     file.name = r["name"]
     file.wark = r["wark"]

     return r["hash"], r["sprs"]


-def upload(fsl, stats):
-    # type: (FileSlice, str) -> None
+def upload(fsl, stats, maxsz):
+    # type: (FileSlice, str, int) -> None
     """upload a range of file data, defined by one or more `cid` (chunk-hash)"""

     ctxt = fsl.cids[0]

@@ -792,21 +860,34 @@ def upload(fsl, stats):
     if stats:
         headers["X-Up2k-Stat"] = stats

+    nsub = 0
     try:
-        sc, txt = web.req("POST", fsl.file.url, headers, fsl, MO)
+        while nsub != -1:
+            nsub = fsl.subchunk(maxsz, nsub)
+            if nsub == -2:
+                return
+            if nsub >= 0:
+                headers["X-Up2k-Subc"] = str(maxsz * nsub)
+                headers.pop(CLEN, None)
+                nsub += 1

-        if sc == 400:
-            if (
-                "already being written" in txt
-                or "already got that" in txt
-                or "only sibling chunks" in txt
-            ):
-                fsl.file.nojoin = 1
+            sc, txt = web.req("POST", fsl.file.url, headers, fsl, MO)

-        if sc >= 400:
-            raise Exception("http %s: %s" % (sc, txt))
+            if sc == 400:
+                if (
+                    "already being written" in txt
+                    or "already got that" in txt
+                    or "only sibling chunks" in txt
+                ):
+                    fsl.file.nojoin = 1
+
+            if sc >= 400:
+                raise Exception("http %s: %s" % (sc, txt))
     finally:
-        fsl.f.close()
+        if fsl.f:
+            fsl.f.close()
+        if nsub != -1:
+            fsl.unsub()


 class Ctl(object):

@@ -938,7 +1019,7 @@ class Ctl(object):
 print(" %d up %s" % (ncs - nc, cid))
 stats = "%d/0/0/%d" % (nf, self.nfiles - nf)
 fslice = FileSlice(file, [cid])
-upload(fslice, stats)
+upload(fslice, stats, self.ar.szm)

 print(" ok!")
 if file.recheck:

@@ -1057,7 +1138,7 @@ class Ctl(object):
 print(" ls ~{0}".format(srd))
 zt = (
 self.ar.vtop,
-quotep(rd.replace(b"\\", b"/")).decode("utf-8", "replace"),
+quotep(rd.replace(b"\\", b"/")).decode("utf-8"),
 )
 sc, txt = web.req("GET", "%s%s?ls&lt&dots" % zt, {})
 if sc >= 400:

@@ -1066,13 +1147,16 @@ class Ctl(object):
 j = json.loads(txt)
 for f in j["dirs"] + j["files"]:
 rfn = f["href"].split("?")[0].rstrip("/")
-ls[unquote(rfn.encode("utf-8", "replace"))] = f
+ls[unquote(rfn.encode("utf-8", WTF8))] = f
 except Exception as ex:
 print(" mkdir ~{0} ({1})".format(srd, ex))

 if self.ar.drd:
 dp = os.path.join(top, rd)
-lnodes = set(os.listdir(dp))
+try:
+lnodes = set(os.listdir(dp))
+except:
+lnodes = list(ls) # fs eio; don't delete
 if ptn:
 zs = dp.replace(sep, b"/").rstrip(b"/") + b"/"
 zls = [zs + x for x in lnodes]

@@ -1080,7 +1164,7 @@ class Ctl(object):
 lnodes = [x.split(b"/")[-1] for x in zls]
 bnames = [x for x in ls if x not in lnodes and x != b".hist"]
 vpath = self.ar.url.split("://")[-1].split("/", 1)[-1]
-names = [x.decode("utf-8", "replace") for x in bnames]
+names = [x.decode("utf-8", WTF8) for x in bnames]
 locs = [vpath + srd + "/" + x for x in names]
 while locs:
 req = locs

@@ -1286,7 +1370,7 @@ class Ctl(object):
 self._check_if_done()
 continue

-njoin = (self.ar.sz * 1024 * 1024) // chunksz
+njoin = self.ar.sz // chunksz
 cs = hs[:]
 while cs:
 fsl = FileSlice(file, cs[:1])

@@ -1338,7 +1422,7 @@ class Ctl(object):
 )

 try:
-upload(fsl, stats)
+upload(fsl, stats, self.ar.szm)
 except Exception as ex:
 t = "upload failed, retrying: %s #%s+%d (%s)\n"
 eprint(t % (file.name, cids[0][:8], len(cids) - 1, ex))

@@ -1427,8 +1511,10 @@ source file/folder selection uses rsync syntax, meaning that:
     ap.add_argument("-j", type=int, metavar="CONNS", default=2, help="parallel connections")
     ap.add_argument("-J", type=int, metavar="CORES", default=hcores, help="num cpu-cores to use for hashing; set 0 or 1 for single-core hashing")
     ap.add_argument("--sz", type=int, metavar="MiB", default=64, help="try to make each POST this big")
+    ap.add_argument("--szm", type=int, metavar="MiB", default=96, help="max size of each POST (default is cloudflare max)")
     ap.add_argument("-nh", action="store_true", help="disable hashing while uploading")
     ap.add_argument("-ns", action="store_true", help="no status panel (for slow consoles and macos)")
+    ap.add_argument("--cxp", type=float, metavar="SEC", default=57, help="assume http connections expired after SEConds")
     ap.add_argument("--cd", type=float, metavar="SEC", default=5, help="delay before reattempting a failed handshake/upload")
     ap.add_argument("--safe", action="store_true", help="use simple fallback approach")
     ap.add_argument("-z", action="store_true", help="ZOOMIN' (skip uploading files if they exist at the destination with the ~same last-modified timestamp, so same as yolo / turbo with date-chk but even faster)")

@@ -1454,6 +1540,9 @@ source file/folder selection uses rsync syntax, meaning that:
     if ar.dr:
         ar.ow = True

+    ar.sz *= 1024 * 1024
+    ar.szm *= 1024 * 1024
+
     ar.x = "|".join(ar.x or [])

     setattr(ar, "wlist", ar.url == "-")
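The two u2c hunks above add `--szm` and move the MiB-to-bytes conversion to a single place right after argument parsing, which is why `njoin` elsewhere in the diff drops its own `* 1024 * 1024`. A minimal sketch of that pattern; only `--sz` and `--szm` with their defaults are taken from the diff, and the wrapper function `parse` is an assumption for the sake of a runnable example:

```python
import argparse


def parse(argv):
    ap = argparse.ArgumentParser()
    ap.add_argument("--sz", type=int, metavar="MiB", default=64)
    ap.add_argument("--szm", type=int, metavar="MiB", default=96)
    ar = ap.parse_args(argv)

    # convert once, right after parsing; everything downstream sees bytes
    ar.sz *= 1024 * 1024
    ar.szm *= 1024 * 1024
    return ar


ar = parse(["--sz", "8"])
print(ar.sz, ar.szm)
```

Keeping the unit conversion next to the parser avoids the situation where one call site multiplies and another forgets to.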

@@ -1,6 +1,6 @@
 # Maintainer: icxes <dev.null@need.moe>
 pkgname=copyparty
-pkgver="1.15.6"
+pkgver="1.15.10"
 pkgrel=1
 pkgdesc="File server with accelerated resumable uploads, dedup, WebDAV, FTP, TFTP, zeroconf, media indexer, thumbnails++"
 arch=("any")

@@ -21,7 +21,7 @@ optdepends=("ffmpeg: thumbnails for videos, images (slower) and audio, music tag
 )
 source=("https://github.com/9001/${pkgname}/releases/download/v${pkgver}/${pkgname}-${pkgver}.tar.gz")
 backup=("etc/${pkgname}.d/init" )
-sha256sums=("abb5c1705cd80ea553d647d4a7b35b5e1dac5a517200551bcca79aa199f30875")
+sha256sums=("070d5bdebe57c247427ceea6b9029f93097e4b8996cabfc59d0ec248d063b993")

 build() {
 cd "${srcdir}/${pkgname}-${pkgver}"

@@ -1,5 +1,5 @@
 {
-"url": "https://github.com/9001/copyparty/releases/download/v1.15.6/copyparty-sfx.py",
-"version": "1.15.6",
-"hash": "sha256-0ikt3jv9/XT/w/ew+R4rZxF6s7LwNhUvUYYIZtkQqbk="
+"url": "https://github.com/9001/copyparty/releases/download/v1.15.10/copyparty-sfx.py",
+"version": "1.15.10",
+"hash": "sha256-9InxXpCfgnsvcNdRwWhQ74TpI24Osdr0lN0IwIULL3I="
 }

@@ -80,6 +80,7 @@ web/deps/prismd.css
 web/deps/scp.woff2
 web/deps/sha512.ac.js
 web/deps/sha512.hw.js
+web/iiam.gif
 web/md.css
 web/md.html
 web/md.js

@@ -50,6 +50,8 @@ from .util import (
     PARTFTPY_VER,
     PY_DESC,
     PYFTPD_VER,
+    RAM_AVAIL,
+    RAM_TOTAL,
     SQLITE_VER,
     UNPLICATIONS,
     Daemon,

@@ -684,6 +686,8 @@ def get_sects():
 \033[36mxbu\033[35m executes CMD before a file upload starts
 \033[36mxau\033[35m executes CMD after a file upload finishes
 \033[36mxiu\033[35m executes CMD after all uploads finish and volume is idle
+\033[36mxbc\033[35m executes CMD before a file copy
+\033[36mxac\033[35m executes CMD after a file copy
 \033[36mxbr\033[35m executes CMD before a file rename/move
 \033[36mxar\033[35m executes CMD after a file rename/move
 \033[36mxbd\033[35m executes CMD before a file delete

@@ -1017,7 +1021,7 @@ def add_upload(ap):
     ap2.add_argument("--sparse", metavar="MiB", type=int, default=4, help="windows-only: minimum size of incoming uploads through up2k before they are made into sparse files")
     ap2.add_argument("--turbo", metavar="LVL", type=int, default=0, help="configure turbo-mode in up2k client; [\033[32m-1\033[0m] = forbidden/always-off, [\033[32m0\033[0m] = default-off and warn if enabled, [\033[32m1\033[0m] = default-off, [\033[32m2\033[0m] = on, [\033[32m3\033[0m] = on and disable datecheck")
     ap2.add_argument("--u2j", metavar="JOBS", type=int, default=2, help="web-client: number of file chunks to upload in parallel; 1 or 2 is good for low-latency (same-country) connections, 4-8 for android clients, 16 for cross-atlantic (max=64)")
-    ap2.add_argument("--u2sz", metavar="N,N,N", type=u, default="1,64,96", help="web-client: default upload chunksize (MiB); sets \033[33mmin,default,max\033[0m in the settings gui. Each HTTP POST will aim for this size. Cloudflare max is 96. Big values are good for cross-atlantic but may increase HDD fragmentation on some FS. Disable this optimization with [\033[32m1,1,1\033[0m]")
+    ap2.add_argument("--u2sz", metavar="N,N,N", type=u, default="1,64,96", help="web-client: default upload chunksize (MiB); sets \033[33mmin,default,max\033[0m in the settings gui. Each HTTP POST will aim for \033[33mdefault\033[0m, and never exceed \033[33mmax\033[0m. Cloudflare max is 96. Big values are good for cross-atlantic but may increase HDD fragmentation on some FS. Disable this optimization with [\033[32m1,1,1\033[0m]")
     ap2.add_argument("--u2sort", metavar="TXT", type=u, default="s", help="upload order; [\033[32ms\033[0m]=smallest-first, [\033[32mn\033[0m]=alphabetical, [\033[32mfs\033[0m]=force-s, [\033[32mfn\033[0m]=force-n -- alphabetical is a bit slower on fiber/LAN but makes it easier to eyeball if everything went fine")
     ap2.add_argument("--write-uplog", action="store_true", help="write POST reports to textfiles in working-directory")

@@ -1037,7 +1041,7 @@ def add_network(ap):
     else:
         ap2.add_argument("--freebind", action="store_true", help="allow listening on IPs which do not yet exist, for example if the network interfaces haven't finished going up. Only makes sense for IPs other than '0.0.0.0', '127.0.0.1', '::', and '::1'. May require running as root (unless net.ipv6.ip_nonlocal_bind)")
     ap2.add_argument("--s-thead", metavar="SEC", type=int, default=120, help="socket timeout (read request header)")
-    ap2.add_argument("--s-tbody", metavar="SEC", type=float, default=186.0, help="socket timeout (read/write request/response bodies). Use 60 on fast servers (default is extremely safe). Disable with 0 if reverse-proxied for a 2%% speed boost")
+    ap2.add_argument("--s-tbody", metavar="SEC", type=float, default=128.0, help="socket timeout (read/write request/response bodies). Use 60 on fast servers (default is extremely safe). Disable with 0 if reverse-proxied for a 2%% speed boost")
     ap2.add_argument("--s-rd-sz", metavar="B", type=int, default=256*1024, help="socket read size in bytes (indirectly affects filesystem writes; recommendation: keep equal-to or lower-than \033[33m--iobuf\033[0m)")
     ap2.add_argument("--s-wr-sz", metavar="B", type=int, default=256*1024, help="socket write size in bytes")
     ap2.add_argument("--s-wr-slp", metavar="SEC", type=float, default=0.0, help="debug: socket write delay in seconds")

@@ -1201,6 +1205,8 @@ def add_hooks(ap):
     ap2.add_argument("--xbu", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m before a file upload starts")
     ap2.add_argument("--xau", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m after a file upload finishes")
     ap2.add_argument("--xiu", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m after all uploads finish and volume is idle")
+    ap2.add_argument("--xbc", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m before a file copy")
+    ap2.add_argument("--xac", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m after a file copy")
     ap2.add_argument("--xbr", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m before a file move/rename")
     ap2.add_argument("--xar", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m after a file move/rename")
     ap2.add_argument("--xbd", metavar="CMD", type=u, action="append", help="execute \033[33mCMD\033[0m before a file delete")

@@ -1233,6 +1239,7 @@ def add_optouts(ap):
     ap2.add_argument("--no-dav", action="store_true", help="disable webdav support")
     ap2.add_argument("--no-del", action="store_true", help="disable delete operations")
     ap2.add_argument("--no-mv", action="store_true", help="disable move/rename operations")
+    ap2.add_argument("--no-cp", action="store_true", help="disable copy operations")
     ap2.add_argument("-nth", action="store_true", help="no title hostname; don't show \033[33m--name\033[0m in <title>")
     ap2.add_argument("-nih", action="store_true", help="no info hostname -- don't show in UI")
     ap2.add_argument("-nid", action="store_true", help="no info disk-usage -- don't show in UI")

@@ -1307,6 +1314,7 @@ def add_logging(ap):
     ap2.add_argument("--log-conn", action="store_true", help="debug: print tcp-server msgs")
     ap2.add_argument("--log-htp", action="store_true", help="debug: print http-server threadpool scaling")
     ap2.add_argument("--ihead", metavar="HEADER", type=u, action='append', help="print request \033[33mHEADER\033[0m; [\033[32m*\033[0m]=all")
+    ap2.add_argument("--ohead", metavar="HEADER", type=u, action='append', help="print response \033[33mHEADER\033[0m; [\033[32m*\033[0m]=all")
     ap2.add_argument("--lf-url", metavar="RE", type=u, default=r"^/\.cpr/|\?th=[wj]$|/\.(_|ql_|DS_Store$|localized$)", help="dont log URLs matching regex \033[33mRE\033[0m")

@@ -1315,9 +1323,12 @@ def add_admin(ap):

|

||||

ap2.add_argument("--no-reload", action="store_true", help="disable ?reload=cfg (reload users/volumes/volflags from config file)")

|

||||

ap2.add_argument("--no-rescan", action="store_true", help="disable ?scan (volume reindexing)")

|

||||

ap2.add_argument("--no-stack", action="store_true", help="disable ?stack (list all stacks)")

|

||||

ap2.add_argument("--dl-list", metavar="LVL", type=int, default=2, help="who can see active downloads in the controlpanel? [\033[32m0\033[0m]=nobody, [\033[32m1\033[0m]=admins, [\033[32m2\033[0m]=everyone")

|

||||

|

||||

|

||||

def add_thumbnail(ap):

|

||||

th_ram = (RAM_AVAIL or RAM_TOTAL or 9) * 0.6

|

||||

th_ram = int(max(min(th_ram, 6), 1) * 10) / 10

|

||||

ap2 = ap.add_argument_group('thumbnail options')

|

||||

ap2.add_argument("--no-thumb", action="store_true", help="disable all thumbnails (volflag=dthumb)")

|

||||

ap2.add_argument("--no-vthumb", action="store_true", help="disable video thumbnails (volflag=dvthumb)")

|

||||

@@ -1325,7 +1336,7 @@ def add_thumbnail(ap):
     ap2.add_argument("--th-size", metavar="WxH", default="320x256", help="thumbnail res (volflag=thsize)")
     ap2.add_argument("--th-mt", metavar="CORES", type=int, default=CORES, help="num cpu cores to use for generating thumbnails")
     ap2.add_argument("--th-convt", metavar="SEC", type=float, default=60.0, help="conversion timeout in seconds (volflag=convt)")
-    ap2.add_argument("--th-ram-max", metavar="GB", type=float, default=6.0, help="max memory usage (GiB) permitted by thumbnailer; not very accurate")
+    ap2.add_argument("--th-ram-max", metavar="GB", type=float, default=th_ram, help="max memory usage (GiB) permitted by thumbnailer; not very accurate")
     ap2.add_argument("--th-crop", metavar="TXT", type=u, default="y", help="crop thumbnails to 4:3 or keep dynamic height; client can override in UI unless force. [\033[32my\033[0m]=crop, [\033[32mn\033[0m]=nocrop, [\033[32mfy\033[0m]=force-y, [\033[32mfn\033[0m]=force-n (volflag=crop)")
     ap2.add_argument("--th-x3", metavar="TXT", type=u, default="n", help="show thumbs at 3x resolution; client can override in UI unless force. [\033[32my\033[0m]=yes, [\033[32mn\033[0m]=no, [\033[32mfy\033[0m]=force-yes, [\033[32mfn\033[0m]=force-no (volflag=th3x)")
     ap2.add_argument("--th-dec", metavar="LIBS", default="vips,pil,ff", help="image decoders, in order of preference")

|

||||
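The new `--th-ram-max` default is derived from the machine's memory instead of being fixed at 6 GiB. A minimal sketch of the clamp-and-truncate arithmetic from `add_thumbnail`, assuming `RAM_AVAIL` and `RAM_TOTAL` are numbers in GiB (or falsy when unknown); the function name `default_th_ram` is hypothetical and not part of the diff:

```python
def default_th_ram(ram_avail=None, ram_total=None):
    """Return 60% of the known RAM, clamped to [1, 6] GiB, truncated to one decimal."""
    # same fallback as the hunk: assume 9 GiB when neither value is known
    th_ram = (ram_avail or ram_total or 9) * 0.6
    return int(max(min(th_ram, 6), 1) * 10) / 10


if __name__ == "__main__":
    for avail in (0.5, 5, 64):
        print(avail, "GiB available ->", default_th_ram(avail), "GiB cap")
```

Small machines end up at the 1 GiB floor and large ones at the previous 6 GiB ceiling; everything in between scales with memory.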

@@ -1357,6 +1368,14 @@ def add_transcoding(ap):
     ap2.add_argument("--ac-maxage", metavar="SEC", type=int, default=86400, help="delete cached transcode output after \033[33mSEC\033[0m seconds")
 
 
+def add_rss(ap):
+    ap2 = ap.add_argument_group('RSS options')
+    ap2.add_argument("--rss", action="store_true", help="enable RSS output (experimental)")
+    ap2.add_argument("--rss-nf", metavar="HITS", type=int, default=250, help="default number of files to return (url-param 'nf')")
+    ap2.add_argument("--rss-fext", metavar="E,E", type=u, default="", help="default list of file extensions to include (url-param 'fext'); blank=all")
+    ap2.add_argument("--rss-sort", metavar="ORD", type=u, default="m", help="default sort order (url-param 'sort'); [\033[32mm\033[0m]=last-modified [\033[32mu\033[0m]=upload-time [\033[32mn\033[0m]=filename [\033[32ms\033[0m]=filesize; Uppercase=oldest-first. Note that upload-time is 0 for non-uploaded files")
+
+
 def add_db_general(ap, hcores):
     noidx = APPLESAN_TXT if MACOS else ""
     ap2 = ap.add_argument_group('general db options')

|

||||
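The `--rss-sort` help text describes a one-letter code whose case selects the direction. The sketch below shows one way such a code could be decoded using the `RSS_SORT` mapping that appears later in this diff; `parse_rss_sort` is a hypothetical helper, and the real handler is not part of the lines shown here:

```python
RSS_SORT = {"m": "mt", "u": "at", "n": "fn", "s": "sz"}


def parse_rss_sort(code="m"):
    """Map a one-letter sort code to (column, oldest_first)."""
    key = code[:1]
    column = RSS_SORT.get(key.lower())
    if column is None:
        raise ValueError("unknown sort code: %r" % (code,))
    # per the help text, an uppercase letter means oldest-first
    return column, key.isupper()


if __name__ == "__main__":
    print(parse_rss_sort("m"))
    print(parse_rss_sort("U"))
```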

@@ -1452,6 +1471,7 @@ def add_ui(ap, retry):

|

||||

ap2.add_argument("--pb-url", metavar="URL", type=u, default="https://github.com/9001/copyparty", help="powered-by link; disable with \033[33m-np\033[0m")

|

||||

ap2.add_argument("--ver", action="store_true", help="show version on the control panel (incompatible with \033[33m-nb\033[0m)")

|

||||

ap2.add_argument("--k304", metavar="NUM", type=int, default=0, help="configure the option to enable/disable k304 on the controlpanel (workaround for buggy reverse-proxies); [\033[32m0\033[0m] = hidden and default-off, [\033[32m1\033[0m] = visible and default-off, [\033[32m2\033[0m] = visible and default-on")

|

||||

ap2.add_argument("--no304", metavar="NUM", type=int, default=0, help="configure the option to enable/disable no304 on the controlpanel (workaround for buggy caching in browsers); [\033[32m0\033[0m] = hidden and default-off, [\033[32m1\033[0m] = visible and default-off, [\033[32m2\033[0m] = visible and default-on")

|

||||

ap2.add_argument("--md-sbf", metavar="FLAGS", type=u, default="downloads forms popups scripts top-navigation-by-user-activation", help="list of capabilities to ALLOW for README.md docs (volflag=md_sbf); see https://developer.mozilla.org/en-US/docs/Web/HTML/Element/iframe#attr-sandbox")

|

||||

ap2.add_argument("--lg-sbf", metavar="FLAGS", type=u, default="downloads forms popups scripts top-navigation-by-user-activation", help="list of capabilities to ALLOW for prologue/epilogue docs (volflag=lg_sbf)")

|

||||

ap2.add_argument("--no-sb-md", action="store_true", help="don't sandbox README/PREADME.md documents (volflags: no_sb_md | sb_md)")

|

||||

@@ -1526,6 +1546,7 @@ def run_argparse(

|

||||

add_db_metadata(ap)

|

||||

add_thumbnail(ap)

|

||||

add_transcoding(ap)

|

||||

add_rss(ap)

|

||||

add_ftp(ap)

|

||||

add_webdav(ap)

|

||||

add_tftp(ap)

|

||||

@@ -1748,6 +1769,9 @@ def main(argv: Optional[list[str]] = None) -> None:

|

||||

if al.ihead:

|

||||

al.ihead = [x.lower() for x in al.ihead]

|

||||

|

||||

if al.ohead:

|

||||

al.ohead = [x.lower() for x in al.ohead]

|

||||

|

||||

if HAVE_SSL:

|

||||

if al.ssl_ver:

|

||||

configure_ssl_ver(al)

|

||||

|

||||

@@ -1,8 +1,8 @@
 # coding: utf-8
 
-VERSION = (1, 15, 7)
-CODENAME = "fill the drives"
-BUILD_DT = (2024, 10, 14)
+VERSION = (1, 16, 0)
+CODENAME = "COPYparty"
+BUILD_DT = (2024, 11, 10)
 
 S_VERSION = ".".join(map(str, VERSION))
 S_BUILD_DT = "{0:04d}-{1:02d}-{2:02d}".format(*BUILD_DT)

|

||||

|

||||

@@ -66,6 +66,7 @@ if PY2:

|

||||

LEELOO_DALLAS = "leeloo_dallas"

|

||||

|

||||

SEE_LOG = "see log for details"

|

||||

SEESLOG = " (see serverlog for details)"

|

||||

SSEELOG = " ({})".format(SEE_LOG)

|

||||

BAD_CFG = "invalid config; {}".format(SEE_LOG)

|

||||

SBADCFG = " ({})".format(BAD_CFG)

|

||||

@@ -164,8 +165,11 @@ class Lim(object):
         self.chk_rem(rem)
         if sz != -1:
             self.chk_sz(sz)
-            self.chk_vsz(broker, ptop, sz, volgetter)
-            self.chk_df(abspath, sz) # side effects; keep last-ish
+        else:
+            sz = 0
+
+        self.chk_vsz(broker, ptop, sz, volgetter)
+        self.chk_df(abspath, sz) # side effects; keep last-ish
 
         ap2, vp2 = self.rot(abspath)
         if abspath == ap2:

|

||||

@@ -205,7 +209,15 @@ class Lim(object):
 
         if self.dft < time.time():
             self.dft = int(time.time()) + 300
-            self.dfv = get_df(abspath)[0] or 0
+
+            df, du, err = get_df(abspath, True)
+            if err:
+                t = "failed to read disk space usage for [%s]: %s"
+                self.log(t % (abspath, err), 3)
+                self.dfv = 0xAAAAAAAAA # 42.6 GiB
+            else:
+                self.dfv = df or 0
+
             for j in list(self.reg.values()) if self.reg else []:
                 self.dfv -= int(j["size"] / (len(j["hash"]) or 999) * len(j["need"]))
 

||||
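The hunk above caches the free-space figure and then subtracts the part of each in-progress upload that has not arrived yet. A minimal sketch of that arithmetic, assuming each job is a dict with `size` (bytes), `hash` (all chunk hashes) and `need` (chunks still missing), as the field names in the hunk suggest; `estimate_free` and `FALLBACK_DF` are hypothetical names:

```python
FALLBACK_DF = 0xAAAAAAAAA  # value used when the disk query fails, about 42.67 GiB


def estimate_free(df_bytes, jobs):
    """Subtract the not-yet-received share of each unfinished upload from free space."""
    free = df_bytes or 0
    for job in jobs:
        chunks_total = len(job["hash"]) or 999  # avoid dividing by zero
        free -= int(job["size"] / chunks_total * len(job["need"]))
    return free


if __name__ == "__main__":
    half_done = {"size": 1000, "hash": ["a", "b", "c", "d"], "need": ["c", "d"]}
    print(estimate_free(2000, [half_done]))
    print(round(FALLBACK_DF / 2**30, 2), "GiB fallback")
```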

@@ -540,15 +552,14 @@ class VFS(object):

|

||||

return self._get_dbv(vrem)

|

||||

|

||||

shv, srem = src

|

||||

return shv, vjoin(srem, vrem)

|

||||

return shv._get_dbv(vjoin(srem, vrem))

|

||||

|

||||

def _get_dbv(self, vrem: str) -> tuple["VFS", str]:

|

||||

dbv = self.dbv

|

||||

if not dbv:

|

||||

return self, vrem

|

||||

|

||||

tv = [self.vpath[len(dbv.vpath) :].lstrip("/"), vrem]

|

||||

vrem = "/".join([x for x in tv if x])

|

||||

vrem = vjoin(self.vpath[len(dbv.vpath) :].lstrip("/"), vrem)

|

||||

return dbv, vrem

|

||||

|

||||

def canonical(self, rem: str, resolve: bool = True) -> str:

|

||||

@@ -580,10 +591,11 @@ class VFS(object):

|

||||

scandir: bool,

|

||||

permsets: list[list[bool]],

|

||||

lstat: bool = False,

|

||||

throw: bool = False,

|

||||

) -> tuple[str, list[tuple[str, os.stat_result]], dict[str, "VFS"]]:

|

||||

"""replaces _ls for certain shares (single-file, or file selection)"""

|

||||

vn, rem = self.shr_src # type: ignore

|

||||

abspath, real, _ = vn.ls(rem, "\n", scandir, permsets, lstat)

|

||||

abspath, real, _ = vn.ls(rem, "\n", scandir, permsets, lstat, throw)

|

||||

real = [x for x in real if os.path.basename(x[0]) in self.shr_files]

|

||||

return abspath, real, {}

|

||||

|

||||

@@ -594,11 +606,12 @@ class VFS(object):

|

||||

scandir: bool,

|

||||

permsets: list[list[bool]],

|

||||

lstat: bool = False,

|

||||

throw: bool = False,

|

||||

) -> tuple[str, list[tuple[str, os.stat_result]], dict[str, "VFS"]]:

|

||||

"""return user-readable [fsdir,real,virt] items at vpath"""

|

||||

virt_vis = {} # nodes readable by user

|

||||

abspath = self.canonical(rem)

|

||||

real = list(statdir(self.log, scandir, lstat, abspath))

|

||||

real = list(statdir(self.log, scandir, lstat, abspath, throw))

|

||||

real.sort()

|

||||

if not rem:

|

||||

# no vfs nodes in the list of real inodes

|

||||

@@ -660,6 +673,10 @@ class VFS(object):

|

||||

"""

|

||||

recursively yields from ./rem;

|

||||

rel is a unix-style user-defined vpath (not vfs-related)

|

||||

|

||||

NOTE: don't invoke this function from a dbv; subvols are only

|

||||

descended into if rem is blank due to the _ls `if not rem:`

|

||||

which intention is to prevent unintended access to subvols

|

||||

"""

|

||||

|

||||

fsroot, vfs_ls, vfs_virt = self.ls(rem, uname, scandir, permsets, lstat=lstat)

|

||||

@@ -900,7 +917,7 @@ class AuthSrv(object):

|

||||

self._reload()

|

||||

return True

|

||||

|

||||

broker.ask("_reload_blocking", False).get()

|

||||

broker.ask("reload", False, True).get()

|

||||

return True

|

||||

|

||||

def _map_volume_idp(

|

||||

@@ -1370,7 +1387,7 @@ class AuthSrv(object):

|

||||

flags[name] = True

|

||||

return

|

||||

|

||||

zs = "mtp on403 on404 xbu xau xiu xbr xar xbd xad xm xban"

|

||||

zs = "mtp on403 on404 xbu xau xiu xbc xac xbr xar xbd xad xm xban"

|

||||

if name not in zs.split():

|

||||

if value is True:

|

||||

t = "└─add volflag [{}] = {} ({})"

|

||||

@@ -1925,7 +1942,7 @@ class AuthSrv(object):

|

||||

vol.flags[k] = odfusion(getattr(self.args, k), vol.flags[k])

|

||||

|

||||

# append additive args from argv to volflags

|

||||

hooks = "xbu xau xiu xbr xar xbd xad xm xban".split()

|

||||

hooks = "xbu xau xiu xbc xac xbr xar xbd xad xm xban".split()

|

||||

for name in "mtp on404 on403".split() + hooks:

|

||||

self._read_volflag(vol.flags, name, getattr(self.args, name), True)

|

||||

|

||||

@@ -2376,7 +2393,7 @@ class AuthSrv(object):

|

||||

self._reload()

|

||||

return True, "new password OK"

|

||||

|

||||

broker.ask("_reload_blocking", False, False).get()

|

||||

broker.ask("reload", False, False).get()

|

||||

return True, "new password OK"

|

||||

|

||||

def setup_chpw(self, acct: dict[str, str]) -> None:

|

||||

@@ -2628,7 +2645,7 @@ class AuthSrv(object):

|

||||

]

|

||||

|

||||

csv = set("i p th_covers zm_on zm_off zs_on zs_off".split())

|

||||

zs = "c ihead mtm mtp on403 on404 xad xar xau xiu xban xbd xbr xbu xm"

|

||||

zs = "c ihead ohead mtm mtp on403 on404 xac xad xar xau xiu xban xbc xbd xbr xbu xm"

|

||||

lst = set(zs.split())

|

||||

askip = set("a v c vc cgen exp_lg exp_md theme".split())

|

||||

fskip = set("exp_lg exp_md mv_re_r mv_re_t rm_re_r rm_re_t".split())

|

||||

|

||||

@@ -43,6 +43,9 @@ class BrokerMp(object):

|

||||

self.procs = []

|

||||

self.mutex = threading.Lock()

|

||||

|

||||

self.retpend: dict[int, Any] = {}

|

||||

self.retpend_mutex = threading.Lock()

|

||||

|

||||

self.num_workers = self.args.j or CORES

|

||||

self.log("broker", "booting {} subprocesses".format(self.num_workers))

|

||||

for n in range(1, self.num_workers + 1):

|

||||

@@ -54,6 +57,8 @@ class BrokerMp(object):

|

||||

self.procs.append(proc)

|

||||

proc.start()

|

||||

|

||||

Daemon(self.periodic, "mp-periodic")

|

||||

|

||||

def shutdown(self) -> None:

|

||||

self.log("broker", "shutting down")

|

||||

for n, proc in enumerate(self.procs):

|

||||

@@ -90,8 +95,10 @@ class BrokerMp(object):

|

||||

self.log(*args)

|

||||

|

||||

elif dest == "retq":

|

||||

# response from previous ipc call

|

||||

raise Exception("invalid broker_mp usage")

|

||||

with self.retpend_mutex:

|

||||

retq = self.retpend.pop(retq_id)

|

||||

|

||||

retq.put(args[0])

|

||||

|

||||

else:

|

||||

# new ipc invoking managed service in hub

|

||||

@@ -109,7 +116,6 @@ class BrokerMp(object):

|

||||

proc.q_pend.put((retq_id, "retq", rv))

|

||||

|

||||

def ask(self, dest: str, *args: Any) -> Union[ExceptionalQueue, NotExQueue]:

|

||||

|

||||

# new non-ipc invoking managed service in hub

|

||||

obj = self.hub

|

||||

for node in dest.split("."):

|

||||

@@ -121,17 +127,30 @@ class BrokerMp(object):

|

||||

retq.put(rv)

|

||||

return retq

|

||||

|

||||

def wask(self, dest: str, *args: Any) -> list[Union[ExceptionalQueue, NotExQueue]]:

|

||||

# call from hub to workers

|

||||

ret = []

|

||||

for p in self.procs:

|

||||

retq = ExceptionalQueue(1)

|

||||

retq_id = id(retq)

|

||||

with self.retpend_mutex:

|

||||

self.retpend[retq_id] = retq

|

||||

|

||||

p.q_pend.put((retq_id, dest, list(args)))

|

||||

ret.append(retq)

|

||||

return ret

|

||||

|

||||

def say(self, dest: str, *args: Any) -> None:

|

||||

"""

|

||||

send message to non-hub component in other process,

|

||||

returns a Queue object which eventually contains the response if want_retval

|

||||

(not-impl here since nothing uses it yet)

|

||||

"""

|

||||

if dest == "listen":

|

||||

if dest == "httpsrv.listen":

|

||||

for p in self.procs:

|

||||

p.q_pend.put((0, dest, [args[0], len(self.procs)]))

|

||||

|

||||

elif dest == "set_netdevs":

|

||||

elif dest == "httpsrv.set_netdevs":

|

||||

for p in self.procs:

|

||||

p.q_pend.put((0, dest, list(args)))

|

||||

|

||||

@@ -140,3 +159,19 @@ class BrokerMp(object):

|

||||

|

||||

else:

|

||||

raise Exception("what is " + str(dest))

|

||||

|

||||

def periodic(self) -> None:

|

||||

while True:

|

||||

time.sleep(1)

|

||||

|

||||

tdli = {}

|

||||

tdls = {}

|

||||

qs = self.wask("httpsrv.read_dls")

|

||||

for q in qs:

|

||||

qr = q.get()

|

||||

dli, dls = qr

|

||||

tdli.update(dli)

|

||||

tdls.update(dls)

|

||||

tdl = (tdli, tdls)

|

||||

for p in self.procs:

|

||||

p.q_pend.put((0, "httpsrv.write_dls", tdl))

|

||||

|

||||
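`wask` and the `retq` branch work as a pair: each request registers a one-shot queue under `id(retq)`, and the reply is later routed back by that id. The following is a simplified, single-process sketch of that bookkeeping using only the standard library; `ReplyRegistry` and `demo` are hypothetical names, and a thread stands in for the worker process:

```python
import queue
import threading


class ReplyRegistry:
    """Track one-shot reply queues by id until the matching response arrives."""

    def __init__(self):
        self.pending = {}
        self.mutex = threading.Lock()

    def register(self):
        retq = queue.Queue(1)
        retq_id = id(retq)  # unique while the queue is referenced by self.pending
        with self.mutex:
            self.pending[retq_id] = retq
        return retq_id, retq

    def resolve(self, retq_id, value):
        with self.mutex:
            retq = self.pending.pop(retq_id)
        retq.put(value)


def demo():
    reg = ReplyRegistry()
    retq_id, retq = reg.register()
    # a thread stands in for the other process answering the request
    threading.Thread(target=reg.resolve, args=(retq_id, "pong")).start()
    return retq.get(timeout=5)


if __name__ == "__main__":
    print(demo())
```

Popping the entry inside the lock keeps the table from growing, and putting the value outside the lock avoids holding the mutex while a consumer wakes up.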

@@ -82,37 +82,38 @@ class MpWorker(BrokerCli):

|

||||

while True:

|

||||

retq_id, dest, args = self.q_pend.get()

|

||||

|

||||

# self.logw("work: [{}]".format(d[0]))

|

||||

if dest == "retq":

|

||||

# response from previous ipc call

|

||||

with self.retpend_mutex:

|

||||

retq = self.retpend.pop(retq_id)

|

||||

|

||||

retq.put(args)

|

||||

continue

|

||||

|

||||

if dest == "shutdown":

|

||||

self.httpsrv.shutdown()

|

||||

self.logw("ok bye")

|

||||

sys.exit(0)

|

||||

return

|

||||

|

||||

elif dest == "reload":

|

||||

if dest == "reload":

|

||||

self.logw("mpw.asrv reloading")

|

||||

self.asrv.reload()

|

||||

self.logw("mpw.asrv reloaded")

|

||||

continue

|

||||

|

||||

elif dest == "reload_sessions":

|

||||

if dest == "reload_sessions":

|

||||

with self.asrv.mutex:

|

||||

self.asrv.load_sessions()

|

||||

continue

|

||||

|

||||

elif dest == "listen":

|

||||

self.httpsrv.listen(args[0], args[1])

|

||||

obj = self

|

||||

for node in dest.split("."):

|

||||

obj = getattr(obj, node)

|

||||

|

||||

elif dest == "set_netdevs":

|

||||

self.httpsrv.set_netdevs(args[0])

|

||||

|

||||

elif dest == "retq":

|

||||

# response from previous ipc call

|

||||

with self.retpend_mutex:

|

||||

retq = self.retpend.pop(retq_id)

|

||||

|

||||

retq.put(args)

|

||||

|

||||

else:

|

||||

raise Exception("what is " + str(dest))

|

||||

rv = obj(*args) # type: ignore

|

||||

if retq_id:

|

||||

self.say("retq", rv, retq_id=retq_id)

|

||||

|

||||

def ask(self, dest: str, *args: Any) -> Union[ExceptionalQueue, NotExQueue]:

|

||||

retq = ExceptionalQueue(1)

|

||||
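The worker loop now resolves `dest` as a dotted attribute path (for example `httpsrv.set_netdevs`) instead of matching a fixed list of names. A minimal sketch of that lookup; `FakeHttpSrv` and `FakeWorker` are hypothetical stand-ins for the real objects:

```python
def resolve_dotted(root, dest):
    """Walk `dest` ("a.b.c") attribute by attribute, starting from `root`."""
    obj = root
    for node in dest.split("."):
        obj = getattr(obj, node)
    return obj


class FakeHttpSrv:
    def __init__(self):
        self.netdevs = None

    def set_netdevs(self, devs):
        self.netdevs = devs
        return len(devs)


class FakeWorker:
    def __init__(self):
        self.httpsrv = FakeHttpSrv()


if __name__ == "__main__":
    worker = FakeWorker()
    rv = resolve_dotted(worker, "httpsrv.set_netdevs")({"eth0": "10.0.0.2"})
    print(rv, worker.httpsrv.netdevs)
```

Because any reachable attribute can be called this way, the approach presumably relies on `dest` only ever coming from the hub process.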

@@ -123,5 +124,5 @@ class MpWorker(BrokerCli):

|

||||

self.q_yield.put((retq_id, dest, list(args)))

|

||||

return retq

|

||||

|

||||

def say(self, dest: str, *args: Any) -> None:

|

||||

self.q_yield.put((0, dest, list(args)))

|

||||

def say(self, dest: str, *args: Any, retq_id=0) -> None:

|

||||

self.q_yield.put((retq_id, dest, list(args)))

|

||||

|

||||

@@ -53,11 +53,11 @@ class BrokerThr(BrokerCli):

|

||||

return NotExQueue(obj(*args)) # type: ignore

|

||||

|

||||

def say(self, dest: str, *args: Any) -> None:

|

||||

if dest == "listen":

|

||||

if dest == "httpsrv.listen":

|

||||

self.httpsrv.listen(args[0], 1)

|

||||

return

|

||||

|

||||

if dest == "set_netdevs":

|

||||

if dest == "httpsrv.set_netdevs":

|

||||

self.httpsrv.set_netdevs(args[0])

|

||||

return

|

||||

|

||||

|

||||

@@ -46,6 +46,7 @@ def vf_bmap() -> dict[str, str]:

|

||||

"og_no_head",

|

||||

"og_s_title",

|

||||

"rand",

|

||||

"rss",

|

||||

"xdev",

|

||||

"xlink",

|

||||

"xvol",

|

||||

@@ -102,10 +103,12 @@ def vf_cmap() -> dict[str, str]:

|

||||

"mte",

|

||||

"mth",

|

||||

"mtp",

|

||||

"xac",

|

||||

"xad",

|

||||

"xar",

|

||||

"xau",

|

||||

"xban",

|

||||

"xbc",

|

||||

"xbd",

|

||||

"xbr",

|

||||

"xbu",

|

||||

@@ -211,6 +214,8 @@ flagcats = {

|

||||

"xbu=CMD": "execute CMD before a file upload starts",

|

||||

"xau=CMD": "execute CMD after a file upload finishes",

|

||||

"xiu=CMD": "execute CMD after all uploads finish and volume is idle",

|

||||

"xbc=CMD": "execute CMD before a file copy",

|

||||

"xac=CMD": "execute CMD after a file copy",

|

||||

"xbr=CMD": "execute CMD before a file rename/move",

|

||||

"xar=CMD": "execute CMD after a file rename/move",

|

||||

"xbd=CMD": "execute CMD before a file delete",

|

||||

|

||||

@@ -296,6 +296,7 @@ class FtpFs(AbstractedFS):

|

||||

self.uname,

|

||||

not self.args.no_scandir,

|

||||

[[True, False], [False, True]],

|

||||

throw=True,

|

||||

)

|

||||

vfs_ls = [x[0] for x in vfs_ls1]

|

||||

vfs_ls.extend(vfs_virt.keys())

|

||||

|

||||

@@ -2,7 +2,6 @@

|

||||

from __future__ import print_function, unicode_literals

|

||||

|

||||

import argparse # typechk

|

||||

import calendar

|

||||

import copy

|

||||

import errno

|

||||

import gzip

|

||||

@@ -19,7 +18,6 @@ import threading # typechk

|

||||

import time

|

||||

import uuid

|

||||

from datetime import datetime

|

||||

from email.utils import parsedate

|

||||

from operator import itemgetter

|

||||

|

||||

import jinja2 # typechk

|

||||

@@ -107,6 +105,7 @@ from .util import (

|

||||

unquotep,

|

||||

vjoin,

|

||||

vol_san,

|

||||

vroots,

|

||||

vsplit,

|

||||

wrename,

|

||||

wunlink,

|

||||

@@ -127,10 +126,17 @@ _ = (argparse, threading)

|

||||

|

||||

NO_CACHE = {"Cache-Control": "no-cache"}

|

||||

|

||||

ALL_COOKIES = "k304 no304 js idxh dots cppwd cppws".split()

|

||||

|

||||

H_CONN_KEEPALIVE = "Connection: Keep-Alive"

|

||||

H_CONN_CLOSE = "Connection: Close"

|

||||

|

||||

LOGUES = [[0, ".prologue.html"], [1, ".epilogue.html"]]

|

||||

|

||||

READMES = [[0, ["preadme.md", "PREADME.md"]], [1, ["readme.md", "README.md"]]]

|

||||

|

||||

RSS_SORT = {"m": "mt", "u": "at", "n": "fn", "s": "sz"}

|

||||

|

||||

|

||||

class HttpCli(object):

|

||||

"""

|

||||

@@ -180,6 +186,7 @@ class HttpCli(object):

|

||||

self.rem = " "

|

||||

self.vpath = " "

|

||||

self.vpaths = " "

|

||||

self.dl_id = ""

|

||||

self.gctx = " " # additional context for garda

|

||||

self.trailing_slash = True

|

||||

self.uname = " "

|

||||

@@ -631,7 +638,7 @@ class HttpCli(object):

|

||||

avn.can_access("", self.uname) if avn else [False] * 8

|

||||

)

|

||||

self.avn = avn

|

||||

self.vn = vn

|

||||

self.vn = vn # note: do not dbv due to walk/zipgen

|

||||

self.rem = rem

|

||||

|

||||

self.s.settimeout(self.args.s_tbody or None)

|

||||

@@ -720,6 +727,11 @@ class HttpCli(object):

|

||||

except Pebkac:

|

||||

return False

|

||||

|

||||

finally:

|

||||

if self.dl_id:

|

||||

self.conn.hsrv.dli.pop(self.dl_id, None)

|

||||

self.conn.hsrv.dls.pop(self.dl_id, None)

|

||||

|

||||

def dip(self) -> str:

|

||||

if self.args.plain_ip:

|

||||

return self.ip.replace(":", ".")

|

||||

@@ -790,11 +802,11 @@ class HttpCli(object):
 
     def k304(self) -> bool:
         k304 = self.cookies.get("k304")
-        return (
-            k304 == "y"
-            or (self.args.k304 == 2 and k304 != "n")
-            or ("; Trident/" in self.ua and not k304)
-        )
+        return k304 == "y" or (self.args.k304 == 2 and k304 != "n")
+
+    def no304(self) -> bool:
+        no304 = self.cookies.get("no304")
+        return no304 == "y" or (self.args.no304 == 2 and no304 != "n")
 
     def _build_html_head(self, maybe_html: Any, kv: dict[str, Any]) -> None:
         html = str(maybe_html)

|

||||
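Both `k304()` and `no304()` follow the same rule: an explicit cookie wins, and otherwise the feature is on only when the server option is `2` (visible and default-on). A standalone sketch of that decision; `cookie_or_default` is a hypothetical name:

```python
def cookie_or_default(cookie_value, server_level):
    """Cookie "y"/"n" wins; without a cookie, only server_level == 2 enables the option."""
    return cookie_value == "y" or (server_level == 2 and cookie_value != "n")


if __name__ == "__main__":
    for cookie in (None, "y", "n"):
        for level in (0, 1, 2):
            print(cookie, level, cookie_or_default(cookie, level))
```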

@@ -829,25 +841,28 @@ class HttpCli(object):

|

||||

) -> None:

|

||||

response = ["%s %s %s" % (self.http_ver, status, HTTPCODE[status])]

|

||||

|

||||

if length is not None:

|

||||

response.append("Content-Length: " + unicode(length))

|

||||

|

||||

if status == 304 and self.k304():

|

||||

self.keepalive = False

|

||||

|

||||

# close if unknown length, otherwise take client's preference

|

||||

response.append("Connection: " + ("Keep-Alive" if self.keepalive else "Close"))

|

||||

response.append("Date: " + formatdate())

|

||||

|

||||

# headers{} overrides anything set previously

|

||||

if headers:

|

||||

self.out_headers.update(headers)

|

||||

|

||||

# default to utf8 html if no content-type is set

|

||||

if not mime:

|

||||

mime = self.out_headers.get("Content-Type") or "text/html; charset=utf-8"

|

||||

if status == 304:

|

||||

self.out_headers.pop("Content-Length", None)

|

||||

self.out_headers.pop("Content-Type", None)

|

||||

self.out_headerlist.clear()

|

||||

if self.k304():

|

||||

self.keepalive = False

|

||||

else:

|

||||

if length is not None:

|

||||

response.append("Content-Length: " + unicode(length))

|

||||

|

||||

self.out_headers["Content-Type"] = mime

|

||||

if mime:

|

||||

self.out_headers["Content-Type"] = mime

|

||||

elif "Content-Type" not in self.out_headers:

|

||||

self.out_headers["Content-Type"] = "text/html; charset=utf-8"

|

||||

|

||||

# close if unknown length, otherwise take client's preference

|

||||

response.append(H_CONN_KEEPALIVE if self.keepalive else H_CONN_CLOSE)

|

||||

response.append("Date: " + formatdate())

|

||||

|

||||

for k, zs in list(self.out_headers.items()) + self.out_headerlist:

|

||||

response.append("%s: %s" % (k, zs))

|

||||

@@ -861,6 +876,19 @@ class HttpCli(object):

|

||||

self.cbonk(self.conn.hsrv.gmal, zs, "cc_hdr", "Cc in out-hdr")

|

||||

raise Pebkac(999)

|

||||

|

||||

if self.args.ohead and self.do_log:

|

||||

keys = self.args.ohead

|

||||

if "*" in keys:

|

||||

lines = response[1:]

|

||||

else:

|

||||

lines = []

|

||||

for zs in response[1:]:

|

||||

if zs.split(":")[0].lower() in keys:

|

||||

lines.append(zs)

|

||||

for zs in lines:

|

||||

hk, hv = zs.split(": ")

|

||||

self.log("[O] {}: \033[33m[{}]".format(hk, hv), 5)

|

||||

|

||||

response.append("\r\n")

|

||||

try:

|

||||

self.s.sendall("\r\n".join(response).encode("utf-8"))

|

||||
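The `--ohead` block logs either every response header (`*`) or only those whose lowercased name was requested. A minimal sketch of that selection, assuming `keys` has already been lowercased as done for `al.ohead` in `main`; `select_headers` is a hypothetical helper, and it uses `str.partition` so that a value which itself contains `": "` stays in one piece:

```python
def select_headers(response_lines, keys):
    """Return (name, value) pairs from header lines whose name is listed in `keys`."""
    picked = []
    for line in response_lines:
        name, sep, value = line.partition(": ")
        if not sep:
            continue  # not a "Name: value" line
        if "*" in keys or name.lower() in keys:
            picked.append((name, value))
    return picked


if __name__ == "__main__":
    lines = [
        "Connection: Keep-Alive",
        "Date: Sun, 10 Nov 2024 12:00:00 GMT",
        "Content-Type: text/html; charset=utf-8",
    ]
    for name, value in select_headers(lines, ["date", "content-type"]):
        print("[O] %s: [%s]" % (name, value))
```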

@@ -940,13 +968,14 @@ class HttpCli(object):

|

||||

|

||||

lines = [

|

||||

"%s %s %s" % (self.http_ver or "HTTP/1.1", status, HTTPCODE[status]),

|

||||

"Connection: Close",

|

||||

H_CONN_CLOSE,

|

||||

]

|

||||

|

||||

if body:

|

||||

lines.append("Content-Length: " + unicode(len(body)))

|

||||

|

||||

self.s.sendall("\r\n".join(lines).encode("utf-8") + b"\r\n\r\n" + body)

|

||||

lines.append("\r\n")

|

||||

self.s.sendall("\r\n".join(lines).encode("utf-8") + body)

|

||||

|

||||

def urlq(self, add: dict[str, str], rm: list[str]) -> str:

|

||||

"""

|

||||

@@ -1173,6 +1202,9 @@ class HttpCli(object):

|

||||

if "move" in self.uparam:

|

||||

return self.handle_mv()

|

||||

|

||||

if "copy" in self.uparam:

|

||||

return self.handle_cp()

|

||||

|

||||

if not self.vpath and self.ouparam:

|

||||

if "reload" in self.uparam:

|

||||

return self.handle_reload()

|

||||

@@ -1180,12 +1212,6 @@ class HttpCli(object):

|

||||

if "stack" in self.uparam:

|

||||

return self.tx_stack()

|

||||

|

||||

if "ups" in self.uparam:

|

||||

return self.tx_ups()

|

||||

|

||||

if "k304" in self.uparam:

|

||||

return self.set_k304()

|

||||

|

||||

if "setck" in self.uparam:

|

||||

return self.setck()

|

||||

|

||||

@@ -1198,11 +1224,156 @@ class HttpCli(object):

|

||||

if "shares" in self.uparam:

|

||||

return self.tx_shares()

|

||||

|

||||

if "dls" in self.uparam:

|

||||

return self.tx_dls()

|

||||

|

||||

if "h" in self.uparam:

|

||||

return self.tx_mounts()

|

||||

|

||||