Compare commits

241 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

4accef00fb | ||

|

|

d779525500 | ||

|

|

65a7706f77 | ||

|

|

5e12abbb9b | ||

|

|

e0fe2b97be | ||

|

|

bd33863f9f | ||

|

|

a011139894 | ||

|

|

36866f1d36 | ||

|

|

407531bcb1 | ||

|

|

3adbb2ff41 | ||

|

|

499ae1c7a1 | ||

|

|

438ea6ccb0 | ||

|

|

598a29a733 | ||

|

|

6d102fc826 | ||

|

|

fca07fbb62 | ||

|

|

cdedcc24b8 | ||

|

|

60d5f27140 | ||

|

|

cb413bae49 | ||

|

|

e9f78ea70c | ||

|

|

6858cb066f | ||

|

|

4be0d426f4 | ||

|

|

7d7d5d6c3c | ||

|

|

0422387e90 | ||

|

|

2ed5fd9ac4 | ||

|

|

2beb2acc24 | ||

|

|

56ce591908 | ||

|

|

b190e676b4 | ||

|

|

19520b2ec9 | ||

|

|

eeb96ae8b5 | ||

|

|

cddedd37d5 | ||

|

|

4d6626b099 | ||

|

|

7a55833bb2 | ||

|

|

7e4702cf09 | ||

|

|

685f08697a | ||

|

|

a255db706d | ||

|

|

9d76902710 | ||

|

|

62ee7f6980 | ||

|

|

2f6707825a | ||

|

|

7dda77dcb4 | ||

|

|

ddec22d04c | ||

|

|

32e90859f4 | ||

|

|

8b8970c787 | ||

|

|

03d35ba799 | ||

|

|

c035d7d88a | ||

|

|

46f9e9efff | ||

|

|

4fa8d7ed79 | ||

|

|

cd71b505a9 | ||

|

|

c7db08ed3e | ||

|

|

3582a1004c | ||

|

|

22cbd2dbb5 | ||

|

|

c87af9e85c | ||

|

|

6c202effa4 | ||

|

|

632f52af22 | ||

|

|

46e59529a4 | ||

|

|

bdf060236a | ||

|

|

d9d2a09282 | ||

|

|

b020fd4ad2 | ||

|

|

4ef3526354 | ||

|

|

20ddeb6e1b | ||

|

|

d27f110498 | ||

|

|

910797ccb6 | ||

|

|

7de9d15aef | ||

|

|

6a9ffe7e06 | ||

|

|

12dcea4f70 | ||

|

|

b3b39bd8f1 | ||

|

|

c7caecf77c | ||

|

|

1fe30363c7 | ||

|

|

54a7256c8d | ||

|

|

8e8e4ff132 | ||

|

|

1dace72092 | ||

|

|

3a5c1d9faf | ||

|

|

f38c754301 | ||

|

|

fff38f484d | ||

|

|

95390b655f | ||

|

|

5967c421ca | ||

|

|

b8b5214f44 | ||

|

|

cdd3b67a5c | ||

|

|

28c9de3f6a | ||

|

|

f3b9bfc114 | ||

|

|

c9eba39edd | ||

|

|

40a1c7116e | ||

|

|

c03af9cfcc | ||

|

|

c4cbc32cc5 | ||

|

|

1231ce199e | ||

|

|

e0cac6fd99 | ||

|

|

d9db1534b1 | ||

|

|

6a0aaaf069 | ||

|

|

4c04798aa5 | ||

|

|

3f84b0a015 | ||

|

|

917380ddbb | ||

|

|

d9ae067e52 | ||

|

|

b2e8bf6e89 | ||

|

|

170cbe98c5 | ||

|

|

c94f662095 | ||

|

|

0987dcfb1c | ||

|

|

6920c01d4a | ||

|

|

cc0cc8cdf0 | ||

|

|

fb13969798 | ||

|

|

278258ee9f | ||

|

|

9e542cf86b | ||

|

|

244e952f79 | ||

|

|

aa2a8fa223 | ||

|

|

467acb47bf | ||

|

|

0c0d6b2bfc | ||

|

|

ce0e5be406 | ||

|

|

65ce4c90fa | ||

|

|

9897a08d09 | ||

|

|

f5753ba720 | ||

|

|

fcf32a935b | ||

|

|

ec50788987 | ||

|

|

ac0a2da3b5 | ||

|

|

9f84dc42fe | ||

|

|

21f9304235 | ||

|

|

5cedd22bbd | ||

|

|

c0dacbc4dd | ||

|

|

dd6e9ea70c | ||

|

|

87598dcd7f | ||

|

|

3bb7b677f8 | ||

|

|

988a7223f4 | ||

|

|

7f044372fa | ||

|

|

552897abbc | ||

|

|

946a8c5baa | ||

|

|

888b31aa92 | ||

|

|

e2dec2510f | ||

|

|

da5ad2ab9f | ||

|

|

eaa4b04a22 | ||

|

|

3051b13108 | ||

|

|

4c4e48bab7 | ||

|

|

01a3eb29cb | ||

|

|

73f7249c5f | ||

|

|

18c6559199 | ||

|

|

e66ece993f | ||

|

|

0686860624 | ||

|

|

24ce46b380 | ||

|

|

a49bf81ff2 | ||

|

|

64501fd7f1 | ||

|

|

db3c0b0907 | ||

|

|

edda117a7a | ||

|

|

cdface0dd5 | ||

|

|

be6afe2d3a | ||

|

|

9163780000 | ||

|

|

d7aa7dfe64 | ||

|

|

f1decb531d | ||

|

|

99399c698b | ||

|

|

1f5f42f216 | ||

|

|

9082c4702f | ||

|

|

6cedcfbf77 | ||

|

|

8a631f045e | ||

|

|

a6a2ee5b6b | ||

|

|

016708276c | ||

|

|

4cfdc4c513 | ||

|

|

0f257c9308 | ||

|

|

c8104b6e78 | ||

|

|

1a1d731043 | ||

|

|

c5a000d2ae | ||

|

|

94d1924fa9 | ||

|

|

6c1cf68bca | ||

|

|

395af051bd | ||

|

|

42fd66675e | ||

|

|

21a3f3699b | ||

|

|

d168b2acac | ||

|

|

2ce8233921 | ||

|

|

697a4fa8a4 | ||

|

|

2f83c6c7d1 | ||

|

|

127f414e9c | ||

|

|

33c4ccffab | ||

|

|

bafe7f5a09 | ||

|

|

baf41112d1 | ||

|

|

a90dde94e1 | ||

|

|

7dfbfc7227 | ||

|

|

b10843d051 | ||

|

|

520ac8f4dc | ||

|

|

537a6e50e9 | ||

|

|

2d0cbdf1a8 | ||

|

|

5afb562aa3 | ||

|

|

db069c3d4a | ||

|

|

fae40c7e2f | ||

|

|

0c43b592dc | ||

|

|

2ab8924e2d | ||

|

|

0e31cfa784 | ||

|

|

8f7ffcf350 | ||

|

|

9c8507a0fd | ||

|

|

e9b2cab088 | ||

|

|

d3ccacccb1 | ||

|

|

df386c8fbc | ||

|

|

4d15dd6e17 | ||

|

|

56a0499636 | ||

|

|

10fc4768e8 | ||

|

|

2b63d7d10d | ||

|

|

1f177528c1 | ||

|

|

fc3bbb70a3 | ||

|

|

ce3cab0295 | ||

|

|

c784e5285e | ||

|

|

2bf9055cae | ||

|

|

8aba5aed4f | ||

|

|

0ce7cf5e10 | ||

|

|

96edcbccd7 | ||

|

|

4603afb6de | ||

|

|

56317b00af | ||

|

|

cacec9c1f3 | ||

|

|

44ee07f0b2 | ||

|

|

6a8d5e1731 | ||

|

|

d9962f65b3 | ||

|

|

119e88d87b | ||

|

|

71d9e010d9 | ||

|

|

5718caa957 | ||

|

|

efd8a32ed6 | ||

|

|

b22d700e16 | ||

|

|

ccdacea0c4 | ||

|

|

4bdcbc1cb5 | ||

|

|

833c6cf2ec | ||

|

|

dd6dbdd90a | ||

|

|

63013cc565 | ||

|

|

912402364a | ||

|

|

159f51b12b | ||

|

|

7678a91b0e | ||

|

|

b13899c63d | ||

|

|

3a0d882c5e | ||

|

|

cb81f0ad6d | ||

|

|

518bacf628 | ||

|

|

ca63b03e55 | ||

|

|

cecef88d6b | ||

|

|

7ffd805a03 | ||

|

|

a7e2a0c981 | ||

|

|

2a570bb4ca | ||

|

|

5ca8f0706d | ||

|

|

a9b4436cdc | ||

|

|

5f91999512 | ||

|

|

9f000beeaf | ||

|

|

ff0a71f212 | ||

|

|

22dfc6ec24 | ||

|

|

48147c079e | ||

|

|

d715479ef6 | ||

|

|

fc8298c468 | ||

|

|

e94ca5dc91 | ||

|

|

114b71b751 | ||

|

|

b2770a2087 | ||

|

|

cba1878bb2 | ||

|

|

a2e037d6af | ||

|

|

65a2b6a223 | ||

|

|

9ed799e803 |

32

.github/ISSUE_TEMPLATE/bug_report.md

vendored


@@ -11,30 +11,38 @@ NOTE:

|

||||

all of the below are optional, consider them as inspiration, delete and rewrite at will, thx md

|

||||

|

||||

|

||||

**Describe the bug**

|

||||

### Describe the bug

|

||||

a description of what the bug is

|

||||

|

||||

**To Reproduce**

|

||||

### To Reproduce

|

||||

List of steps to reproduce the issue, or, if it's hard to reproduce, then at least a detailed explanation of what you did to run into it

|

||||

|

||||

**Expected behavior**

|

||||

### Expected behavior

|

||||

a description of what you expected to happen

|

||||

|

||||

**Screenshots**

|

||||

### Screenshots

|

||||

if applicable, add screenshots to help explain your problem, such as the kickass crashpage :^)

|

||||

|

||||

**Server details**

|

||||

if the issue is possibly on the server-side, then mention some of the following:

|

||||

* server OS / version:

|

||||

* python version:

|

||||

* copyparty arguments:

|

||||

* filesystem (`lsblk -f` on linux):

|

||||

### Server details (if you are using docker/podman)

|

||||

remove the ones that are not relevant:

|

||||

* **server OS / version:**

|

||||

* **how you're running copyparty:** (docker/podman/something-else)

|

||||

* **docker image:** (variant, version, and arch if you know)

|

||||

* **copyparty arguments and/or config-file:**

|

||||

|

||||

**Client details**

|

||||

### Server details (if you're NOT using docker/podman)

|

||||

remove the ones that are not relevant:

|

||||

* **server OS / version:**

|

||||

* **what copyparty did you grab:** (sfx/exe/pip/aur/...)

|

||||

* **how you're running it:** (in a terminal, as a systemd-service, ...)

|

||||

* run copyparty with `--version` and grab the last 3 lines (they start with `copyparty`, `CPython`, `sqlite`) and paste them below this line:

|

||||

* **copyparty arguments and/or config-file:**

|

||||

|

||||

### Client details

|

||||

if the issue is possibly on the client-side, then mention some of the following:

|

||||

* the device type and model:

|

||||

* OS version:

|

||||

* browser version:

|

||||

|

||||

**Additional context**

|

||||

### Additional context

|

||||

any other context about the problem here

|

||||

|

||||

@@ -28,6 +28,8 @@ aside from documentation and ideas, some other things that would be cool to have

|

||||

|

||||

* **translations** -- the copyparty web-UI has translations for english and norwegian at the top of [browser.js](https://github.com/9001/copyparty/blob/hovudstraum/copyparty/web/browser.js); if you'd like to add a translation for another language then that'd be welcome! and if that language has a grammar that doesn't fit into the way the strings are assembled, then we'll fix that as we go :>

|

||||

|

||||

* but please note that support for [RTL (Right-to-Left) languages](https://en.wikipedia.org/wiki/Right-to-left_script) is currently not planned, since the javascript is a bit too jank for that

|

||||

|

||||

* **UI ideas** -- at some point I was thinking of rewriting the UI in react/preact/something-not-vanilla-javascript, but I'll admit the comfiness of not having any build stage combined with raw performance has kinda convinced me otherwise :p but I'd be very open to ideas on how the UI could be improved, or be more intuitive.

|

||||

|

||||

* **docker improvements** -- I don't really know what I'm doing when it comes to containers, so I'm sure there's *huge* room for improvement here, mainly regarding how you're supposed to use the container with kubernetes / docker-compose / any of the other popular ways to do things. At some point I swear I'll start learning about docker so I can pick up clach04's [docker-compose draft](https://github.com/9001/copyparty/issues/38) and learn how that stuff ticks, unless someone beats me to it!

|

||||

|

||||

473

README.md


@@ -47,6 +47,8 @@ turn almost any device into a file server with resumable uploads/downloads using

|

||||

* [file manager](#file-manager) - cut/paste, rename, and delete files/folders (if you have permission)

|

||||

* [shares](#shares) - share a file or folder by creating a temporary link

|

||||

* [batch rename](#batch-rename) - select some files and press `F2` to bring up the rename UI

|

||||

* [rss feeds](#rss-feeds) - monitor a folder with your RSS reader

|

||||

* [recent uploads](#recent-uploads) - list all recent uploads

|

||||

* [media player](#media-player) - plays almost every audio format there is

|

||||

* [audio equalizer](#audio-equalizer) - and [dynamic range compressor](https://en.wikipedia.org/wiki/Dynamic_range_compression)

|

||||

* [fix unreliable playback on android](#fix-unreliable-playback-on-android) - due to phone / app settings

|

||||

@@ -78,6 +80,7 @@ turn almost any device into a file server with resumable uploads/downloads using

|

||||

* [metadata from audio files](#metadata-from-audio-files) - set `-e2t` to index tags on upload

|

||||

* [file parser plugins](#file-parser-plugins) - provide custom parsers to index additional tags

|

||||

* [event hooks](#event-hooks) - trigger a program on uploads, renames etc ([examples](./bin/hooks/))

|

||||

* [zeromq](#zeromq) - event-hooks can send zeromq messages

|

||||

* [upload events](#upload-events) - the older, more powerful approach ([examples](./bin/mtag/))

|

||||

* [handlers](#handlers) - redefine behavior with plugins ([examples](./bin/handlers/))

|

||||

* [ip auth](#ip-auth) - autologin based on IP range (CIDR)

|

||||

@@ -90,9 +93,12 @@ turn almost any device into a file server with resumable uploads/downloads using

|

||||

* [listen on port 80 and 443](#listen-on-port-80-and-443) - become a *real* webserver

|

||||

* [reverse-proxy](#reverse-proxy) - running copyparty next to other websites

|

||||

* [real-ip](#real-ip) - teaching copyparty how to see client IPs

|

||||

* [reverse-proxy performance](#reverse-proxy-performance)

|

||||

* [permanent cloudflare tunnel](#permanent-cloudflare-tunnel) - if you have a domain and want to get your copyparty online real quick

|

||||

* [prometheus](#prometheus) - metrics/stats can be enabled

|

||||

* [other extremely specific features](#other-extremely-specific-features) - you'll never find a use for these

|

||||

* [custom mimetypes](#custom-mimetypes) - change the association of a file extension

|

||||

* [GDPR compliance](#GDPR-compliance) - imagine using copyparty professionally...

|

||||

* [feature chickenbits](#feature-chickenbits) - buggy feature? rip it out

|

||||

* [packages](#packages) - the party might be closer than you think

|

||||

* [arch package](#arch-package) - now [available on aur](https://aur.archlinux.org/packages/copyparty) maintained by [@icxes](https://github.com/icxes)

|

||||

@@ -138,7 +144,11 @@ just run **[copyparty-sfx.py](https://github.com/9001/copyparty/releases/latest/

|

||||

* or if you cannot install python, you can use [copyparty.exe](#copypartyexe) instead

|

||||

* or install [on arch](#arch-package) ╱ [on NixOS](#nixos-module) ╱ [through nix](#nix-package)

|

||||

* or if you are on android, [install copyparty in termux](#install-on-android)

|

||||

* or maybe you have a [synology nas / dsm](./docs/synology-dsm.md)

|

||||

* or if your computer is messed up and nothing else works, [try the pyz](#zipapp)

|

||||

* or if you don't trust copyparty yet and want to isolate it a little, then...

|

||||

* ...maybe [prisonparty](./bin/prisonparty.sh) to create a tiny [chroot](https://wiki.archlinux.org/title/Chroot) (very portable),

|

||||

* ...or [bubbleparty](./bin/bubbleparty.sh) to wrap it in [bubblewrap](https://github.com/containers/bubblewrap) (much better)

|

||||

* or if you prefer to [use docker](./scripts/docker/) 🐋 you can do that too

|

||||

* docker has all deps built-in, so skip this step:

|

||||

|

||||

@@ -151,8 +161,8 @@ enable thumbnails (images/audio/video), media indexing, and audio transcoding by

|

||||

* **MacOS:** `port install py-Pillow ffmpeg`

|

||||

* **MacOS** (alternative): `brew install pillow ffmpeg`

|

||||

* **Windows:** `python -m pip install --user -U Pillow`

|

||||

* install python and ffmpeg manually; do not use `winget` or `Microsoft Store` (it breaks $PATH)

|

||||

* copyparty.exe comes with `Pillow` and only needs `ffmpeg`

|

||||

* install [python](https://www.python.org/downloads/windows/) and [ffmpeg](#optional-dependencies) manually; do not use `winget` or `Microsoft Store` (it breaks $PATH)

|

||||

* copyparty.exe comes with `Pillow` and only needs [ffmpeg](#optional-dependencies) for mediatags/videothumbs

|

||||

* see [optional dependencies](#optional-dependencies) to enable even more features

|

||||

|

||||

running copyparty without arguments (for example doubleclicking it on Windows) will give everyone read/write access to the current folder; you may want [accounts and volumes](#accounts-and-volumes)

|

||||

@@ -175,6 +185,8 @@ first download [cloudflared](https://developers.cloudflare.com/cloudflare-one/co

|

||||

|

||||

as the tunnel starts, it will show a URL which you can share to let anyone browse your stash or upload files to you

|

||||

|

||||

but if you have a domain, then you probably want to skip the random autogenerated URL and instead make a [permanent cloudflare tunnel](#permanent-cloudflare-tunnel)

|

||||

|

||||

since people will be connecting through cloudflare, run copyparty with `--xff-hdr cf-connecting-ip` to detect client IPs correctly

|

||||

|

||||

|

||||

@@ -216,10 +228,11 @@ also see [comparison to similar software](./docs/versus.md)

|

||||

* ☑ [upnp / zeroconf / mdns / ssdp](#zeroconf)

|

||||

* ☑ [event hooks](#event-hooks) / script runner

|

||||

* ☑ [reverse-proxy support](https://github.com/9001/copyparty#reverse-proxy)

|

||||

* ☑ cross-platform (Windows, Linux, Macos, Android, FreeBSD, arm32/arm64, ppc64le, s390x, risc-v/riscv64)

|

||||

* upload

|

||||

* ☑ basic: plain multipart, ie6 support

|

||||

* ☑ [up2k](#uploading): js, resumable, multithreaded

|

||||

* **no filesize limit!** ...unless you use Cloudflare, then it's 383.9 GiB

|

||||

* **no filesize limit!** even on Cloudflare

|

||||

* ☑ stash: simple PUT filedropper

|

||||

* ☑ filename randomizer

|

||||

* ☑ write-only folders

|

||||

@@ -250,7 +263,7 @@ also see [comparison to similar software](./docs/versus.md)

|

||||

* ☑ search by name/path/date/size

|

||||

* ☑ [search by ID3-tags etc.](#searching)

|

||||

* client support

|

||||

* ☑ [folder sync](#folder-sync)

|

||||

* ☑ [folder sync](#folder-sync) (one-way only; full sync will never be supported)

|

||||

* ☑ [curl-friendly](https://user-images.githubusercontent.com/241032/215322619-ea5fd606-3654-40ad-94ee-2bc058647bb2.png)

|

||||

* ☑ [opengraph](#opengraph) (discord embeds)

|

||||

* markdown

|

||||

@@ -338,6 +351,9 @@ same order here too

|

||||

|

||||

* [Chrome issue 1352210](https://bugs.chromium.org/p/chromium/issues/detail?id=1352210) -- plaintext http may be faster at filehashing than https (but also extremely CPU-intensive)

|

||||

|

||||

* [Chrome issue 383568268](https://issues.chromium.org/issues/383568268) -- filereaders in webworkers can OOM / crash the browser-tab

|

||||

* copyparty has a workaround which seems to work well enough

|

||||

|

||||

* [Firefox issue 1790500](https://bugzilla.mozilla.org/show_bug.cgi?id=1790500) -- entire browser can crash after uploading ~4000 small files

|

||||

|

||||

* Android: music playback randomly stops due to [battery usage settings](#fix-unreliable-playback-on-android)

|

||||

@@ -345,10 +361,19 @@ same order here too

|

||||

* iPhones: the volume control doesn't work because [apple doesn't want it to](https://developer.apple.com/library/archive/documentation/AudioVideo/Conceptual/Using_HTML5_Audio_Video/Device-SpecificConsiderations/Device-SpecificConsiderations.html#//apple_ref/doc/uid/TP40009523-CH5-SW11)

|

||||

* `AudioContext` will probably never be a viable workaround as apple introduces new issues faster than they fix current ones

|

||||

|

||||

* iPhones: music volume goes on a rollercoaster during song changes

|

||||

* nothing I can do about it because `AudioContext` is still broken in safari

|

||||

|

||||

* iPhones: the preload feature (in the media-player-options tab) can cause a tiny audio glitch 20sec before the end of each song, but disabling it may cause worse iOS bugs to appear instead

|

||||

* just a hunch, but disabling preloading may cause playback to stop entirely, or possibly mess with bluetooth speakers

|

||||

* tried to add a tooltip regarding this but looks like apple broke my tooltips

|

||||

|

||||

* iPhones: preloaded awo files make safari log MEDIA_ERR_NETWORK errors as playback starts, but the song plays just fine so eh whatever

|

||||

* awo, opus-weba, is apple's new take on opus support, replacing opus-caf which was technically limited to cbr opus

|

||||

|

||||

* iPhones: preloading another awo file may cause playback to stop

|

||||

* can be somewhat mitigated with `mp.au.play()` in `mp.onpreload` but that can hit a race condition in safari that starts playing the same audio object twice in parallel...

|

||||

|

||||

* Windows: folders cannot be accessed if the name ends with `.`

|

||||

* python or windows bug

|

||||

|

||||

@@ -381,6 +406,9 @@ upgrade notes

|

||||

|

||||

"frequently" asked questions

|

||||

|

||||

* can I change the 🌲 spinning pine-tree loading animation?

|

||||

* [yeah...](https://github.com/9001/copyparty/tree/hovudstraum/docs/rice#boring-loader-spinner) :-(

|

||||

|

||||

* is it possible to block read-access to folders unless you know the exact URL for a particular file inside?

|

||||

* yes, using the [`g` permission](#accounts-and-volumes), see the examples there

|

||||

* you can also do this with linux filesystem permissions; `chmod 111 music` will make it possible to access files and folders inside the `music` folder but not list the immediate contents -- also works with other software, not just copyparty

|

||||

@@ -403,6 +431,14 @@ upgrade notes

|

||||

* copyparty seems to think I am using http, even though the URL is https

|

||||

* your reverse-proxy is not sending the `X-Forwarded-Proto: https` header; this could be because your reverse-proxy itself is confused. Ensure that none of the intermediates (such as cloudflare) are terminating https before the traffic hits your entrypoint

|

||||

|

||||

* thumbnails are broken (you get a colorful square which says the filetype instead)

|

||||

* you need to install `FFmpeg` or `Pillow`; see [thumbnails](#thumbnails)

|

||||

|

||||

* thumbnails are broken (some images appear, but other files just get a blank box, and/or the broken-image placeholder)

|

||||

* probably due to a reverse-proxy messing with the request URLs and stripping the query parameters (`?th=w`), so check your URL rewrite rules

|

||||

* could also be due to incorrect caching settings in reverse-proxies and/or CDNs, so make sure that nothing is set to ignore the query string

|

||||

* could also be due to misbehaving privacy-related browser extensions, so try to disable those

|

||||

|

||||

* i want to learn python and/or programming and am considering looking at the copyparty source code in that occasion

|

||||

* ```bash

|

||||

_| _ __ _ _|_

|

||||

@@ -427,7 +463,7 @@ configuring accounts/volumes with arguments:

|

||||

|

||||

permissions:

|

||||

* `r` (read): browse folder contents, download files, download as zip/tar, see filekeys/dirkeys

|

||||

* `w` (write): upload files, move files *into* this folder

|

||||

* `w` (write): upload files, move/copy files *into* this folder

|

||||

* `m` (move): move files/folders *from* this folder

|

||||

* `d` (delete): delete files/folders

|

||||

* `.` (dots): user can ask to show dotfiles in directory listings

|

||||

@@ -455,6 +491,40 @@ examples:

|

||||

|

||||

anyone trying to bruteforce a password gets banned according to `--ban-pw`; default is 24h ban for 9 failed attempts in 1 hour

|

||||

|

||||

and if you want to use config files instead of commandline args (good!) then here's the same examples as a configfile; save it as `foobar.conf` and use it like this: `python copyparty-sfx.py -c foobar.conf`

|

||||

|

||||

```yaml

|

||||

[accounts]

|

||||

u1: p1 # create account "u1" with password "p1"

|

||||

u2: p2 # (note that comments must have

|

||||

u3: p3 # two spaces before the # sign)

|

||||

|

||||

[/] # this URL will be mapped to...

|

||||

/srv # ...this folder on the server filesystem

|

||||

accs:

|

||||

r: * # read-only for everyone, no account necessary

|

||||

|

||||

[/music] # create another volume at this URL,

|

||||

/mnt/music # which is mapped to this folder

|

||||

accs:

|

||||

r: u1, u2 # only these accounts can read,

|

||||

rw: u3 # and only u3 can read-write

|

||||

|

||||

[/inc]

|

||||

/mnt/incoming

|

||||

accs:

|

||||

w: u1 # u1 can upload but not see/download any files,

|

||||

rm: u2 # u2 can browse + move files out of this volume

|

||||

|

||||

[/i]

|

||||

/mnt/ss

|

||||

accs:

|

||||

rw: u1 # u1 can read-write,

|

||||

g: * # everyone can access files if they know the URL

|

||||

flags:

|

||||

fk: 4 # each file URL will have a 4-character password

|

||||

```
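to get a feel for how the permission letters in the `accs:` entries combine, here is a minimal python sketch; it is not copyparty's actual parser, the names `PERMS` and `describe` are made up for illustration, and the descriptions are shortened from the permission list in this README

```python
# hypothetical helper, NOT part of copyparty;
# the letters are the ones from the permission list in this README
PERMS = {
    "r": "read",
    "w": "write",
    "m": "move",
    "d": "delete",
    ".": "dots",
    "g": "get (access a file only if you know its URL)",
}


def describe(spec: str) -> list[str]:
    """Expand a permission string such as "rm" into the permissions it grants."""
    unknown = [c for c in spec if c not in PERMS]
    if unknown:
        raise ValueError(f"unknown permission letter(s): {unknown}")
    return [PERMS[c] for c in spec]


if __name__ == "__main__":
    print(describe("rm"))  # the permissions u2 has in the /inc volume
    print(describe("r."))  # read-access plus dotfiles
```

so `rm: u2` in the `/inc` volume reads as "u2 gets read and move", which matches the comment in the config file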

|

||||

|

||||

|

||||

## shadowing

|

||||

|

||||

@@ -462,6 +532,8 @@ hiding specific subfolders by mounting another volume on top of them

|

||||

|

||||

for example `-v /mnt::r -v /var/empty:web/certs:r` mounts the server folder `/mnt` as the webroot, but another volume is mounted at `/web/certs` -- so visitors can only see the contents of `/mnt` and `/mnt/web` (at URLs `/` and `/web`), but not `/mnt/web/certs` because URL `/web/certs` is mapped to `/var/empty`

|

||||

|

||||

the example config file right above this section may explain this better; the first volume `/` is mapped to `/srv` which means http://127.0.0.1:3923/music would try to read `/srv/music` on the server filesystem, but since there's another volume at `/music` mapped to `/mnt/music` then it'll go to `/mnt/music` instead
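the lookup described above can be sketched as a longest-prefix match; this is only an illustration under the assumption that the most specific volume wins, and the `VOLUMES` table and `resolve` function are hypothetical names, not copyparty internals

```python
from pathlib import PurePosixPath

# hypothetical volume table mirroring the example config: URL -> server folder
VOLUMES = {
    "/": "/srv",
    "/music": "/mnt/music",
}


def resolve(url_path: str) -> str:
    """Map a URL path to a filesystem path using the longest matching volume."""
    url = PurePosixPath(url_path)
    candidates = [
        v
        for v in VOLUMES
        if url == PurePosixPath(v) or PurePosixPath(v) in url.parents
    ]
    best = max(candidates, key=len)  # the most specific volume shadows the rest
    rel = url.relative_to(best)
    return str(PurePosixPath(VOLUMES[best]) / rel)


if __name__ == "__main__":
    print(resolve("/music/song.flac"))
    print(resolve("/docs/readme.txt"))
```

with this table, anything below `/music` lands in `/mnt/music`, and `/srv/music` is never consulted; that is the shadowing effect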

|

||||

|

||||

|

||||

## dotfiles

|

||||

|

||||

@@ -473,6 +545,19 @@ a client can request to see dotfiles in directory listings if global option `-ed

|

||||

|

||||

dotfiles do not appear in search results unless one of the above is true, **and** the global option / volflag `dotsrch` is set

|

||||

|

||||

config file example, where the same permission to see dotfiles is given in two different ways just for reference:

|

||||

|

||||

```yaml

|

||||

[/foo]

|

||||

/srv/foo

|

||||

accs:

|

||||

r.: ed # user "ed" has read-access + dot-access in this volume;

|

||||

# dotfiles are visible in listings, but not in searches

|

||||

flags:

|

||||

dotsrch # dotfiles will now appear in search results too

|

||||

dots # another way to let everyone see dotfiles in this vol

|

||||

```

|

||||

|

||||

|

||||

# the browser

|

||||

|

||||

@@ -507,7 +592,8 @@ the browser has the following hotkeys (always qwerty)

|

||||

* `ESC` close various things

|

||||

* `ctrl-K` delete selected files/folders

|

||||

* `ctrl-X` cut selected files/folders

|

||||

* `ctrl-V` paste

|

||||

* `ctrl-C` copy selected files/folders to clipboard

|

||||

* `ctrl-V` paste (move/copy)

|

||||

* `Y` download selected files

|

||||

* `F2` [rename](#batch-rename) selected file/folder

|

||||

* when a file/folder is selected (in not-grid-view):

|

||||

@@ -576,12 +662,14 @@ click the `🌲` or pressing the `B` hotkey to toggle between breadcrumbs path (

|

||||

|

||||

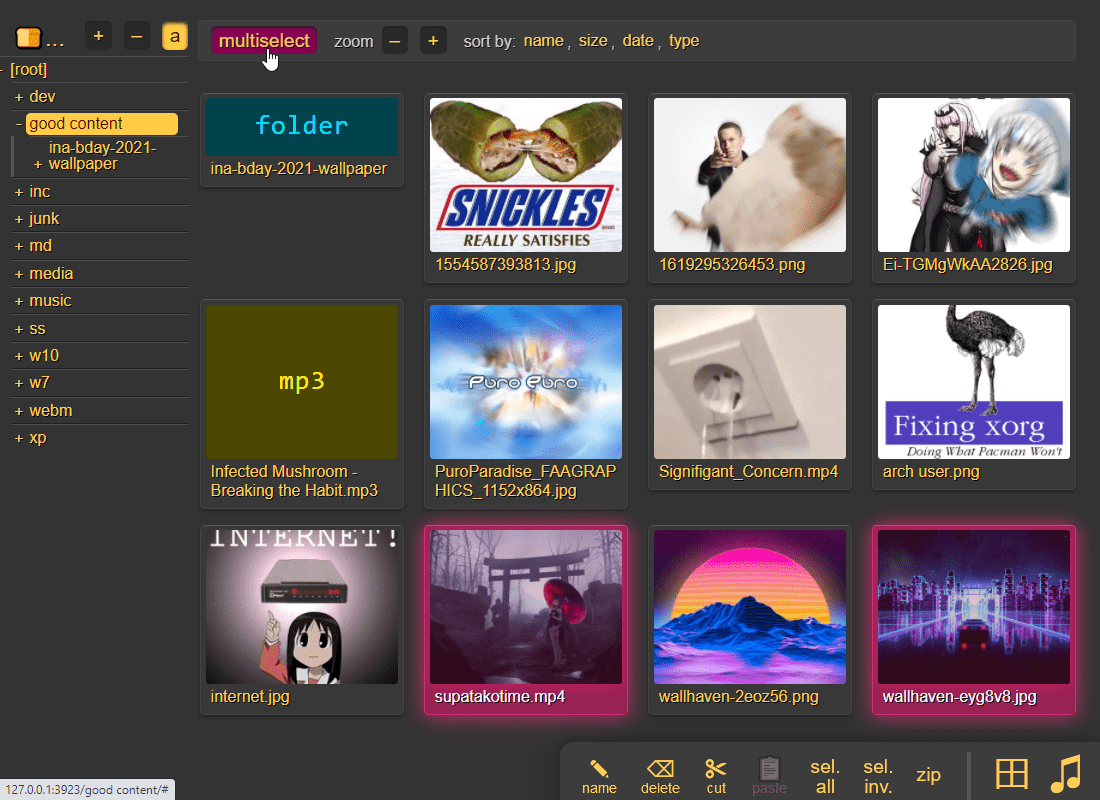

press `g` or `田` to toggle grid-view instead of the file listing and `t` toggles icons / thumbnails

|

||||

* can be made default globally with `--grid` or per-volume with volflag `grid`

|

||||

* enable by adding `?imgs` to a link, or disable with `?imgs=0`

|

||||

|

||||

|

||||

|

||||

it does static images with Pillow / pyvips / FFmpeg, and uses FFmpeg for video files, so you may want to `--no-thumb` or maybe just `--no-vthumb` depending on how dangerous your users are

|

||||

* pyvips is 3x faster than Pillow, Pillow is 3x faster than FFmpeg

|

||||

* disable thumbnails for specific volumes with volflag `dthumb` for all, or `dvthumb` / `dathumb` / `dithumb` for video/audio/images only

|

||||

* for installing FFmpeg on windows, see [optional dependencies](#optional-dependencies)

|

||||

|

||||

audio files are converted into spectrograms using FFmpeg unless you `--no-athumb` (and some FFmpeg builds may need `--th-ff-swr`)

|

||||

|

||||

@@ -593,6 +681,26 @@ enabling `multiselect` lets you click files to select them, and then shift-click

|

||||

* `multiselect` is mostly intended for phones/tablets, but the `sel` option in the `[⚙️] settings` tab is better suited for desktop use, allowing selection by CTRL-clicking and range-selection with SHIFT-click, all without affecting regular clicking

|

||||

* the `sel` option can be made default globally with `--gsel` or per-volume with volflag `gsel`

|

||||

|

||||

to show `/icons/exe.png` as the thumbnail for all .exe files, `--ext-th=exe=/icons/exe.png` (optionally as a volflag)

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

no-thumb # disable ALL thumbnails and audio transcoding

|

||||

no-vthumb # only disable video thumbnails

|

||||

|

||||

[/music]

|

||||

/mnt/nas/music

|

||||

accs:

|

||||

r: * # everyone can read

|

||||

flags:

|

||||

dthumb # disable ALL thumbnails and audio transcoding

|

||||

dvthumb # only disable video thumbnails

|

||||

ext-th: exe=/ico/exe.png # /ico/exe.png is the thumbnail of *.exe

|

||||

th-covers: folder.png,folder.jpg,cover.png,cover.jpg # the default

|

||||

```
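the `ext-th` mapping is essentially "file extension to thumbnail URL"; a minimal python sketch of that idea, assuming one `ext=url` pair per value and case-insensitive extensions (both are assumptions of this sketch, and `parse_ext_th` / `static_thumb` are hypothetical names):

```python
import os


def parse_ext_th(values):
    """Turn values like "exe=/ico/exe.png" into {"exe": "/ico/exe.png"}."""
    table = {}
    for value in values:
        ext, sep, url = value.partition("=")
        if not sep or not ext or not url:
            raise ValueError(f"expected ext=url, got {value!r}")
        table[ext.lower()] = url
    return table


def static_thumb(filename, table):
    """Return the fixed thumbnail URL for this file, or None if there is none."""
    ext = os.path.splitext(filename)[1].lstrip(".").lower()
    return table.get(ext)


if __name__ == "__main__":
    table = parse_ext_th(["exe=/ico/exe.png"])
    print(static_thumb("setup.exe", table))
```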

|

||||

|

||||

|

||||

## zip downloads

|

||||

|

||||

@@ -606,8 +714,8 @@ select which type of archive you want in the `[⚙️] config` tab:

|

||||

| `pax` | `?tar=pax` | pax-format tar, futureproof, not as fast |

|

||||

| `tgz` | `?tar=gz` | gzip compressed gnu-tar (slow), for `curl \| tar -xvz` |

|

||||

| `txz` | `?tar=xz` | gnu-tar with xz / lzma compression (v.slow) |

|

||||

| `zip` | `?zip=utf8` | works everywhere, glitchy filenames on win7 and older |

|

||||

| `zip_dos` | `?zip` | traditional cp437 (no unicode) to fix glitchy filenames |

|

||||

| `zip` | `?zip` | works everywhere, glitchy filenames on win7 and older |

|

||||

| `zip_dos` | `?zip=dos` | traditional cp437 (no unicode) to fix glitchy filenames |

|

||||

| `zip_crc` | `?zip=crc` | cp437 with crc32 computed early for truly ancient software |

|

||||

|

||||

* gzip default level is `3` (0=fast, 9=best), change with `?tar=gz:9`

|

||||

@@ -615,7 +723,7 @@ select which type of archive you want in the `[⚙️] config` tab:

|

||||

* bz2 default level is `2` (1=fast, 9=best), change with `?tar=bz2:9`

|

||||

* hidden files ([dotfiles](#dotfiles)) are excluded unless account is allowed to list them

|

||||

* `up2k.db` and `dir.txt` are always excluded

|

||||

* bsdtar supports streaming unzipping: `curl foo?zip=utf8 | bsdtar -xv`

|

||||

* bsdtar supports streaming unzipping: `curl foo?zip | bsdtar -xv`

|

||||

* good, because copyparty's zip is faster than tar on small files

|

||||

* `zip_crc` will take longer to download since the server has to read each file twice

|

||||

* this is only to support MS-DOS PKZIP v2.04g (october 1993) and older
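if you want to build these download links from a script, something like the following python sketch could work; the query strings are copied from the table above (the new-style `?zip` / `?zip=dos` values), while `ARCHIVE_QUERY` and `archive_url` are hypothetical names, and the sketch only handles a compression level for `tgz`

```python
# query strings taken from the archive-format table in this README
ARCHIVE_QUERY = {
    "pax": "tar=pax",
    "tgz": "tar=gz",
    "txz": "tar=xz",
    "zip": "zip",
    "zip_dos": "zip=dos",
    "zip_crc": "zip=crc",
}


def archive_url(folder_url, kind, level=None):
    """Build the URL for downloading a folder as an archive of the given kind."""
    query = ARCHIVE_QUERY[kind]
    if level is not None:
        if kind != "tgz":
            raise ValueError("this sketch only handles a level for tgz")
        if not 0 <= level <= 9:
            raise ValueError("gzip level must be between 0 and 9")
        query += f":{level}"  # for example ?tar=gz:9
    return f"{folder_url}?{query}"


if __name__ == "__main__":
    print(archive_url("http://127.0.0.1:3923/music/", "tgz", 9))
    print(archive_url("http://127.0.0.1:3923/music/", "zip"))
```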

|

||||

@@ -639,7 +747,7 @@ dragdrop is the recommended way, but you may also:

|

||||

|

||||

* select some files (not folders) in your file explorer and press CTRL-V inside the browser window

|

||||

* use the [command-line uploader](https://github.com/9001/copyparty/tree/hovudstraum/bin#u2cpy)

|

||||

* upload using [curl or sharex](#client-examples)

|

||||

* upload using [curl, sharex, ishare, ...](#client-examples)

|

||||

|

||||

when uploading files through dragdrop or CTRL-V, this initiates an upload using `up2k`; there are two browser-based uploaders available:

|

||||

* `[🎈] bup`, the basic uploader, supports almost every browser since netscape 4.0

|

||||

@@ -654,7 +762,7 @@ up2k has several advantages:

|

||||

* uploads resume if you reboot your browser or pc, just upload the same files again

|

||||

* server detects any corruption; the client reuploads affected chunks

|

||||

* the client doesn't upload anything that already exists on the server

|

||||

* no filesize limit unless imposed by a proxy, for example Cloudflare, which blocks uploads over 383.9 GiB

|

||||

* no filesize limit, even when a proxy limits the request size (for example Cloudflare)

|

||||

* much higher speeds than ftp/scp/tarpipe on some internet connections (mainly american ones) thanks to parallel connections

|

||||

* the last-modified timestamp of the file is preserved

|

||||

|

||||

@@ -673,8 +781,11 @@ the up2k UI is the epitome of polished intuitive experiences:

|

||||

* "parallel uploads" specifies how many chunks to upload at the same time

|

||||

* `[🏃]` analysis of other files should continue while one is uploading

|

||||

* `[🥔]` shows a simpler UI for faster uploads from slow devices

|

||||

* `[🛡️]` decides when to overwrite existing files on the server

|

||||

* `🛡️` = never (generate a new filename instead)

|

||||

* `🕒` = overwrite if the server-file is older

|

||||

* `♻️` = always overwrite if the files are different

|

||||

* `[🎲]` generate random filenames during upload

|

||||

* `[📅]` preserve last-modified timestamps; server times will match yours

|

||||

* `[🔎]` switch between upload and [file-search](#file-search) mode

|

||||

* ignore `[🔎]` if you add files by dragging them into the browser
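the three overwrite modes behind `[🛡️]` can be read as a small decision function; the python sketch below is only a guess at the logic based on the descriptions above, not the actual up2k code, and the policy names `never` / `older` / `always` as well as the function name are hypothetical

```python
def should_overwrite(policy, client_mtime, server_mtime, same_content):
    """Decide whether an upload replaces the file that already exists on the server.

    policy:
      "never"  -> keep the server file (a new filename would be generated instead)
      "older"  -> overwrite only if the server file is older than the upload
      "always" -> overwrite whenever the two files differ
    """
    if policy == "never":
        return False
    if policy == "older":
        return server_mtime < client_mtime
    if policy == "always":
        return not same_content
    raise ValueError(f"unknown policy: {policy!r}")


if __name__ == "__main__":
    # the server copy is from t=100, the local copy from t=200
    print(should_overwrite("older", client_mtime=200, server_mtime=100, same_content=False))
```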

|

||||

|

||||

@@ -690,6 +801,8 @@ note that since up2k has to read each file twice, `[🎈] bup` can *theoreticall

|

||||

|

||||

if you are resuming a massive upload and want to skip hashing the files which already finished, you can enable `turbo` in the `[⚙️] config` tab, but please read the tooltip on that button

|

||||

|

||||

if the server is behind a proxy which imposes a request-size limit, you can configure up2k to sneak below the limit with server-option `--u2sz` (the default is 96 MiB to support Cloudflare)

|

||||

|

||||

|

||||

### file-search

|

||||

|

||||

@@ -709,12 +822,20 @@ files go into `[ok]` if they exist (and you get a link to where it is), otherwis

|

||||

|

||||

### unpost

|

||||

|

||||

undo/delete accidental uploads

|

||||

undo/delete accidental uploads using the `[🧯]` tab in the UI

|

||||

|

||||

|

||||

|

||||

you can unpost even if you don't have regular move/delete access, however only for files uploaded within the past `--unpost` seconds (default 12 hours) and the server must be running with `-e2d`

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

e2d # enable up2k database (remember uploads)

|

||||

unpost: 43200 # 12 hours (default)

|

||||

```
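the time window can be sketched in a few lines of python; this is only an illustration of the arithmetic, not how copyparty implements it, and `can_unpost` / `UNPOST_SECONDS` are hypothetical names:

```python
import time

# 12 hours expressed in seconds, matching the default value of --unpost
UNPOST_SECONDS = 12 * 60 * 60


def can_unpost(uploaded_at, now=None, window=UNPOST_SECONDS):
    """return True if the upload is still inside the unpost window.

    uploaded_at and now are unix timestamps (seconds);
    hypothetical helper, for illustration only
    """
    if now is None:
        now = time.time()
    return 0 <= now - uploaded_at <= window


print(UNPOST_SECONDS)
```

so raising `unpost` in the config widens the window, and an upload older than the window needs regular delete-access to remove.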

|

||||

|

||||

|

||||

### self-destruct

|

||||

|

||||

@@ -753,10 +874,11 @@ file selection: click somewhere on the line (not the link itself), then:

|

||||

* shift-click another line for range-select

|

||||

|

||||

* cut: select some files and `ctrl-x`

|

||||

* copy: select some files and `ctrl-c`

|

||||

* paste: `ctrl-v` in another folder

|

||||

* rename: `F2`

|

||||

|

||||

you can move files across browser tabs (cut in one tab, paste in another)

|

||||

you can copy/move files across browser tabs (cut/copy in one tab, paste in another)

|

||||

|

||||

|

||||

## shares

|

||||

@@ -843,6 +965,52 @@ or a mix of both:

|

||||

the metadata keys you can use in the format field are the ones in the file-browser table header (whatever is collected with `-mte` and `-mtp`)

|

||||

|

||||

|

||||

## rss feeds

|

||||

|

||||

monitor a folder with your RSS reader, optionally recursive

|

||||

|

||||

must be enabled per-volume with volflag `rss` or globally with `--rss`

|

||||

|

||||

the feed includes itunes metadata for use with podcast readers such as [AntennaPod](https://antennapod.org/)

|

||||

|

||||

a feed example: https://cd.ocv.me/a/d2/d22/?rss&fext=mp3

|

||||

|

||||

url parameters:

|

||||

|

||||

* `pw=hunter2` for password auth

|

||||

* `recursive` to also include subfolders

|

||||

* `title=foo` changes the feed title (default: folder name)

|

||||

* `fext=mp3,opus` only include mp3 and opus files (default: all)

|

||||

* `nf=30` only show the first 30 results (default: 250)

|

||||

* `sort=m` sort by mtime (file last-modified), newest first (default)

|

||||

* `u` = upload-time; NOTE: non-uploaded files have upload-time `0`

|

||||

* `n` = filename

|

||||

* `a` = filesize

|

||||

* uppercase = reverse-sort; `M` = oldest file first
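the url parameters above can be combined freely; here is a small python sketch that assembles such a feed url. the helper name `rss_url` and the `example.com` address are made up for illustration, and the value-less handling of `rss` / `recursive` is assumed from the feed example above:

```python
from urllib.parse import urlencode


def rss_url(folder_url, recursive=False, **params):
    """append ?rss plus optional feed parameters to a folder url.

    hypothetical helper; `rss` and `recursive` are added as
    value-less flags, everything else as key=value pairs
    """
    flags = ["rss"]
    if recursive:
        flags.append("recursive")
    query = "&".join(flags)
    if params:
        # keep commas readable so fext=mp3,opus stays as-is
        query += "&" + urlencode(params, safe=",")
    return folder_url.rstrip("/") + "/?" + query


print(rss_url("https://example.com/music", fext="mp3,opus", nf=30, sort="M"))
```

this should give a url for the 30 oldest mp3/opus files in the folder, which can then be pasted into an RSS reader.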

|

||||

|

||||

|

||||

## recent uploads

|

||||

|

||||

list all recent uploads by clicking "show recent uploads" in the controlpanel

|

||||

|

||||

will show uploader IP and upload-time if the visitor has the admin permission

|

||||

|

||||

* global-option `--ups-when` makes upload-time visible to all users, and not just admins

|

||||

|

||||

* global-option `--ups-who` (volflag `ups_who`) specifies who gets access (0=nobody, 1=admins, 2=everyone), default=2

|

||||

|

||||

note that the [🧯 unpost](#unpost) feature is better suited for viewing *your own* recent uploads, as it includes the option to undo/delete them

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

ups-when # everyone can see upload times

|

||||

ups-who: 1 # but only admins can see the list,

|

||||

# so ups-when doesn't take effect

|

||||

```

|

||||

|

||||

|

||||

## media player

|

||||

|

||||

plays almost every audio format there is (if the server has FFmpeg installed for on-demand transcoding)

|

||||

@@ -879,6 +1047,11 @@ open the `[🎺]` media-player-settings tab to configure it,

|

||||

* `[aac]` converts `aac` and `m4a` files into opus (if supported by browser) or mp3

|

||||

* `[oth]` converts all other known formats into opus (if supported by browser) or mp3

|

||||

* `aac|ac3|aif|aiff|alac|alaw|amr|ape|au|dfpwm|dts|flac|gsm|it|m4a|mo3|mod|mp2|mp3|mpc|mptm|mt2|mulaw|ogg|okt|opus|ra|s3m|tak|tta|ulaw|wav|wma|wv|xm|xpk`

|

||||

* "transcode to":

|

||||

* `[opus]` produces an `opus` whenever transcoding is necessary (the best choice on Android and PCs)

|

||||

* `[awo]` is `opus` in a `weba` file, good for iPhones (iOS 17.5 and newer) but Apple is still fixing some state-confusion bugs as of iOS 18.2.1

|

||||

* `[caf]` is `opus` in a `caf` file, good for iPhones (iOS 11 through 17), technically unsupported by Apple but works for the most part

|

||||

* `[mp3]` -- the myth, the legend, the undying master of mediocre sound quality that definitely works everywhere

|

||||

* "tint" reduces the contrast of the playback bar

|

||||

|

||||

|

||||

@@ -981,7 +1154,16 @@ using arguments or config files, or a mix of both:

|

||||

|

||||

announce enabled services on the LAN ([pic](https://user-images.githubusercontent.com/241032/215344737-0eae8d98-9496-4256-9aa8-cd2f6971810d.png)) -- `-z` enables both [mdns](#mdns) and [ssdp](#ssdp)

|

||||

|

||||

* `--z-on` / `--z-off`' limits the feature to certain networks

|

||||

* `--z-on` / `--z-off` limits the feature to certain networks

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

z # enable all zeroconf features (mdns, ssdp)

|

||||

zm # only enables mdns (does nothing since we already have z)

|

||||

z-on: 192.168.0.0/16, 10.1.2.0/24 # restrict to certain subnets

|

||||

```

|

||||

|

||||

|

||||

### mdns

|

||||

@@ -1059,6 +1241,8 @@ on macos, connect from finder:

|

||||

|

||||

in order to grant full write-access to webdav clients, the volflag `daw` must be set and the account must also have delete-access (otherwise the client won't be allowed to replace the contents of existing files, which is how webdav works)

|

||||

|

||||

> note: if you have enabled [IdP authentication](#identity-providers) then that may cause issues for some/most webdav clients; see [the webdav section in the IdP docs](https://github.com/9001/copyparty/blob/hovudstraum/docs/idp.md#connecting-webdav-clients)

|

||||

|

||||

|

||||

### connecting to webdav from windows

|

||||

|

||||

@@ -1067,11 +1251,12 @@ using the GUI (winXP or later):

|

||||

* on winXP only, click the `Sign up for online storage` hyperlink instead and put the URL there

|

||||

* providing your password as the username is recommended; the password field can be anything or empty

|

||||

|

||||

known client bugs:

|

||||

the webdav client that's built into windows has the following list of bugs; you can avoid all of these by connecting with rclone instead:

|

||||

* win7+ doesn't actually send the password to the server when reauthenticating after a reboot unless you first try to login with an incorrect password and then switch to the correct password

|

||||

* or just type your password into the username field instead to get around it entirely

|

||||

* connecting to a folder which allows anonymous read will make writing impossible, as windows has decided it doesn't need to login

|

||||

* workaround: connect twice; first to a folder which requires auth, then to the folder you actually want, and leave both of those mounted

|

||||

* or set the server-option `--dav-auth` to force password-auth for all webdav clients

|

||||

* win7+ may open a new tcp connection for every file and sometimes forgets to close them, eventually needing a reboot

|

||||

* maybe NIC-related (??), happens with win10-ltsc on e1000e but not virtio

|

||||

* windows cannot access folders which contain filenames with invalid unicode or forbidden characters (`<>:"/\|?*`), or names ending with `.`

|

||||

@@ -1119,7 +1304,7 @@ dependencies: `python3 -m pip install --user -U impacket==0.11.0`

|

||||

|

||||

some **BIG WARNINGS** specific to SMB/CIFS, in decreasing importance:

|

||||

* not entirely confident that read-only is read-only

|

||||

* the smb backend is not fully integrated with vfs, meaning there could be security issues (path traversal). Please use `--smb-port` (see below) and [prisonparty](./bin/prisonparty.sh)

|

||||

* the smb backend is not fully integrated with vfs, meaning there could be security issues (path traversal). Please use `--smb-port` (see below) and [prisonparty](./bin/prisonparty.sh) or [bubbleparty](./bin/bubbleparty.sh)

|

||||

* account passwords work per-volume as expected, and so do account permissions (read/write/move/delete), but `--smbw` must be given to allow write-access from smb

|

||||

* [shadowing](#shadowing) probably works as expected but no guarantees

|

||||

|

||||

@@ -1205,6 +1390,18 @@ advantages of using symlinks (default):

|

||||

|

||||

global-option `--xlink` / volflag `xlink` additionally enables deduplication across volumes, but this is probably buggy and not recommended

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

e2dsa # scan and index filesystem on startup

|

||||

dedup # symlink-based deduplication for all volumes

|

||||

|

||||

[/media]

|

||||

/mnt/nas/media

|

||||

flags:

|

||||

hardlinkonly # this vol does hardlinks instead of symlinks

|

||||

```

|

||||

|

||||

|

||||

## file indexing

|

||||

@@ -1236,19 +1433,41 @@ note:

|

||||

* `e2tsr` is probably always overkill, since `e2ds`/`e2dsa` would pick up any file modifications and `e2ts` would then reindex those, unless there is a new copyparty version with new parsers and the release note says otherwise

|

||||

* the rescan button in the admin panel has no effect unless the volume has `-e2ds` or higher

|

||||

|

||||

config file example (these options are recommended btw):

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

e2dsa # scan and index all files in all volumes on startup

|

||||

e2ts # check newly-discovered or uploaded files for media tags

|

||||

```

|

||||

|

||||

### exclude-patterns

|

||||

|

||||

to save some time, you can provide a regex pattern for filepaths to only index by filename/path/size/last-modified (and not the hash of the file contents) by setting `--no-hash \.iso$` or the volflag `:c,nohash=\.iso$`, this has the following consequences:

|

||||

to save some time, you can provide a regex pattern for filepaths to only index by filename/path/size/last-modified (and not the hash of the file contents) by setting `--no-hash '\.iso$'` or the volflag `:c,nohash=\.iso$`, this has the following consequences:

|

||||

* initial indexing is way faster, especially when the volume is on a network disk

|

||||

* makes it impossible to [file-search](#file-search)

|

||||

* if someone uploads the same file contents, the upload will not be detected as a dupe, so it will not get symlinked or rejected

|

||||

|

||||

similarly, you can fully ignore files/folders using `--no-idx [...]` and `:c,noidx=\.iso$`

|

||||

|

||||

NOTE: `no-idx` and/or `no-hash` prevents deduplication of those files

|

||||

|

||||

* when running on macos, all the usual apple metadata files are excluded by default

|

||||

|

||||

if you set `--no-hash [...]` globally, you can enable hashing for specific volumes using flag `:c,nohash=`

|

||||

|

||||

to exclude certain filepaths from search-results, use `--srch-excl` or volflag `srch_excl` instead of `--no-idx`, for example `--srch-excl 'password|logs/[0-9]'`

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[/games]

|

||||

/mnt/nas/games

|

||||

flags:

|

||||

noidx: \.iso$ # skip indexing iso-files

|

||||

srch_excl: password|logs/[0-9] # filter search results

|

||||

```
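to try out a pattern before putting it in the config, something like the following python sketch can help. it assumes the patterns are applied as an unanchored regex search against the filepath (an assumption for this illustration, not a statement about copyparty internals), and the sample paths are made up:

```python
import re

# the two patterns from the config example above
NO_IDX = re.compile(r"\.iso$")
SRCH_EXCL = re.compile(r"password|logs/[0-9]")


def matches(pattern, path):
    """True if the pattern is found anywhere in the path (unanchored search)"""
    return pattern.search(path) is not None


# hypothetical filepaths, just to show what each pattern catches
for path in ["games/doom.iso", "games/doom.iso.txt", "etc/passwords.txt",
             "logs/2024/a.log", "logs/readme.md"]:
    print(path, matches(NO_IDX, path), matches(SRCH_EXCL, path))
```

note that `\.iso$` only hits names ending in `.iso`, while `password|logs/[0-9]` hits any path containing `password`, or `logs/` followed by a digit.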

|

||||

|

||||

### filesystem guards

|

||||

|

||||

avoid traversing into other filesystems using `--xdev` / volflag `:c,xdev`, skipping any symlinks or bind-mounts to another HDD for example

|

||||

@@ -1269,6 +1488,20 @@ argument `--re-maxage 60` will rescan all volumes every 60 sec, same as volflag

|

||||

|

||||

uploads are disabled while a rescan is happening, so rescans will be delayed by `--db-act` (default 10 sec) when there is write-activity going on (uploads, renames, ...)

|

||||

|

||||

note: folder-thumbnails are selected during filesystem indexing, so periodic rescans can be used to keep them accurate as images are uploaded/deleted (or manually do a rescan with the `reload` button in the controlpanel)

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

re-maxage: 3600

|

||||

|

||||

[/pics]

|

||||

/mnt/nas/pics

|

||||

flags:

|

||||

scan: 900

|

||||

```

|

||||

|

||||

|

||||

## upload rules

|

||||

|

||||

@@ -1294,6 +1527,26 @@ you can also set transaction limits which apply per-IP and per-volume, but these

|

||||

notes:

|

||||

* `vmaxb` and `vmaxn` require either the `e2ds` volflag or `-e2dsa` global-option

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[/inc]

|

||||

/mnt/nas/uploads

|

||||

accs:

|

||||

w: * # anyone can upload here

|

||||

rw: ed # only user "ed" can read-write

|

||||

flags:

|

||||

e2ds: # filesystem indexing is required for many of these:

|

||||

sz: 1k-3m # accept upload only if filesize in this range

|

||||

df: 4g # free disk space cannot go lower than this

|

||||

vmaxb: 1g # volume can never exceed 1 GiB

|

||||

vmaxn: 4k # ...or 4000 files, whichever comes first

|

||||

nosub # must upload to toplevel folder

|

||||

lifetime: 300 # uploads are deleted after 5min

|

||||

maxn: 250,3600 # each IP can upload 250 files in 1 hour

|

||||

maxb: 1g,300 # each IP can upload 1 GiB over 5 minutes

|

||||

```

|

||||

|

||||

|

||||

## compress uploads

|

||||

|

||||

@@ -1339,10 +1592,24 @@ this can instead be kept in a single place using the `--hist` argument, or the `

|

||||

* `--hist ~/.cache/copyparty -v ~/music::r:c,hist=-` sets `~/.cache/copyparty` as the default place to put volume info, but `~/music` gets the regular `.hist` subfolder (`-` restores default behavior)

|

||||

|

||||

note:

|

||||

* putting the hist-folders on an SSD is strongly recommended for performance

|

||||

* markdown edits are always stored in a local `.hist` subdirectory

|

||||

* on windows the volflag path is cyglike, so `/c/temp` means `C:\temp` but use regular paths for `--hist`

|

||||

* you can use cygpaths for volumes too, `-v C:\Users::r` and `-v /c/users::r` both work

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

hist: ~/.cache/copyparty # put db/thumbs/etc. here by default

|

||||

|

||||

[/pics]

|

||||

/mnt/nas/pics

|

||||

flags:

|

||||

hist: - # restore the default (/mnt/nas/pics/.hist/)

|

||||

hist: /mnt/nas/cache/pics/ # can be absolute path

|

||||

```

|

||||

|

||||

|

||||

## metadata from audio files

|

||||

|

||||

@@ -1394,6 +1661,18 @@ copyparty can invoke external programs to collect additional metadata for files

|

||||

|

||||

if something doesn't work, try `--mtag-v` for verbose error messages

|

||||

|

||||

config file example; note that `mtp` is an additive option so all of the mtp options will take effect:

|

||||

|

||||

```yaml

|

||||

[/music]

|

||||

/mnt/nas/music

|

||||

flags:

|

||||

mtp: .bpm=~/bin/audio-bpm.py # assign ".bpm" (numeric) with script

|

||||

mtp: key=f,t5,~/bin/audio-key.py # force/overwrite, 5sec timeout

|

||||

mtp: ext=an,~/bin/file-ext.py # will only run on non-audio files

|

||||

mtp: arch,built,ver,orig=an,eexe,edll,~/bin/exe.py # only exe/dll

|

||||

```

|

||||

|

||||

|

||||

## event hooks

|

||||

|

||||

@@ -1406,12 +1685,51 @@ there's a bunch of flags and stuff, see `--help-hooks`

|

||||

if you want to write your own hooks, see [devnotes](./docs/devnotes.md#event-hooks)

|

||||

|

||||

|

||||

### zeromq

|

||||

|

||||

event-hooks can send zeromq messages instead of running programs

|

||||

|

||||

to send a 0mq message every time a file is uploaded,

|

||||

|

||||

* `--xau zmq:pub:tcp://*:5556` sends a PUB to any/all connected SUB clients

|

||||

* `--xau t3,zmq:push:tcp://*:5557` sends a PUSH to exactly one connected PULL client

|

||||

* `--xau t3,j,zmq:req:tcp://localhost:5555` sends a REQ to the connected REP client

|

||||

|

||||

the PUSH and REQ examples have `t3` (timeout after 3 seconds) because they block if there are no clients to talk to

|

||||

|

||||

* the REQ example does `t3,j` to send extended upload-info as json instead of just the filesystem-path

|

||||

|

||||

see [zmq-recv.py](https://github.com/9001/copyparty/blob/hovudstraum/bin/zmq-recv.py) if you need something to receive the messages with

|

||||

|

||||

config file example; note that the hooks are additive options, so all of the xau options will take effect:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

xau: zmq:pub:tcp://*:5556 # send a PUB to any/all connected SUB clients

|

||||

xau: t3,zmq:push:tcp://*:5557 # send PUSH to exactly one connected PULL client

|

||||

xau: t3,j,zmq:req:tcp://localhost:5555 # send REQ to the connected REP client

|

||||

```

|

||||

|

||||

|

||||

### upload events

|

||||

|

||||

the older, more powerful approach ([examples](./bin/mtag/)):

|

||||

|

||||

```

|

||||

-v /mnt/inc:inc:w:c,mte=+x1:c,mtp=x1=ad,kn,/usr/bin/notify-send

|

||||

-v /mnt/inc:inc:w:c,e2d,e2t,mte=+x1:c,mtp=x1=ad,kn,/usr/bin/notify-send

|

||||

```

|

||||

|

||||

that was the commandline example; here's the config file example:

|

||||

|

||||

```yaml

|

||||

[/inc]

|

||||

/mnt/inc

|

||||

accs:

|

||||

w: *

|

||||

flags:

|

||||

e2d, e2t # enable indexing of uploaded files and their tags

|

||||

mte: +x1

|

||||

mtp: x1=ad,kn,/usr/bin/notify-send

|

||||

```

|

||||

|

||||

so filesystem location `/mnt/inc` shared at `/inc`, write-only for everyone, appending `x1` to the list of tags to index (`mte`), and using `/usr/bin/notify-send` to "provide" tag `x1` for any filetype (`ad`) with kill-on-timeout disabled (`kn`)

|

||||

@@ -1425,6 +1743,8 @@ note that this is way more complicated than the new [event hooks](#event-hooks)

|

||||

|

||||

note that it will occupy the parsing threads, so fork anything expensive (or set `kn` to have copyparty fork it for you) -- otoh if you want to intentionally queue/singlethread you can combine it with `--mtag-mt 1`

|

||||

|

||||

for reference, if you were to do this using event hooks instead, it would be like this: `-e2d --xau notify-send,hello,--`

|

||||

|

||||

|

||||

## handlers

|

||||

|

||||

@@ -1432,6 +1752,8 @@ redefine behavior with plugins ([examples](./bin/handlers/))

|

||||

|

||||

replace 404 and 403 errors with something completely different (that's it for now)

|

||||

|

||||

as for client-side stuff, there are [plugins for modifying UI/UX](./contrib/plugins/)

|

||||

|

||||

|

||||

## ip auth

|

||||

|

||||

@@ -1455,7 +1777,9 @@ replace copyparty passwords with oauth and such

|

||||

|

||||

you can disable the built-in password-based login system, and instead replace it with a separate piece of software (an identity provider) which will then handle authenticating / authorizing of users; this makes it possible to login with passkeys / fido2 / webauthn / yubikey / ldap / active directory / oauth / many other single-sign-on contraptions

|

||||

|

||||

a popular choice is [Authelia](https://www.authelia.com/) (config-file based), another one is [authentik](https://goauthentik.io/) (GUI-based, more complex)

|

||||

* the regular config-defined users will be used as a fallback for requests which don't include a valid (trusted) IdP username header

|

||||

|

||||

some popular identity providers are [Authelia](https://www.authelia.com/) (config-file based) and [authentik](https://goauthentik.io/) (GUI-based, more complex)

|

||||

|

||||

there is a [docker-compose example](./docs/examples/docker/idp-authelia-traefik) which is hopefully a good starting point (alternatively see [./docs/idp.md](./docs/idp.md) if you're the DIY type)

|

||||

|

||||

@@ -1491,12 +1815,18 @@ connecting to an aws s3 bucket and similar

|

||||

|

||||

there is no built-in support for this, but you can use FUSE-software such as [rclone](https://rclone.org/) / [geesefs](https://github.com/yandex-cloud/geesefs) / [JuiceFS](https://juicefs.com/en/) to first mount your cloud storage as a local disk, and then let copyparty use (a folder in) that disk as a volume

|

||||

|

||||

you may experience poor upload performance this way, but that can sometimes be fixed by specifying the volflag `sparse` to force the use of sparse files; this has improved the upload speeds from `1.5 MiB/s` to over `80 MiB/s` in one case, but note that you are also more likely to discover funny bugs in your FUSE software this way, so buckle up

|

||||

if copyparty is unable to access the local folder that rclone/geesefs/JuiceFS provides (for example if it looks invisible) then you may need to run rclone with `--allow-other` and/or enable `user_allow_other` in `/etc/fuse.conf`

|

||||

|

||||

you will probably get decent speeds with the default config, however most likely restricted to using one TCP connection per file, so the upload-client won't be able to send multiple chunks in parallel

|

||||

|

||||

> before [v1.13.5](https://github.com/9001/copyparty/releases/tag/v1.13.5) it was recommended to use the volflag `sparse` to force-allow multiple chunks in parallel; this would improve the upload-speed from `1.5 MiB/s` to over `80 MiB/s` at the risk of provoking latent bugs in S3 or JuiceFS. But v1.13.5 added chunk-stitching, so this is now probably much less important. On the contrary, `nosparse` *may* now increase performance in some cases. Please try all three options (default, `sparse`, `nosparse`) as the optimal choice depends on your network conditions and software stack (both the FUSE-driver and cloud-server)

|

||||

|

||||

someone has also tested geesefs in combination with [gocryptfs](https://nuetzlich.net/gocryptfs/) with surprisingly good results, getting 60 MiB/s upload speeds on a gbit line, but JuiceFS won with 80 MiB/s using its built-in encryption

|

||||

|

||||

you may improve performance by specifying larger values for `--iobuf` / `--s-rd-sz` / `--s-wr-sz`

|

||||

|

||||

> if you've experimented with this and made interesting observations, please share your findings so we can add a section with specific recommendations :-)

|

||||

|

||||

|

||||

## hiding from google

|

||||

|

||||

@@ -1619,10 +1949,16 @@ some reverse proxies (such as [Caddy](https://caddyserver.com/)) can automatical

|

||||

|

||||

for improved security (and a 10% performance boost) consider listening on a unix-socket with `-i unix:770:www:/tmp/party.sock` (permission `770` means only members of group `www` can access it)

|

||||

|

||||

example webserver configs:

|

||||

example webserver / reverse-proxy configs:

|

||||

|

||||

* [nginx config](contrib/nginx/copyparty.conf) -- entire domain/subdomain

|

||||

* [apache2 config](contrib/apache/copyparty.conf) -- location-based

|

||||

* [apache config](contrib/apache/copyparty.conf)

|

||||

* caddy uds: `caddy reverse-proxy --from :8080 --to unix///dev/shm/party.sock`

|

||||

* caddy tcp: `caddy reverse-proxy --from :8081 --to http://127.0.0.1:3923`

|

||||

* [haproxy config](contrib/haproxy/copyparty.conf)

|

||||

* [lighttpd subdomain](contrib/lighttpd/subdomain.conf) -- entire domain/subdomain

|

||||

* [lighttpd subpath](contrib/lighttpd/subpath.conf) -- location-based (not optimal, but in case you need it)

|

||||

* [nginx config](contrib/nginx/copyparty.conf) -- recommended

|

||||

* [traefik config](contrib/traefik/copyparty.yaml)

|

||||

|

||||

|

||||

### real-ip

|

||||

@@ -1634,6 +1970,58 @@ if you (and maybe everybody else) keep getting a message that says `thank you fo

|

||||

for most common setups, there should be a helpful message in the server-log explaining what to do, but see [docs/xff.md](docs/xff.md) if you want to learn more, including a quick hack to **just make it work** (which is **not** recommended, but hey...)

|

||||

|

||||

|

||||

### reverse-proxy performance

|

||||

|

||||

most reverse-proxies support connecting to copyparty either using uds/unix-sockets (`/dev/shm/party.sock`, faster/recommended) or using tcp (`127.0.0.1`)

|

||||

|

||||

with copyparty listening on a uds / unix-socket / unix-domain-socket and the reverse-proxy connecting to that:

|

||||

|

||||

| index.html | upload | download | software |

|

||||

| ------------ | ----------- | ----------- | -------- |

|

||||

| 28'900 req/s | 6'900 MiB/s | 7'400 MiB/s | no-proxy |

|

||||

| 18'750 req/s | 3'500 MiB/s | 2'370 MiB/s | haproxy |

|

||||

| 9'900 req/s | 3'750 MiB/s | 2'200 MiB/s | caddy |

|

||||

| 18'700 req/s | 2'200 MiB/s | 1'570 MiB/s | nginx |

|

||||

| 9'700 req/s | 1'750 MiB/s | 1'830 MiB/s | apache |

|

||||

| 9'900 req/s | 1'300 MiB/s | 1'470 MiB/s | lighttpd |

|

||||

|

||||

when connecting the reverse-proxy to `127.0.0.1` instead (the basic and/or old-fashioned way), speeds are a bit worse:

|

||||

|

||||

| index.html | upload | download | software |

|

||||

| ------------ | ----------- | ----------- | -------- |

|

||||

| 21'200 req/s | 5'700 MiB/s | 6'700 MiB/s | no-proxy |

|

||||

| 14'500 req/s | 1'700 MiB/s | 2'170 MiB/s | haproxy |

|

||||

| 11'100 req/s | 2'750 MiB/s | 2'000 MiB/s | traefik |

|

||||

| 8'400 req/s | 2'300 MiB/s | 1'950 MiB/s | caddy |

|

||||

| 13'400 req/s | 1'100 MiB/s | 1'480 MiB/s | nginx |

|

||||

| 8'400 req/s | 1'000 MiB/s | 1'000 MiB/s | apache |

|

||||

| 6'500 req/s | 1'270 MiB/s | 1'500 MiB/s | lighttpd |

|

||||

|

||||

in summary, `haproxy > caddy > traefik > nginx > apache > lighttpd`, and use uds when possible (traefik does not support it yet)

|

||||

|

||||

* if these results are bullshit because my config examples are bad, please submit corrections!
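to put a number on "a bit worse", this python sketch divides the uds upload speed by the tcp upload speed for each proxy that appears in both tables (traefik is left out since it has no uds result). the figures are copied from the tables above; the helper name `uds_speedup` is just for illustration:

```python
# upload speeds in MiB/s, copied from the two tables above
uds = {"haproxy": 3500, "caddy": 3750, "nginx": 2200, "apache": 1750, "lighttpd": 1300}
tcp = {"haproxy": 1700, "caddy": 2300, "nginx": 1100, "apache": 1000, "lighttpd": 1270}


def uds_speedup(uds_speed, tcp_speed):
    """how many times faster the unix-socket result is than the tcp result"""
    return round(uds_speed / tcp_speed, 2)


speedup = {name: uds_speedup(uds[name], tcp[name]) for name in uds}

# print the proxies with the largest uds advantage first
for name, ratio in sorted(speedup.items(), key=lambda kv: -kv[1]):
    print(f"{name:9} {ratio:.2f}x")
```

going by these upload numbers, haproxy and nginx gain the most from uds (roughly 2x) while lighttpd barely changes.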

|

||||

|

||||

|

||||

## permanent cloudflare tunnel

|

||||

|

||||

if you have a domain and want to get your copyparty online real quick, either from your home-PC behind a CGNAT or from a server without an existing [reverse-proxy](#reverse-proxy) setup, one approach is to create a [Cloudflare Tunnel](https://developers.cloudflare.com/cloudflare-one/connections/connect-networks/get-started/) (formerly "Argo Tunnel")

|

||||

|

||||

I'd recommend making a `Locally-managed tunnel` for more control, but if you prefer to make a `Remotely-managed tunnel` then this is currently how:

|

||||

|

||||

* `cloudflare dashboard` » `zero trust` » `networks` » `tunnels` » `create a tunnel` » `cloudflared` » choose a cool `subdomain` and leave the `path` blank, and use `service type` = `http` and `URL` = `127.0.0.1:3923`

|

||||

|

||||

* and if you want to just run the tunnel without installing it, skip the `cloudflared service install BASE64` step and instead do `cloudflared --no-autoupdate tunnel run --token BASE64`

|

||||

|

||||

NOTE: since people will be connecting through cloudflare, as mentioned in [real-ip](#real-ip) you should run copyparty with `--xff-hdr cf-connecting-ip` to detect client IPs correctly

|

||||

|

||||

config file example:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

xff-hdr: cf-connecting-ip

|

||||

```

|

||||

|

||||

|

||||

## prometheus

|

||||

|

||||

metrics/stats can be enabled at URL `/.cpr/metrics` for grafana / prometheus / etc (openmetrics 1.0.0)

|

||||

@@ -1657,6 +2045,7 @@ scrape_configs:

|

||||

currently the following metrics are available,

|

||||

* `cpp_uptime_seconds` time since last copyparty restart

|

||||

* `cpp_boot_unixtime_seconds` same but as an absolute timestamp

|

||||

* `cpp_active_dl` number of active downloads

|

||||

* `cpp_http_conns` number of open http(s) connections

|

||||

* `cpp_http_reqs` number of http(s) requests handled

|

||||

* `cpp_sus_reqs` number of 403/422/malicious requests

|

||||

@@ -1708,7 +2097,7 @@ change the association of a file extension

|

||||

|

||||

using commandline args, you can do something like `--mime gif=image/jif` and `--mime ts=text/x.typescript` (can be specified multiple times)

|

||||

|

||||

in a config-file, this is the same as:

|

||||

in a config file, this is the same as:

|

||||

|

||||

```yaml

|

||||

[global]

|

||||

@@ -1719,6 +2108,18 @@ in a config-file, this is the same as:

|

||||

run copyparty with `--mimes` to list all the default mappings

|

||||

|

||||

|

||||

### GDPR compliance

|

||||

|

||||

imagine using copyparty professionally... **TINLA/IANAL; EU laws are hella confusing**

|

||||

|

||||

* remember to disable logging, or configure logrotation to an acceptable timeframe with `-lo cpp-%Y-%m%d.txt.xz` or similar

|

||||

|

||||

* if running with the database enabled (recommended), then have it forget uploader-IPs after some time using `--forget-ip 43200`

|

||||

* don't set it too low; [unposting](#unpost) a file is no longer possible after this takes effect

|

||||

|

||||

* if you actually *are* a lawyer then I'm open for feedback, would be fun

|

||||

|

||||

|

||||